Ball Aerospace has been actively developing its TotalSight Flash LiDAR system for the last six years. Flash LiDAR works much like a traditional digital camera, with the sensor taking a range and intensity snapshot with each laser pulse. The LiDAR sensor is coupled with an imaging sensor to provide a real-time color 3D visualization that's useful in military or disaster-response environments for the quick assessment and communication of changed conditions on the ground.

The TotalSight instrument is portable and easily configured, allowing users to swap out context cameras seamlessly depending on conditions or mission objectives.

Capabilities

The current system has a three-degree field of view and an internal pan mirror that lets users pan that field of view up to 20 degrees crosstrack, providing a wide field of regard. The laser and camera run at up to 30 frames per second, with all processing done onboard before the next data frame is taken. Processing includes fusing data from a context camera, whether visible, short-wavelength infrared (SWIR) or medium-wavelength infrared (MWIR), with the range data from the LiDAR camera to create a full-color 3D point cloud.

"A human sees in color, so if you give anybody a color 3D model, they really don't need any training," says Roy Nelson, senior business area manager, Laser Applications, Ball Aerospace. "Giving them 3D color, full-motion video is like viewing a video game."

Fuss-less Fusing

A key advantage of the TotalSight Flash system is that the LiDAR uses an array sensor with an X/Y grid of pixels that matches how any context camera operates: all the returns are aligned in a perfect grid that never changes or needs recalibration. This natural alignment is a large part of the reason why the data can be processed in real time.
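As a rough illustration of why a shared pixel grid makes fusion simple, the sketch below pairs each range pixel with the color pixel at the same grid index. It is not Ball Aerospace's onboard processing: the function name `fuse_frame`, the simple angular camera model, and the assumption that the context image has already been resampled to the LiDAR array's dimensions are all hypothetical.

```python
import math

def fuse_frame(ranges, colors, fov_deg=3.0):
    """Pair each range pixel with the color pixel at the same grid index.

    ranges: rows of distances in meters (None where there was no return).
    colors: rows of (r, g, b) tuples with the same shape as ranges.
    Returns a list of (x, y, z, r, g, b) points in the sensor frame.
    Assumes a square field of view centered on the optical axis.
    """
    rows, cols = len(ranges), len(ranges[0])
    half_fov = math.radians(fov_deg) / 2.0
    points = []
    for i in range(rows):
        # Elevation angle of this row's pixel centers from the optical axis.
        el = ((i + 0.5) / rows * 2.0 - 1.0) * half_fov
        for j in range(cols):
            rng = ranges[i][j]
            if rng is None:
                continue  # no laser return for this pixel
            # Azimuth angle of this column's pixel centers.
            az = ((j + 0.5) / cols * 2.0 - 1.0) * half_fov
            x = rng * math.cos(el) * math.sin(az)
            y = rng * math.sin(el)
            z = rng * math.cos(el) * math.cos(az)
            # Same (i, j) index in both grids, so no per-point matching is needed.
            points.append((x, y, z) + tuple(colors[i][j]))
    return points
```

Because the lookup is a plain index into both grids, the cost per frame is one pass over the array, which fits the real-time processing described above.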

In this image, the system is mounted to a helicopter, although it also can be used on fixed-wing aircraft as well as UASs.

"With a traditional LiDAR scanning system, you have to have all the metadata, all the GPS data, all the mirror positions, for every point," adds Nelson. "With a Flash system, you illuminate an area on the ground, so you only need the metadata for that one laser shot that applies to all the points on the array. The data volume is much less."

Rapid Response

During a disaster, the topography often changes, so having "before" imagery alongside real-time data helps determine the difference between what was and what is.

"Some events require rapid response with the need to get to these areas faster, get information faster, so the end user can know how to respond," adds Nelson. "The timeliness of data is becoming more important."

The TotalSight system shortens response timelines by quickly communicating conditions, allowing managers to allocate resources promptly to the hardest-hit areas.

"One of the 'Holy Grails' that emergency-management people have asked us about for years is the ability to measure," says Thomas D. Burns Sr., chairman and CEO, SP Global Inc., a company that has worked alongside Ball Aerospace on demonstration flights for the National Guard. "They can see that trees are down or buildings damaged, but they immediately have to start contracting to get rid of the debris, and they have to calculate how to do that. Flash LiDAR gives you the ability to quantify right away the difference in the height values and be able to assess the size of debris piles. Knowing how much needs to be taken away allows us to plan."
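The measurement Burns describes can be sketched as a difference of two height grids. This is an illustrative approach only, not the workflow used on the demonstration flights; the function name `debris_volume`, the gridded inputs, and the `min_rise_m` noise threshold are assumptions.

```python
def debris_volume(before, after, cell_size_m, min_rise_m=0.5):
    """Estimate the volume of newly piled material, in cubic meters.

    before, after: height grids (rows of heights in meters) covering the
    same ground cells, one from archived data and one from the new flight.
    cell_size_m: edge length of one square ground cell, in meters.
    min_rise_m: hypothetical threshold; smaller height gains are treated
    as measurement noise and ignored.
    """
    cell_area = cell_size_m ** 2
    volume = 0.0
    for row_before, row_after in zip(before, after):
        for h0, h1 in zip(row_before, row_after):
            rise = h1 - h0
            if rise >= min_rise_m:
                # Each cell contributes a column of height `rise`.
                volume += rise * cell_area
    return volume
```

For example, two cells of 0.5 m by 0.5 m that rose by 2 m and 1 m would contribute 0.75 cubic meters between them. In practice the threshold would need to reflect the vertical accuracy of the survey.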

SPECIFICATIONS

Sensors

The LiDAR camera comes from Advanced Scientific Concepts. The current generation is a 128 x 128 array that runs at 30 frames per second. That's set to increase to 60 frames per second and 320 x 320 with a next-generation sensor set to hit the market in late 2015. This will result in approximately a 12-times increase in the number of pixels per second. The higher pixel count can be used to increase the field of view or resolution on the ground.
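The "approximately 12-times" figure follows directly from the array sizes and frame rates quoted above. A quick check, with the helper name `pixels_per_second` chosen here for illustration:

```python
def pixels_per_second(width, height, frames_per_second):
    """Range samples delivered per second by an array sensor."""
    return width * height * frames_per_second

current = pixels_per_second(128, 128, 30)    # current-generation array
next_gen = pixels_per_second(320, 320, 60)   # announced next-generation array
ratio = next_gen / current

print(current, next_gen, ratio)  # 491520 6144000 12.5
```

The array itself grows by a factor of 6.25 in pixel count, and doubling the frame rate brings the total to 12.5.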

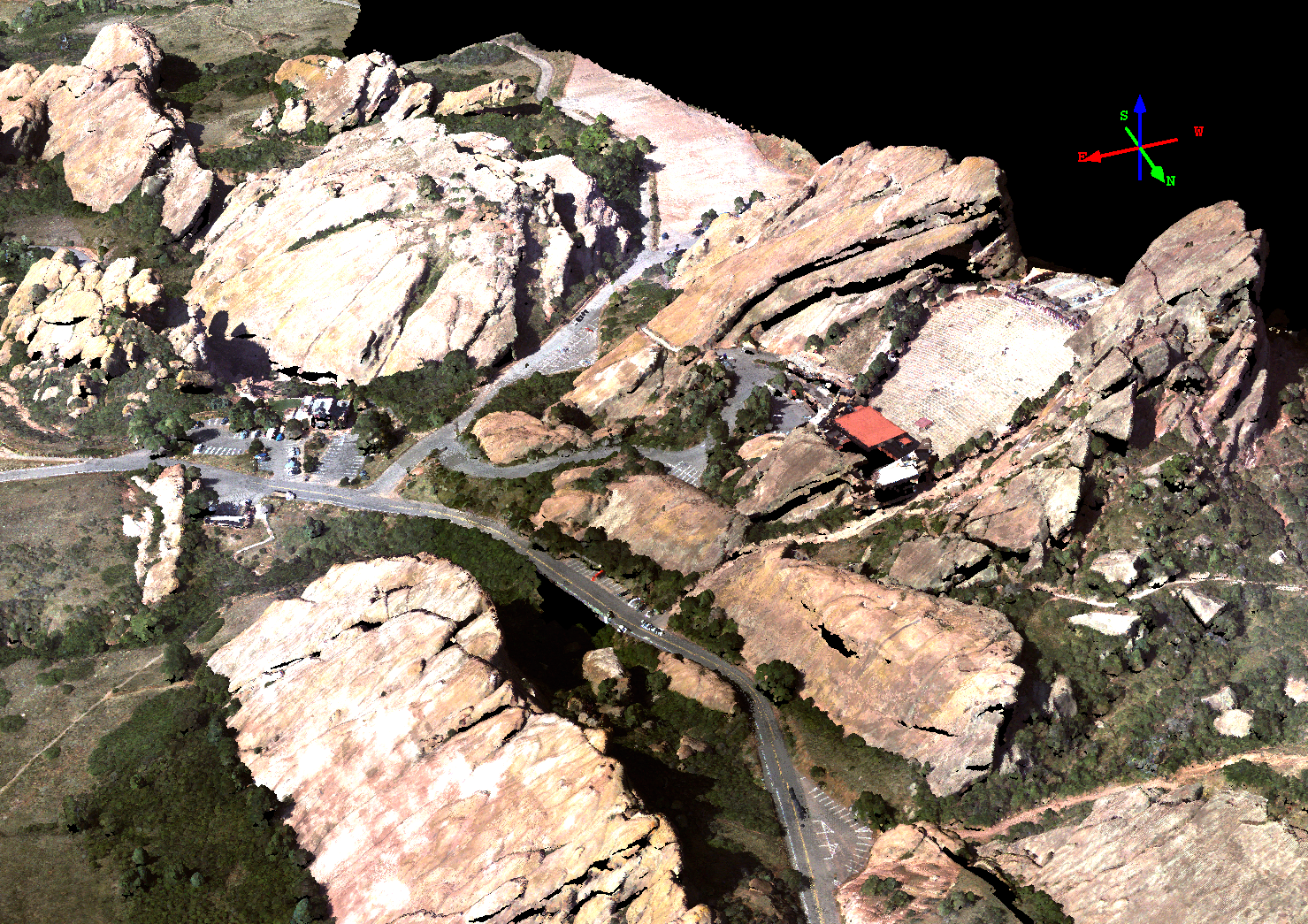

The sensor's 3D data deliverable provides a highly detailed view of current conditions that can be communicated quickly to a broad range of stakeholders. Here the famous concert venue Red Rocks is pictured.

The context camera can take several configurations, ranging from a commercial off-the-shelf color visible camera to SWIR, MWIR or long-wavelength infrared (LWIR) cameras. There are ports for up to two context cameras, giving users a choice of what they would like to fuse with the 3D data. In the infrared range, the sensor starts to pick up thermal signatures, so the system can be useful at night.

Data and Analysis

Receiving color point clouds in real time goes a long way toward speeding classification. Features such as the ground or trees are easy to recognize in color, making the extraction of information faster, more reliable and visually verifiable.

Downlink

Data rates are compatible with existing RF downlinks.

Accuracy

The system includes a GPS inertial measurement unit that provides location and the aircraft's attitude, so data is fully georegistered in near real time. Accuracy depends on the inertial navigation system on the aircraft and the number of GPS satellites acquired, so it varies, but it ranges from 10 to 50 cm.

Platforms

The TotalSight system has flown on fixed-wing and rotary-wing aircraft and was recently miniaturized to fly on Insitu's ScanEagle unmanned aircraft system (UAS).

VIDEOS:

http://www.youtube.com/watch?v=JwoEfJeHWs8