Following a few simple guidelines can unlock the cost-saving benefits of collecting and using LiDAR data.

By Jason Caldwell, director of strategic accounts, and Sanchit Agarwal, director of mapping operations, Sanborn (www.sanborn.com), Colorado Springs, Colo.

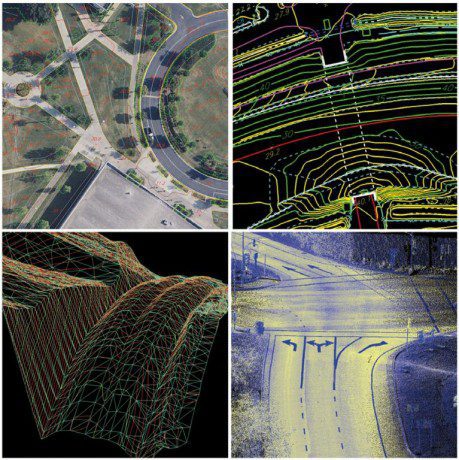

Light detection and ranging (LiDAR) technology has been available for commercial mapping applications for about 20 years. Now it's a cost-effective tool for capturing elevation models: rapid and accurate data acquisition often makes LiDAR surveying less expensive than traditional photogrammetric methods. Today, virtually all government, engineering, surveying, energy, environmental and natural resource entities recognize the value of using LiDAR for a wide range of applications.

Traditionally, LiDAR has been used for topographic mapping. The Federal Emergency Management Agency (FEMA) was an early adopter of the technology for floodplain modeling, and most organizations using the technology pre-2005 used it exclusively for topographic mapping. Since then, LiDAR users have applied the technology to a wide range of applications, including line-of-sight and viewshed analysis, solar envelope development, vegetation monitoring, habitat analysis, utility easement monitoring, pavement and asset management, volumetric calculations and dam/bridge degradation.

LiDAR's adoption across so many geospatial organizations and applications has increased the need for education about the technology and how it can be applied to business cases around the world. If you're thinking about collecting and using LiDAR data, the ability to answer the following questions will help ensure your project's success.

How will the data be used?

One of the most important aspects of designing a successful LiDAR project is to have a clear picture of which problems the technology is meant to solve. It's important to have a concise objective for how the data will be used and to clearly communicate that objective to all stakeholders, including the LiDAR vendor. Early discovery of the data's intended purpose will help avoid spending energy, time and money on data that don't meet an organization's business needs. New uses for the technology are found every year, but each application should have specific requirements for point density, accuracy and classification level.

Depending on the application, point densities can range from 1 point per 4 meters to 100 points per square meter. Users interested in bare-earth information (1- or 2-foot contours) typically can get away with a lower density, whereas other applications, such as pavement management, probably would require 100 points per square meter.
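To make these densities more tangible, the following minimal sketch converts a point density into an approximate spacing between neighboring points. It assumes points are spread roughly evenly over the ground, which real acquisitions only approximate, and it reads the low end of the range as 1 point per 4 square meters (0.25 points per square meter), which is an interpretation rather than something the text states. The function name is hypothetical.

```python
import math


def nominal_point_spacing(density_pts_per_m2: float) -> float:
    """Approximate spacing in meters between points for a given density.

    Assumes a roughly uniform distribution, so each point "owns" a square
    of area 1/density and the spacing is the side of that square.
    """
    if density_pts_per_m2 <= 0:
        raise ValueError("density must be positive")
    return 1.0 / math.sqrt(density_pts_per_m2)


# Low-density bare-earth work, a mid-range project, and pavement management.
for density in (0.25, 2.0, 100.0):
    spacing = nominal_point_spacing(density)
    print(f"{density:6.2f} pts/m^2 -> about {spacing:.2f} m between points")
```

Thinking in terms of spacing can help when judging whether a feature of a given size, such as a curb or a pavement crack, is likely to be sampled at all.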

Data accuracy that supports 2-foot contours has been common since LiDAR first was made commercially available. For years, FEMA has required accuracies that support 2-foot contours, or 18.5-cm root-mean-squared error (RMSE). During the last five years, the technology, hardware, software and supporting sensor technology have allowed LiDAR to support 1-foot contour accuracy. However, some applications require even greater accuracy. Such applications may require a nonfixed-wing approach. Accuracies as great as 3-cm vertical can be achieved and may be needed for applications such as utility asset management and design-build applications for pipelines, roads and bridges.

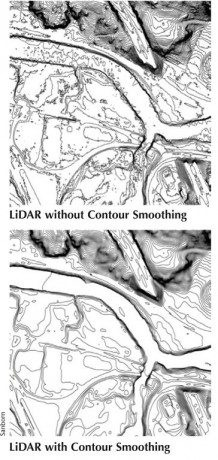

Contour smoothing eliminates vertices within the contour vector dataset to lower the overall file size and allow for a more aesthetically pleasing product.

What's the interval and accuracy of contours? How does smoothness of contours affect accuracy?

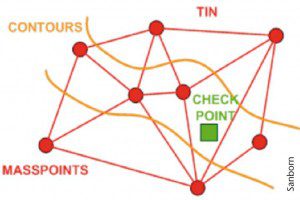

Contour datasets are a common LiDAR deliverable. It's important to understand that contours are derived from the LiDAR bare-earth surfaces. As a result, the contour vector lines developed from LiDAR data are only as accurate as the input data used to define the contours, so density and accuracy of the LiDAR bare-earth points are what should be considered when defining the accuracy and interval of the contour delivery. According to American Society for Photogrammetry and Remote Sensing (ASPRS) standards, 2-foot contour accuracy must meet 0.67-foot RMSE, and 1-foot contour accuracy must meet 0.34-foot RMSE.

Contour smoothing is the process of eliminating vertices within the contour vector dataset to lower the overall file size and allow for a more aesthetically pleasing product. LiDAR-based contours have many more vertices than traditional photogrammetrically processed contours. This issue was significant a few years ago. However, as LiDAR software packages have advanced, file sizes of the LiDAR-derived contour datasets are less of an issue. Furthermore, many end users no longer use contours within the business process chain; instead, they use surfaces or triangulated irregular networks (TINs).
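As an illustration of vertex elimination, here is a minimal sketch of the Douglas-Peucker line simplification algorithm, one widely used way to thin a polyline. The article does not say which algorithm any particular LiDAR package uses, so treat this as a stand-in technique. The `tolerance` parameter (in the same units as the coordinates) is the largest deviation from the original line that the simplified contour is allowed to introduce, which is where the trade-off with accuracy shows up.

```python
import math


def _distance_to_line(p, a, b):
    """Perpendicular distance from point p to the line through a and b."""
    (x, y), (x1, y1), (x2, y2) = p, a, b
    dx, dy = x2 - x1, y2 - y1
    if dx == 0 and dy == 0:  # a and b coincide
        return math.hypot(x - x1, y - y1)
    return abs(dy * (x - x1) - dx * (y - y1)) / math.hypot(dx, dy)


def simplify_contour(points, tolerance):
    """Drop vertices that deviate from the simplified line by <= tolerance."""
    if len(points) < 3:
        return list(points)
    # Find the interior vertex farthest from the chord joining the endpoints.
    max_dist, index = 0.0, 0
    for i in range(1, len(points) - 1):
        d = _distance_to_line(points[i], points[0], points[-1])
        if d > max_dist:
            max_dist, index = d, i
    if max_dist <= tolerance:
        return [points[0], points[-1]]  # every interior vertex is removable
    # Otherwise keep that vertex and simplify each half recursively.
    left = simplify_contour(points[: index + 1], tolerance)
    right = simplify_contour(points[index:], tolerance)
    return left[:-1] + right  # avoid duplicating the shared vertex


noisy_contour = [(0, 0), (1, 0.05), (2, -0.04), (3, 0.02), (4, 0)]
print(simplify_contour(noisy_contour, tolerance=0.1))
```

A larger tolerance gives a smaller, cleaner-looking file but lets the contour wander farther from the surface it was derived from, so the tolerance should be chosen with the project's accuracy requirement in mind. The recursive form is fine for a sketch; very long contours may call for an iterative version.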

Regardless, some customers desire a contour product that has a higher aesthetic quality (i.e., less noise). Several methods can be used to develop a smoother-looking product, but the user and data provider must balance the smoothing process with accuracy considerations. LiDAR processing software can compress the points used as input for contour development. This process uses Model Key Points within the surface for input when creating the contours. The remaining non-Model Key Points of the LiDAR bare-earth points are eliminated from the process. Sophisticated algorithms are used to intelligently select Model Key Points at appropriate locations, such as a flat area in a parking lot. Fewer points are required to accurately define a parking lot than a river corridor.

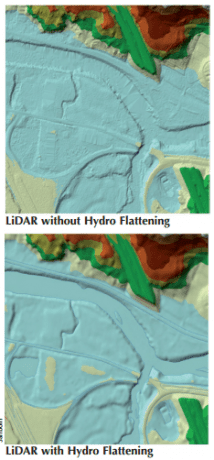

Hydro flattening is becoming a standard requirement for most LiDAR projects that include surface applications such as floodplain modeling.

Do I need hydro flattening? Can I define my own parameters for hydro enforcement?

Hydro flattening is becoming a standard requirement for most LiDAR projects that include surface applications such as floodplain modeling. For applications that require hydrography/hydraulics (lakes and rivers) to be flat and flow downhill, raw or filtered LiDAR data often are unsuitable. Due to systematic errors and failure to penetrate vegetation near streams, contours generated from LiDAR-derived TINs can show water flowing uphill. In addition, automatic filtering typically doesn't cut the terrain to allow water to flow through culverts or low-lying bridges.

Hydrological rules are imposed in two ways. First, open water bodies are forced to be flat and horizontal. This eliminates contours that stray into lakes from riverbanks. Second, operators can add break lines to force culverts, drains and small bridges to be cut according to required hydrological rules.
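The first rule can be sketched on a small gridded surface. This minimal example assumes the water body has already been delineated as a boolean mask and, as a simplifying assumption, sets every water cell to the lowest elevation found inside the mask; production workflows typically derive the water level from break lines and project specifications instead. It does not attempt the second rule (cutting culverts and bridges) or downhill-flowing streams.

```python
def flatten_water_body(dem, water_mask):
    """Return a copy of `dem` with all masked cells set to one flat elevation.

    dem        -- list of rows of elevations
    water_mask -- same shape as dem; True where the cell is open water
    The flat level used here is the minimum elevation inside the mask,
    which is an illustrative choice, not a published rule.
    """
    water_values = [
        dem[r][c]
        for r, row in enumerate(water_mask)
        for c, is_water in enumerate(row)
        if is_water
    ]
    if not water_values:
        return [row[:] for row in dem]  # nothing to flatten
    level = min(water_values)
    return [
        [level if water_mask[r][c] else z for c, z in enumerate(row)]
        for r, row in enumerate(dem)
    ]


dem = [
    [10.0, 10.2, 10.1],
    [9.8, 9.4, 9.9],
    [9.7, 9.6, 10.0],
]
lake = [
    [False, False, False],
    [False, True, True],
    [False, True, False],
]
for row in flatten_water_body(dem, lake):
    print(row)
```

Once the lake cells share a single elevation, contours generated from the surface can no longer wander across the water body.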

The U.S. Geological Survey (USGS) has created specifications that define parameters for hydro flattening. The features are defined as inland ponds and lakes with surface areas larger than 2 acres and inland streams and rivers with 100-foot nominal width. The parameters USGS uses to define minimum size for lakes and streams may not apply to all regions. As a result, the agency allows custom definitions for hydro enforcement minimum sizes. For the most part, projects anywhere in the noncoastal western United States will require custom definitions for hydro enforcement because of the limited number of water bodies found within the region.

What level of point classification do I need?

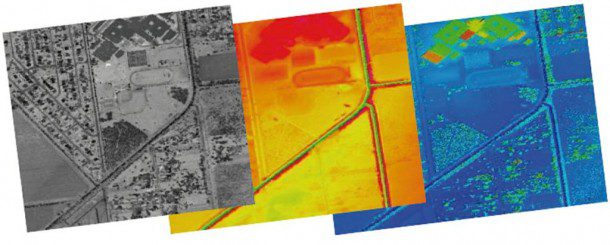

For years, LiDAR data were delivered as a bare-earth digital elevation model (DEM) in ASCII format; however, LiDAR-derived elevation products contain much more information than just the bare-earth points. Most users had limited knowledge of how to apply the nonground points, and most software was unable to render nonterrain points.

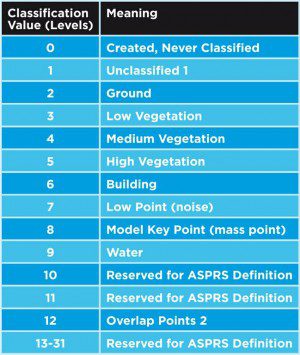

Several years ago, ASPRS drafted a classification schema that provided a standardized classification system. Now the ASPRS standard most users specify in a scope of work is either LAS 1.2 or LAS 1.3. The table at right documents the LAS 1.3 classification schema. The number of classes used within the schema is user defined. For example, one user may define the classification as ground and unclassified, while another user prefers to see the unclassified points further classified into additional levels, including vegetation, buildings and water. The LAS 1.3 classification schema also allows users to further customize the schema with additional levels; these can be assigned to classes 13-31. In general terms, the more classification levels used, the greater the cost.
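For readers who work with classified point clouds directly, the following minimal sketch tallies points by class code. The code-to-name mapping reflects the standard ASPRS classes as defined for LAS 1.1 through 1.3; codes without a standard meaning are reported as reserved. The function names and the sample list of codes are hypothetical, and reading the codes from an actual LAS file is left out.

```python
from collections import Counter

# Standard ASPRS class codes for LAS 1.1-1.3 point records.
ASPRS_CLASSES = {
    0: "Created, never classified",
    1: "Unclassified",
    2: "Ground",
    3: "Low vegetation",
    4: "Medium vegetation",
    5: "High vegetation",
    6: "Building",
    7: "Low point (noise)",
    8: "Model key-point",
    9: "Water",
    12: "Overlap points",
}


def class_name(code: int) -> str:
    """Human-readable name for a class code in the 0-31 range."""
    if not 0 <= code <= 31:
        raise ValueError("LAS 1.1-1.3 class codes range from 0 to 31")
    return ASPRS_CLASSES.get(code, f"Reserved ({code})")


def summarize_classes(codes):
    """Count how many points fall in each class."""
    counts = Counter(codes)
    return {class_name(code): n for code, n in sorted(counts.items())}


sample_codes = [2, 2, 1, 6, 9, 2, 5, 5]
for name, count in summarize_classes(sample_codes).items():
    print(f"{name}: {count}")
```

A summary like this is a quick way to confirm that a delivery actually contains the classes requested in the scope of work.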

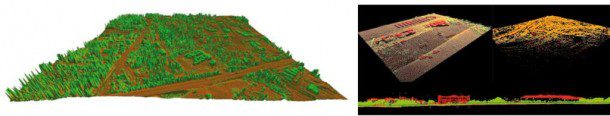

LiDAR classification is a two-step process. The initial LiDAR point-cloud classification is performed using automated macros designed to classify points to the project's required classes. The macros are specific for each type of geography within a project, taking into consideration terrain relief; ground cover; natural and man-made features, including rivers, streams, lakes, ponds, swamps, marshes and urban landscapes; and developments such as buildings and bridges. The macros exploit the information related to the number of returns of a pulse, elevation, slope, and height from ground and other terrain characteristics to classify the point cloud automatically.
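A toy version of such a macro might look like the sketch below. It is illustrative only: the thresholds are invented for the example, the record type is hypothetical, and it assumes a height above ground has already been computed for each return, which in practice requires a ground surface from an earlier filtering step. Real macros are tuned per terrain type and use more inputs, such as slope.

```python
from dataclasses import dataclass


@dataclass
class LidarReturn:
    height_above_ground_m: float  # assumed to be precomputed
    return_number: int
    number_of_returns: int


def classify(pt, ground_tol_m=0.15, low_veg_max_m=0.5, med_veg_max_m=2.0):
    """Assign an ASPRS class code using simple, hypothetical rules."""
    h = pt.height_above_ground_m
    if h < -ground_tol_m:
        return 7  # well below the ground surface: low point (noise)
    if h <= ground_tol_m:
        return 2  # within tolerance of the surface: ground
    if pt.number_of_returns > 1:
        # Pulses that split into several returns usually hit vegetation.
        if h <= low_veg_max_m:
            return 3  # low vegetation
        if h <= med_veg_max_m:
            return 4  # medium vegetation
        return 5  # high vegetation
    # Single return well above ground: could be a roof, a vehicle or dense
    # canopy, so leave it unclassified for the manual review step.
    return 1


returns = [
    LidarReturn(0.05, 1, 1),
    LidarReturn(8.0, 1, 3),
    LidarReturn(6.0, 1, 1),
]
print([classify(r) for r in returns])
```

Leaving ambiguous points unclassified rather than guessing keeps the automated pass conservative and pushes the hard cases to the editors.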

Following the automated classification process, a supervised or manual classification occurs. A team of editors reviews the tiles to ensure the points are classified correctly. 3-D tools include cross-section or profile views of points to aid classification. Surface model visualization with rapid contour development is used to spot bare-earth blunders for re-classification. Color triangles display TINs, colored grids provide shaded relief, and other sophisticated visualization tools support the manual classification.

The image on the left shows a simple ground (brown) and vegetation (green) classification; the image on the right also includes buildings (red).

Because manual classification is so hands-on, it's one of the most labor-intensive (and hence most expensive) parts of the LiDAR production workflow. The required level of classification depends on the intended use of the LiDAR data. For example, if the LiDAR data are to be used only for determining ground elevation, there's no need to classify buildings and vegetation in separate classes. On the other hand, if the LiDAR data are to be used for vegetation analysis, vegetation classification becomes a natural requirement. Some of the most common classification levels are detailed in the table above.

How can I confirm data quality and accuracy, and how many survey points do I need to check accuracy?

There are several ways to measure LiDAR data's quality and accuracy. Point density, spatial accuracy and classification accuracy are three things that can be sampled to confirm overall data quality.

To measure vertical accuracy, independently surveyed checkpoints are used, and they should be collected as close as possible to where the LiDAR data were acquired. The accuracy of the checkpoints should exceed the accuracy of the LiDAR data. For standard-size projects, such as citywide or countywide areas, the assessment should use at least 20 vertical checkpoints. Checkpoints should be located in areas of open and flat terrain where slopes don't exceed 20 percent for 5 meters in all directions.
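The checkpoint comparison comes down to a root-mean-squared error of the elevation differences. The sketch below computes it and compares the result against the RMSE limits quoted earlier in this article for 2-foot and 1-foot contours; the function names are hypothetical, and the sample elevations are made up for illustration.

```python
import math

# RMSE limits in feet as quoted in this article for each contour interval.
ARTICLE_RMSE_LIMITS_FT = {2.0: 0.67, 1.0: 0.34}
MIN_CHECKPOINTS = 20  # minimum suggested in the text for standard projects


def vertical_rmse(lidar_z, survey_z):
    """RMSE between LiDAR-derived and surveyed checkpoint elevations."""
    if len(lidar_z) != len(survey_z) or not lidar_z:
        raise ValueError("need two non-empty lists of equal length")
    squared = [(l - s) ** 2 for l, s in zip(lidar_z, survey_z)]
    return math.sqrt(sum(squared) / len(squared))


def supports_contour_interval(rmse_ft, interval_ft):
    """True if the RMSE meets the quoted limit for the contour interval."""
    return rmse_ft <= ARTICLE_RMSE_LIMITS_FT[interval_ft]


# Made-up elevations in feet; a real assessment would use 20 or more points.
lidar = [101.2, 98.7, 105.1, 99.9]
survey = [101.0, 99.0, 105.0, 100.3]
rmse = vertical_rmse(lidar, survey)
print(f"RMSE = {rmse:.2f} ft from {len(lidar)} checkpoints")
print("Supports 2-ft contours:", supports_contour_interval(rmse, 2.0))
print("Supports 1-ft contours:", supports_contour_interval(rmse, 1.0))
if len(lidar) < MIN_CHECKPOINTS:
    print("Too few checkpoints for a formal assessment")
```

Keeping the checkpoint survey independent of the data used to calibrate the LiDAR is what makes this number meaningful.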

FEMA further defined how accuracy can be assessed within varying land cover found within a project area: use at least 60 checkpoints split into three land cover classes. However, depending on the project area, more checkpoints may be required based on additional land cover categories found within the project's location.

FEMA defines land cover classes as follows:

1. Bare earth and low grass (e.g., plowed fields, lawns, golf courses)

2. High grass, weeds and crops (e.g., hay fields, corn fields, wheat fields)

3. Brush lands and low trees (e.g., chaparrals, mesquite)

4. Forested, fully covered by trees (e.g., hardwoods, evergreens, mixed forests)

5. Urban areas (e.g., high, dense manmade structures)

6. Sawgrass

7. Mangrove

When should I consider completing a LiDAR acquisition?

Several factors influence when LiDAR data should be acquired.

Day or night?

LiDAR systems are active sensors, so flights can be conducted day or night.

Nighttime acquisitions typically offer some benefits over daytime acquisitions because there is much less turbulence in the air and clutter (e.g., vehicles) on the ground. However, requiring flights to be conducted only at night will lengthen the acquisition schedule and increase cost.

Does weather matter?

Ground and air conditions during acquisition can affect the quality of the final product and limit the acquisition's success. The conditions on the ground and between the laser and the ground should be reviewed prior to data acquisition. Data acquisition should be avoided if there's any fog, smoke or high levels of moisture (rain, snow, clouds, etc.). Additionally, if snow is on the ground or a large amount of standing water (flooding) is apparent, then data shouldn't be acquired. LiDAR pulses reflect well off snow, so snow accumulation will bias the ground elevation. However, there could be applications for measuring snow pack. One would need to have accurate ground elevation, which could be compared with data collected with the snow pack, to provide a depth calculation. Standing water causes significant issues for LiDAR, as most standing water will not reflect the laser pulses well.
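The snow-depth idea amounts to subtracting one gridded surface from another. A minimal sketch, assuming both surfaces are already on the same grid with the same cell size and vertical datum (the function name and sample values are hypothetical):

```python
def snow_depth(bare_earth, snow_surface):
    """Cell-by-cell depth: snow-covered surface minus snow-free ground."""
    if len(bare_earth) != len(snow_surface) or any(
        len(g) != len(s) for g, s in zip(bare_earth, snow_surface)
    ):
        raise ValueError("grids must have the same shape")
    return [
        [round(s - g, 3) for g, s in zip(ground_row, snow_row)]
        for ground_row, snow_row in zip(bare_earth, snow_surface)
    ]


ground = [[100.0, 101.0], [102.0, 103.5]]  # snow-free acquisition (m)
winter = [[100.5, 101.25], [102.75, 104.0]]  # snow-on acquisition (m)
print(snow_depth(ground, winter))
```

Because the depth is a difference of two measurements, the vertical errors of both acquisitions contribute to the uncertainty of the result.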

What's the best time of year?

Intended use will affect the time of year the LiDAR data are acquired. Outside of ground conditions dictated by weather, vegetation is the primary concern. LiDAR technology doesn't penetrate vegetation; however, laser pulses can reach the ground through gaps in the vegetation as long as light can be seen from under the tree canopy. Regardless, if the intended use of the LiDAR data is for contour mapping, hydrography/hydrologic assessment or even building extraction, then a leaf-off approach will result in better data. If the intended use of the data is for vegetation modeling of any kind, then a leaf-on flight is probably a better choice. Many areas in the United States don't have leaf-off conditions; even so, LiDAR is still the best technology for generating ground surfaces in these areas.

Are there special considerations for coastal communities?

Users in coastal communities may be interested in completing LiDAR acquisitions during a particular tidal event, typically low tide, to measure as much of the ground as possible, because traditional LiDAR sensors cannot penetrate water.

What software should I use to visualize and manipulate the data?

Most LiDAR system manufacturers have stayed away from offering software solutions and workflows for LiDAR data processing. Hence, until the last few years, LiDAR vendors used custom software and workflows to process LiDAR data into final usable products.

As LiDAR technology became mainstream, a handful of software companies started offering end-to-end, off-the-shelf solutions for processing the data through the workflow. Due to the mathematical complexity of the LiDAR boresight and calibration processes, software choices for LiDAR calibration remain limited. However, there are a variety of packages and tools available for visualizing and manipulating LiDAR data in innovative ways (see Stereo LiDAR Data Collection Boosts Productivity below and the LiDAR Solutions Showcase at the bottom of the Earth Imaging Journal home page). Recognizing the extent of the technology's adoption, Esri started supporting LAS files (LiDAR format) as a native format in the latest version of its ArcGIS software.

Moreover, with recent hardware and electronic breakthroughs, new-generation LiDAR sensors can collect data far more efficiently and at much higher densities. The software that supports such LiDAR data production, manipulation and visualization tasks is trying to catch up with the pace of the technology. Although the user community expects vendors to deliver LiDAR products on Web/cloud-based platforms, few applications have been successful in offering LiDAR data services on the Web. However, there are some promising applications using graphics processing unit (GPU)-based processing systems.

What type of data formats/deliveries should I request? What file formats are preferred?

A good understanding of the intended use of the LiDAR data lays the foundation for putting together the list of desired deliverables from the LiDAR vendor. It's also important to have a good understanding of various LiDAR software packages, the file formats supported by the software, file-size limitations, etc. Depending on the scope of work, a typical LiDAR product offering can be divided into the following six categories:

1. LiDAR formats (random spacing)

– LAS – can range from LAS 1.1 to LAS 1.4 formats

– ASCII – can include x,y,z and any other LAS attributes

– Esri Shapefile, Esri TIN

2. Raster DEM formats (regular spacing/gridded)

– Arc Binary GRID (also known as ArcInfo Lattice)

– Arc ASCII GRID

– ERDAS img

3. Imagery

– Intensity images (any raster format)

– Variety of rendering types (hillshade, slope, aspect, etc.)

4. Contours

– GIS format (e.g., Esri Shapefile, Esri Personal Geodatabase)

– CAD formats (e.g., DXF, DGN, DWG)

5. Breakline/planimetric data

– GIS formats (e.g., Esri Shapefile, Esri Personal Geodatabase)

– CAD formats (e.g., DXF, DGN, DWG)

6. Metadata and reports

– FGDC-compliant metadata for all the products

– Survey report

– Vertical accuracy report, calibration report

As LiDAR makes its way into an ever-increasing variety of applications, some nonconventional specific products and analysis might be required as deliverables.

What's the tile size for the deliverables?

Over the years, many users have been eager to put newly delivered LiDAR data to immediate use. With the increase in the density of LiDAR point clouds, the file sizes of all the products have grown dramatically. However, common information technology (IT) infrastructure and software may hinder the ability to work with such large file sizes. Currently, off-the-shelf software is becoming more advanced and is able to handle the larger file sizes better. Moreover, storage costs are a fraction of what they were 10 years ago.

Although often overlooked, the need for an appropriate IT infrastructure should be accounted for in a project's planning phases to avoid any last-minute surprises. Also, there needs to be a comprehensive plan for disseminating the data to all the project's stakeholders. For example, one of the components of the planning process is to realize that all the stakeholders might not need the full portfolio of products. Another item to consider is that all the participating groups might not need the LiDAR data and products at full resolution. Here is an example of the file size of a 1-square-mile tile at 2 points per square meter:

LAS File Size: 248,408 KB

ASCII All Points Size: 292,766 KB

These file sizes don't include products beyond the LiDAR points; most users request additional products such as hillshades, contours, gridded DEMs, etc. The sizes of the additional products also should be considered for planning purposes. For a typical LiDAR project, the rule of thumb is that all the final product deliveries take about 4-6 times the total size of the LAS deliverables. With higher-density LiDAR systems (50-100 points per square meter), adjust the tile sizes to keep them manageable.
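For planning purposes, the example tile size and the 4-6 times rule of thumb can be combined into a rough storage estimate. The sketch below makes two assumptions that are not in the text: LAS size scales linearly with both area and point density, and 1 KB is 1,024 bytes. The function name is hypothetical, and the result should be treated as an order-of-magnitude figure only.

```python
# LAS size of a 1-square-mile tile at 2 points per square meter (from above).
LAS_KB_PER_SQ_MILE_AT_2_PPSM = 248_408


def estimate_storage_gb(area_sq_miles, density_pts_per_m2, multipliers=(4, 6)):
    """Return (LAS size, low total, high total) in gigabytes.

    Assumes LAS size grows linearly with area and density, and applies the
    4-6x rule of thumb to cover all final deliverables.
    """
    las_kb = LAS_KB_PER_SQ_MILE_AT_2_PPSM * area_sq_miles * (density_pts_per_m2 / 2.0)
    las_gb = las_kb / 1024**2  # KB -> GB, taking 1 KB = 1,024 bytes
    low, high = multipliers
    return las_gb, las_gb * low, las_gb * high


las_gb, total_low, total_high = estimate_storage_gb(500, 2.0)
print(f"LAS only: {las_gb:,.0f} GB")
print(f"All deliverables: {total_low:,.0f} to {total_high:,.0f} GB")
```

Running the same estimate at a higher density shows quickly why tile sizes need to shrink as point density grows.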

What parameters affect cost?

Many parameters affect cost, but they can be separated into two distinct categories: client specifications and earth geography.

Area size and shape will affect cost. The larger the project area the greater the overall cost; there are economies of scale, so the overall per-square-mile cost can be reduced as the area size grows, although the overall total cost will be greater. Additionally, the shape of the area affects cost. A large regular-shaped area, such as a square or rectangle, will be more efficient to collect than multiple smaller areas or an irregular-shaped area such as a corridor.

Point density can drastically affect cost. In general terms, the greater the point density the greater the cost.

Accuracy specifications are somewhat related to point density. The greater the accuracy requirement the greater the density of points required. Again, in general terms, the higher the level of accuracy required the greater the cost.

The level of point classification can affect cost. A request that simply requires processing for ground and nonground data only will see a lower overall cost than a requirement for classifying ground, vegetation, structures and hydrography.

Land cover characteristics can also affect cost, especially when classification specifications require structures. A dense urban area is more difficult and takes more time to process than a rural area.

Weather plays a large role in terms of cost. As discussed previously, LiDAR shouldn't be flown when rain, fog and/or smoke exists between the sensor and the target. LiDAR vendors review historical weather statistics to determine estimated weather factors that correspond directly to estimated no-fly days. Depending on the project's location, the user has limited control over this factor. Each geographical region may offer times of the year that are better than others. As a result, if the user specifies a time of year with favorable weather, the collection may cost less than one scheduled during a period of inclement weather.

Terrain will affect cost. The faster the terrain changes within a project area, the more difficult it becomes to maintain a specific point density and accuracy. As a result, more flight lines typically are required, which adds cost.

Special parameters that restrict flight times also add cost. Tidal flight requirements are a good example. The more restrictions placed on the acquisition parameters the longer the data acquisition will take, which increases cost.

Stereo LiDAR Data Collection Boosts Productivity

By Jane Smith, Cardinal Systems (www.cardinalsystems.net), Flagler Beach, Fla.

Mapping companies have been adding light detection and ranging (LiDAR) technology to their tool box for years to aid applications such as power line mapping, dense vegetation mapping, pipeline surveys and even high-accuracy highway mapping. Little did they know what a perfect mix LiDAR and traditional photogrammetry would become.

A few years ago, many dyed-in-the-wool photogrammetrists probably would have agreed that LiDAR data were impressive. Massive amounts of data were found resting on the ground.

But as users began to zoom into the data in 3-D, using photographic stereo models as a backdrop, accuracy limitations caused by noise and crude bare-earth processing became apparent. The datasets were hard to deal with, and traditional data collection methods over LiDAR point clouds were slow and cumbersome.

Now software breakthroughs in LiDAR feature extraction have accelerated the quantity and increased the quality of data collected. Less time is spent collecting and editing the data. Digitizing in true 3-D stereo allows data to be collected more accurately, as stereo's fundamental ability to see the data from any viewpoint, origin or scale is critical to placing accurate points on the ground.

Routines have been created within the stereo environment to semi-automate feature extraction, such as cursor draping and high- and low-point search. Cursor draping holds the cursor to the LiDAR surface in the z axis as the cursor is moved in the 3-D viewing space. High- and low-point search is typically used to find the highest and lowest point in a specific area of a point cloud, such as the top of a curb and the bottom of a gutter.

LiDAR software development has focused on achieving greater efficiency and streamlining the mapping process. The workflow constantly changes, but the deliverable often is the same. The challenge is to integrate steps that will achieve the same results faster.

Applying new routines while viewing data stereoscopically improves vector accuracy and enables instant point cloud quality control. Adopting more advanced methods of feature extraction is critical for gaining a competitive edge in today's geospatial marketplace.