How can a forest be red and a cloud blue? It depends on the processes used to transform satellite measurements into images.

Natural- and false-color images from NASA's MESSENGER mission to Mercury show plant-covered land from the Amazon rainforest to North American forests.

In our photo-saturated world, it's natural to think of the images on NASA's Earth Observatory website as snapshots from space. But most aren't. Though they may look similar, photographs and satellite images are fundamentally different. A photograph is made when light is focused and captured on a light-sensitive surface such as film or a charge-coupled device in a digital camera. A satellite image is created by combining measurements of the intensity of certain wavelengths of light, both visible and invisible to human eyes.

Why does the difference matter? When we see a photo where the colors are brightened or altered, we think of it as artful (at best) or manipulated (at worst). We also have that bias when we look at satellite images that don't represent the Earth's surface as we see it. That forest is red, we think, so the image can't possibly be real.

In reality, a red forest is just as real as a dark green one. Because satellites collect information beyond what human eyes can see, images made from other wavelengths of light look unnatural to us. We call these false-color images. To understand what they mean, it's necessary to understand exactly what a satellite image is.

Satellite instruments gather an array of information about Earth. Some of it is visual, some of it is chemical and some of it is physical. In fact, remote sensing scientists and engineers are endlessly creative about what they can measure from space, developing satellites with a wide variety of tools to tease information out of our planet. Some methods are active, bouncing light or radio waves off Earth and measuring the energy returned; light detection and ranging (LiDAR) and radar technology are good examples. But the majority of instruments are passive; that is, they record light reflected or emitted by Earth's surface.

These observations can be turned into data-based maps that measure everything from plant growth to cloudiness. But data also can become photo-like natural-color images or false-color images. It all depends on the process used to transform satellite measurements into images.

Seeing the Light

So what does a satellite imager measure to produce an image? It measures light we see and don't see. Light is a form of energy, also known as electromagnetic radiation, that travels in waves. All light travels at the same speed in a vacuum, but the waves aren't all the same. The distance from one wave crest to the next (the wavelength) is shorter for high-energy waves and longer for low-energy waves.

Visible light comes in wavelengths of 400 to 700 nanometers, with violet having the shortest wavelengths and red having the longest. Infrared light and radio waves have longer wavelengths and lower energy than visible light, while ultraviolet light, X-rays and gamma rays have shorter wavelengths and higher energy.
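The inverse link between wavelength and energy is the Planck relation, E = hc/λ, which this article describes only in words. A minimal sketch of that arithmetic follows; the function name is hypothetical, and the constants are the exact SI values.

```python
# Photon energy from wavelength via the Planck relation, E = h * c / wavelength.
PLANCK_H = 6.62607015e-34        # Planck constant, J*s (exact SI value)
SPEED_OF_LIGHT = 299_792_458.0   # speed of light in a vacuum, m/s (exact SI value)
JOULES_PER_EV = 1.602176634e-19  # one electron volt in joules (exact SI value)

def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of a single photon, in electron volts, for a wavelength in nanometers."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_H * SPEED_OF_LIGHT / wavelength_m / JOULES_PER_EV

for label, nm in [("violet edge", 400), ("red edge", 700), ("thermal infrared", 10_000)]:
    print(f"{label:>16}: {nm:>6} nm -> {photon_energy_ev(nm):.3f} eV")
```

A 400-nanometer violet photon carries roughly 3.1 eV, a 700-nanometer red photon about 1.8 eV, and a 10,000-nanometer thermal-infrared photon only about 0.12 eV: longer wavelength, lower energy.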

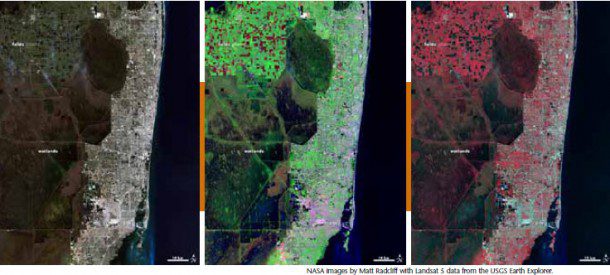

The natural-color image at left shows southeast Florida in red, green and blue light. The false-color image in the middle combines SWIR, NIR and green light. At right, southeast Florida is shown in NIR, red and green light.

Most of the electromagnetic radiation that matters for Earth-observing satellites comes from the sun. When sunlight reaches Earth, the energy is absorbed, transmitted or reflected. Every surface or object absorbs, emits and reflects light uniquely depending on its chemical makeup. Chlorophyll in plants, for example, absorbs red and blue light but reflects green and infrared; this is why leaves appear green. This unique absorption and reflection pattern is called a spectral signature.

Like Earth's surfaces, gases in the atmosphere also have unique spectral signatures, absorbing some wavelengths of electromagnetic radiation and emitting others. Gases also let a few ranges of wavelengths pass through largely unimpeded. Scientists call these ranges atmospheric windows, and satellite sensors are often tuned to measure light through them.

Some satellite instruments also directly measure the energy emitted by objects. Everything gives off energy, usually in the form of heat (thermal infrared radiation). The hotter an object is, the shorter the peak wavelength it emits. At about 400°C (750°F), the temperature of an electric stove burner set to high, the emitted light begins to be visible. The colder an object is, the longer the peak wavelength it emits.
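The "hotter means shorter peak wavelength" rule is Wien's displacement law: peak wavelength = b / T, with T in kelvin. A minimal sketch, treating each object as an ideal blackbody (an assumption; real surfaces emit less efficiently). The function name and the example temperatures are illustrative.

```python
# Wien's displacement law: peak wavelength = b / T, with T in kelvin.
WIEN_B_M_K = 2.897771955e-3  # Wien's displacement constant, meter-kelvin

def peak_wavelength_nm(temperature_c: float) -> float:
    """Wavelength (nm) at which a blackbody at temperature_c (Celsius) emits most strongly."""
    temperature_k = temperature_c + 273.15
    return WIEN_B_M_K / temperature_k * 1e9

examples = {
    "Earth's surface (~15°C)": 15,
    "stove burner on high (~400°C)": 400,
    "Sun's surface (~5,500°C)": 5_500,
}
for label, temp_c in examples.items():
    print(f"{label}: peak near {peak_wavelength_nm(temp_c):,.0f} nm")
```

Earth's surface peaks near 10,000 nanometers, in the thermal infrared. The 400°C burner still peaks in the infrared (near 4,300 nanometers). The visible glow comes from the short-wavelength tail of its emission, not from the peak. The Sun peaks near 500 nanometers, in visible light.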

Turning Wavelength Data into an Image

Satellite instruments carry many sensors, each tuned to a narrow range, or band, of wavelengths. Viewing the output from just one band is a bit like looking at the world in shades of gray. The brightest spots are areas that reflect or emit a lot of that wavelength of light, and darker areas reflect or emit little, if any.
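Turning one band into shades of gray amounts to mapping its measured values onto display brightness. The sketch below assumes a simple linear stretch between the 2nd and 98th percentiles. That is a common choice, but it is not necessarily what Earth Observatory uses, and the function name is hypothetical.

```python
import numpy as np

def band_to_grayscale(band: np.ndarray, low_pct: float = 2.0,
                      high_pct: float = 98.0) -> np.ndarray:
    """Map one band of measurements to 8-bit gray tones (0 = black, 255 = white).

    Values at or below the low percentile become black, values at or above the
    high percentile become white, and values in between are scaled linearly.
    """
    band = band.astype(np.float64)
    lo, hi = np.percentile(band, [low_pct, high_pct])
    if hi <= lo:  # flat band: nothing to stretch, return all black
        return np.zeros(band.shape, dtype=np.uint8)
    scaled = (band - lo) / (hi - lo)
    return (np.clip(scaled, 0.0, 1.0) * 255).round().astype(np.uint8)
```

Clipping at percentiles rather than at the absolute minimum and maximum keeps a few extreme pixels, such as sun glint or deep shadow, from compressing everything else into a narrow range of grays.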

To make a satellite image, researchers choose three bands and represent each in tones of red, green or blue. Because most visible colors can be created by combining red, green and blue light, the red, green and blue images are combined to get a full-color representation of the world.

A natural or true-color image combines actual measurements of red, green and blue light. The result looks like the world as humans see it.

A false-color image uses at least one nonvisible wavelength, though that band is still represented in red, green or blue. As a result, the colors in the final image may not be what you expect them to be. For example, grass isn't always green. Such false-color band combinations reveal unique aspects of the land or sky that might not be visible otherwise.
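The compositing step described above can be sketched with NumPy, assuming the three bands are already aligned on the same pixel grid. The function names and the random 4x4 "scenes" are hypothetical stand-ins for real measurements. The only difference between natural and false color is which band is passed into which display channel.

```python
import numpy as np

def stretch(band: np.ndarray) -> np.ndarray:
    """Linearly rescale one band to the 0-1 range (min-max stretch)."""
    band = band.astype(np.float64)
    lo, hi = band.min(), band.max()
    if hi == lo:  # flat band: return all zeros rather than divide by zero
        return np.zeros_like(band)
    return (band - lo) / (hi - lo)

def composite(red_channel: np.ndarray, green_channel: np.ndarray,
              blue_channel: np.ndarray) -> np.ndarray:
    """Stack three co-registered bands into a (rows, cols, 3) RGB array.

    Whatever band is passed as red_channel is displayed in red, and so on.
    """
    if not (red_channel.shape == green_channel.shape == blue_channel.shape):
        raise ValueError("all three bands must cover the same pixel grid")
    return np.dstack([stretch(red_channel), stretch(green_channel), stretch(blue_channel)])

# Hypothetical 4x4 scenes standing in for real band measurements.
rng = np.random.default_rng(0)
red, green, blue, nir = (rng.random((4, 4)) for _ in range(4))

natural_color = composite(red, green, blue)  # each visible band shown as itself
false_color = composite(nir, red, green)     # NIR shown as red, so plants turn red
```

Each band is stretched independently here for simplicity. Published images are usually tuned more carefully so that colors stay balanced across a scene.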

The series of Landsat images above of southeastern Florida and the Northern Everglades illustrates why you might want to see the world in false color. The natural-color image shows dark green forest, light green agriculture, brown wetlands, silver urban areas (the city of Miami), and turquoise offshore reefs and shallows. These colors are similar to what you would see from an airplane.

The second image shows the same scene in green, near-infrared (NIR) and shortwave-infrared (SWIR) light. In this false-color band combination, plant-covered land is bright green, water is black and bare earth ranges from tan to pink. Urban areas are purple. Newly burned farmland is dark red, while older burns are lighter red. Much of the farmland in this area is used to grow sugar cane. Farmers burn the crop before harvest to remove leaves from the canes. Because burned land looks different in this kind of false-color image, it is possible to see how extensively farmers rely on fire in this region.

This false-color view also reveals how water flows through the Northern Everglades. Green islands punctuate the wetlands, which are black and blue. These are tree islands that are hard to distinguish in natural color. Their orientation aligns with the flow of the water, revealing a flow direction that isn't obvious in the natural-color image. It is also easier to see the extent of the wetlands against surrounding land, because water is dark in this view, and plant-covered land is bright green.

The third image shows the scene in green, red and NIR light. Plants are dark red, because they reflect infrared light strongly, and the infrared band is assigned to be red. Plants that are growing quickly reflect more infrared, so they are brighter red. That means this type of false-color image can help us see how well plants are growing and how densely vegetated an area is. Water is black and blue, and urban areas are silver.
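The article does not name a specific formula. However, a widely used way to turn the contrast between strong NIR reflection and red absorption into a single number is the normalized difference vegetation index (NDVI): (NIR − red) / (NIR + red). Higher values generally indicate denser, more vigorous vegetation. A minimal sketch follows; the reflectance values are made up for illustration and are not measurements.

```python
import numpy as np

def ndvi(nir: np.ndarray, red: np.ndarray) -> np.ndarray:
    """Normalized difference vegetation index, (NIR - red) / (NIR + red).

    Pixels where NIR + red == 0 are returned as NaN instead of dividing by zero.
    """
    nir = np.asarray(nir, dtype=np.float64)
    red = np.asarray(red, dtype=np.float64)
    total = nir + red
    result = np.full(total.shape, np.nan)
    np.divide(nir - red, total, out=result, where=total != 0)
    return result

# Made-up reflectances for three hypothetical pixels: dense plants, sparse plants, bare soil.
nir = np.array([0.50, 0.30, 0.25])
red = np.array([0.05, 0.10, 0.20])
print(ndvi(nir, red).round(2))
```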

Observing in Visible Light

Data visualizers and remote sensing scientists make true- or false-color images to show the features in which they're most interested, and they select the wavelength bands most likely to highlight those features. Blue light (450 to 490 nanometers) is among the few wavelengths that water reflects; the rest are absorbed. Hence, blue bands are useful for seeing water surface features and for spotting the floor of shallow water bodies.

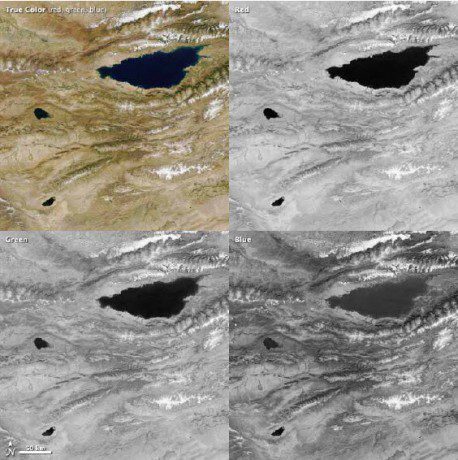

Combining red, green and blue bands results in a true-color image, such as this view of Lake Issyk Kul, Kyrgyzstan.

You can see that water reflects some blue light in the image of Lake Issyk Kul, Kyrgyzstan, at right. Water is lighter in the blue band than it is in either the red or green bands, though the lake is too deep for shallow features to be visible. Human-made features such as cities and roads also show up well in blue light. It is also the wavelength most scattered by particles and gas molecules in the atmosphere, which is why the sky is blue.
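Scattering by gas molecules, which are far smaller than a wavelength of light, follows the Rayleigh approximation: its strength goes as 1/wavelength⁴. The sketch below applies that ratio to the band edges quoted in this article. It assumes pure Rayleigh scattering; larger particles such as dust or smoke scatter less selectively.

```python
def rayleigh_relative_scattering(wavelength_nm: float, reference_nm: float) -> float:
    """How many times more strongly light at wavelength_nm is scattered than light
    at reference_nm, under the Rayleigh approximation (scattering ~ 1 / wavelength**4)."""
    return (reference_nm / wavelength_nm) ** 4

# Blue at 450 nm (lower edge of the blue band above) versus red at 700 nm.
ratio = rayleigh_relative_scattering(450, 700)
print(f"450 nm light is scattered about {ratio:.1f}x more than 700 nm light")
```

The fourth-power dependence is why a modest difference in wavelength produces a large difference in scattering: blue light at 450 nanometers is scattered nearly six times as strongly as red light at 700 nanometers.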

Green light (490 to 580 nanometers) is useful for monitoring phytoplankton in the ocean and plants on land. The chlorophyll in these organisms absorbs red and blue light but reflects green light. Sediment in water also reflects green light, so a muddy or sandy body of water will look brighter because it is reflecting both blue and green light.

Red light (620 to 780 nanometers) can help distinguish minerals and soils that contain a high concentration of iron or iron oxides, making it valuable for studying geology. Because chlorophyll absorbs red light, this band commonly is used to monitor the growth and health of trees, grasses, shrubs and crops. Also, red light can help distinguish between different types of plants on a broad scale.

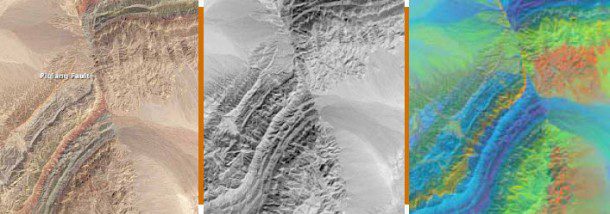

The Operational Land Imager on the Landsat 8 satellite captured the view at left of the Piqiang Fault in northwestern China. The colors reflect rocks that formed at different times and in different environments. Variations in mineral content, vegetation, and water cause patterns of light and dark in the NIR view in the middle. At right, comparing the differences among three SWIR bands highlights the mineral geology surrounding the fault.

NIR, red and green light were used to create the false-color image of Algeria at left. Red, plant-covered land dominates the scene. The SWIR, NIR and green light version of the Algeria scene at right highlights the presence of water and wet soil in an otherwise dry landscape.

Observing in Infrared

NIR light includes wavelengths between 700 and 1,100 nanometers. Water absorbs NIR, so it is useful for discerning land-water boundaries that aren't obvious in visible light. The images above show a natural-color, NIR and SWIR view of China's Piqiang Fault. Streambeds and the wetland in the upper left corner are darker than the surrounding arid landscape because of their water content. Plants, on the other hand, reflect NIR light strongly, and healthy plants reflect more than stressed plants. In addition, NIR light can penetrate haze, so including this band can help discern the details in a smoky or hazy scene.

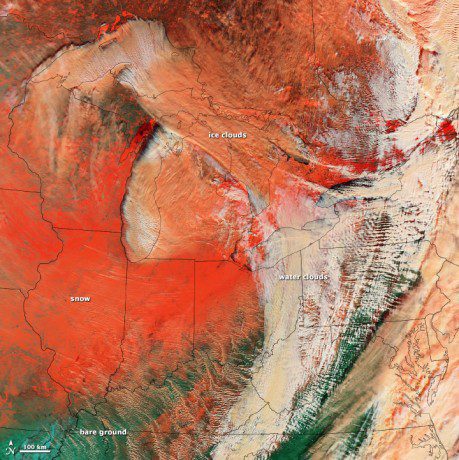

SWIR light includes wavelengths between 1,100 and 3,000 nanometers. Water absorbs SWIR light in three regions, near 1,400, 1,900 and 2,400 nanometers. The more water there is, even in soil, the darker the image will appear at these wavelengths. This means SWIR measurements can help scientists estimate how much water is present in plants and soil. SWIR bands are also useful for distinguishing between cloud types (water clouds vs. ice clouds) and between clouds, snow and ice, all of which appear white in visible light.

Newly burned land reflects strongly in SWIR bands, making them valuable for mapping fire damage. Active fires, lava flows and other extremely hot features glow in the SWIR part of the spectrum. In the Piqiang Fault images above, different types of sandstone and limestone make up the mountains around the fault. Each rock type reflects SWIR light differently, making it possible to map out geology by comparing reflected SWIR light. Enhancing the subtle differences among the three bands of reflected SWIR light gives each mineral a distinctive, bright color.
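The article doesn't say how the subtle SWIR differences were enhanced. One standard technique for exaggerating differences among highly correlated bands is a decorrelation stretch. It removes the correlation between bands while keeping each band's mean and spread, so that small band-to-band contrasts become large color differences. A minimal sketch follows; the synthetic "SWIR" bands are hypothetical, and this is not necessarily the processing used for the Piqiang Fault image.

```python
import numpy as np

def decorrelation_stretch(image: np.ndarray) -> np.ndarray:
    """Decorrelate the bands of a (rows, cols, bands) image.

    Each output band keeps the original band's mean and standard deviation,
    but the bands become uncorrelated, exaggerating subtle differences.
    """
    rows, cols, nbands = image.shape
    pixels = image.reshape(-1, nbands).astype(np.float64)
    mean = pixels.mean(axis=0)
    std = pixels.std(axis=0, ddof=1)
    centered = pixels - mean
    cov = np.cov(centered, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)       # cov = V diag(eigvals) V^T
    eigvals = np.maximum(eigvals, 1e-12)         # guard against degenerate bands
    whiten = eigvecs @ np.diag(1.0 / np.sqrt(eigvals)) @ eigvecs.T
    stretched = centered @ whiten @ np.diag(std) + mean  # restore each band's spread and mean
    return stretched.reshape(rows, cols, nbands)

# Hypothetical, strongly correlated stand-ins for three SWIR bands.
rng = np.random.default_rng(1)
base = rng.normal(size=(50, 50))
swir = np.dstack([base + 0.05 * rng.normal(size=(50, 50)) for _ in range(3)])
enhanced = decorrelation_stretch(swir)
```

The output still needs a display stretch (for example, clipping to percentiles and scaling to 0 to 255) before the three bands are shown as red, green and blue.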

Midwave-infrared (MIR) light ranges from 3,000 to 5,000 nanometers and is used most often to study emitted thermal radiation at night. MIR energy also is useful in measuring sea-surface temperature, clouds and fires.

Infrared light, specifically between 6,000 and 7,000 nanometers, is critical for observing water vapor in the atmosphere. Though water vapor makes up just 1 to 4 percent of the atmosphere, it is an important greenhouse gas. It is also the basis for clouds and rainfall. Water vapor absorbs and re-emits energy in this range, so infrared satellite observations can be used to track water vapor. Such observations are integral to weather monitoring and forecasting.

Thermal- or longwave-infrared light includes wavelengths between 8,000 and 15,000 nanometers. Most of the energy in this part of the spectrum is emitted (not reflected) by Earth as heat, so it can be observed both day and night. Thermal-infrared radiation can be used to gauge water and land surface temperatures; this makes it particularly useful for geothermal mapping and detection of heat sources like active fires, gas flares and power plants. Scientists also use thermal-infrared light to monitor crops. Actively growing plants cool the air above them by releasing water through evapotranspiration, so thermal-infrared light helps scientists assess how much water the plants use.
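Gauging temperature from thermal-infrared measurements usually starts by inverting Planck's law to get a brightness temperature: the temperature a perfect emitter (a blackbody) would need to produce the measured radiance. A minimal sketch follows. It assumes an emissivity of 1 and no atmospheric effects, whereas operational land- and sea-surface temperature products correct for both. The 10,900-nanometer wavelength is an illustrative choice within the thermal range, and the function names are hypothetical.

```python
import math

H = 6.62607015e-34       # Planck constant, J*s
C = 299_792_458.0        # speed of light, m/s
K_B = 1.380649e-23       # Boltzmann constant, J/K

def planck_radiance(wavelength_m: float, temperature_k: float) -> float:
    """Blackbody spectral radiance, in W / (m^2 * sr * m of wavelength)."""
    a = 2.0 * H * C**2 / wavelength_m**5
    return a / math.expm1(H * C / (wavelength_m * K_B * temperature_k))

def brightness_temperature(wavelength_m: float, radiance: float) -> float:
    """Invert Planck's law: the blackbody temperature (K) that emits `radiance`."""
    a = 2.0 * H * C**2 / wavelength_m**5
    return H * C / (wavelength_m * K_B * math.log1p(a / radiance))

wavelength = 10.9e-6  # hypothetical band center, 10,900 nm
radiance = planck_radiance(wavelength, 300.0)
print(f"{brightness_temperature(wavelength, radiance):.2f} K")
```

Radiance is often reported per micrometer of wavelength rather than per meter. To use such a value here, multiply it by 1e6 first.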

How to Interpret Common False-Color Images

Though there are many possible combinations of wavelength bands, NASA's Earth Observatory typically selects one of four combinations based on the event or feature to be illustrated. For example, floods are best viewed in SWIR, NIR and green light because muddy water blends with brown land in a natural-color image. SWIR light highlights the differences among clouds, ice and snow, all of which are white in visible light.

The site's four most common false-color band combinations are:

1. NIR (red), green (blue) and red (green), which is a traditional band combination used to see changes in plant health.

2. SWIR (red), NIR (green) and green (blue), which is a combination often used to show floods or newly burned land.

3. Blue (red) and two different SWIR bands (green and blue), which is a combination used to differentiate among snow, ice and clouds.

4. Thermal infrared, which usually is shown in gray tones to illustrate temperature.
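The list above can be written down as a small lookup table that records which band drives each display channel, plus a check for whether a combination counts as false color. In this sketch the band center wavelengths are illustrative values picked inside the ranges quoted in this article. Choosing "swir2" for combination 2, and the order of the two SWIR bands in combination 3, are assumptions, since the article doesn't specify them.

```python
# Illustrative band centers in nanometers, chosen inside the ranges quoted in this
# article; real instruments each have their own band edges.
BAND_CENTER_NM = {
    "blue": 470,
    "green": 535,
    "red": 650,
    "nir": 860,
    "swir1": 1_610,
    "swir2": 2_200,
}

# Each entry: (band shown as red, band shown as green, band shown as blue).
COMBINATIONS = {
    "natural": ("red", "green", "blue"),
    "nir_red_green": ("nir", "red", "green"),      # 1. plant health
    "swir_nir_green": ("swir2", "nir", "green"),   # 2. floods, burned land (SWIR choice assumed)
    "blue_swir_swir": ("blue", "swir1", "swir2"),  # 3. snow, ice and clouds (order assumed)
}
# 4. Thermal infrared is a single band shown in gray tones, so it has no RGB entry.

VISIBLE_NM = (400, 700)

def is_false_color(bands: tuple[str, ...]) -> bool:
    """True if any band in the combination lies outside visible light."""
    lo, hi = VISIBLE_NM
    return any(not lo <= BAND_CENTER_NM[b] <= hi for b in bands)

for name, (r, g, b) in COMBINATIONS.items():
    kind = "false color" if is_false_color((r, g, b)) else "natural color"
    print(f"{name}: red={r}, green={g}, blue={b} -> {kind}")
```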

NIR, Red and Green

One of the site's most frequently published combinations uses NIR light as red, green light as blue and red light as green. In this case, plants reflect NIR and green light and absorb red. Because they reflect more NIR than green, plant-covered land appears deep red. The signal from plants is so strong that red dominates the left false-color view of Algeria above. Denser plant growth is darker red. This band combination is valuable for gauging plant health.

Cities and exposed ground are gray or tan, and clear water is black. In the Algeria image, the water is muddy, and the sediment reflects light. This makes the water look blue. Images from the Advanced Spaceborne Thermal Emission and Reflection Radiometer (ASTER) and from the early Landsats often are shown in this band combination because that's what the instruments measured; they lacked the blue band needed for a natural-color image.

SWIR, NIR and Green

The most common false-color band combination on the Earth Observatory website uses SWIR (shown as red), NIR (green) and the green visible band (shown as blue). Water absorbs all three wavelengths, so it is black in this band combination.

In the right false-color image of Algeria above, however, water is blue because it is full of sediment. Sediment reflects visible light, which is assigned to look blue in this band combination, so sediment-laden water and saturated soil appear blue. Because water and wet soil stand out in this band combination, it is valuable for monitoring floods. Clouds, snow and ice are bright blue, because ice reflects visible light and absorbs infrared. This helps distinguish water from snow and ice; it also distinguishes clouds made up mostly of liquid water from those made up mostly of ice crystals.

Newly burned land reflects SWIR light and appears red in this combination. Hot areas, such as lava flows or fires, are also bright red or orange. Exposed, bare earth generally reflects SWIR light and tends to have a red or pink tone. Urban areas are usually silver or purple, depending on the building material and how dense the area is.

Because plants strongly reflect NIR light, vegetated areas are bright green. The signal is so strong that green often dominates the scene. Even the sparse vegetation in Algeria's desert landscape stands out as bright green spots in the image.

Blue, SWIR

Occasionally, Earth Observatory will publish a band combination that assigns blue light to be red and two different SWIR bands to green and blue. As shown in the Great Lakes image below, this band combination is especially valuable in distinguishing snow, ice and clouds. Ice reflects more blue light than snow or ice clouds. Ice on the ground will be bright red in this false color, while snow is orange, and clouds range from white to dark peach.

A combination of blue and SWIR light contrasts clouds, snow and ice in a large winter storm over the Great Lakes in January 2014.

Thermal Infrared

Earth Observatory also uses thermal infrared measurements to show land temperatures, fire areas or volcanic flows, but these are published as grayscale images most of the time. Occasionally, the thermal features of interest will be layered on top of a true-color or grayscale image, particularly in the case of a fire or volcano, as shown in the image above.

Experiment with Different Band Combinations

You can explore the way different band combinations highlight different features by using a browse tool called Worldview (https://earthdata.nasa.gov/labs/worldview), which displays data from many different imagers. Click on add layers, and then select one of the alternate band combinations (1-2-1, 3-6-7 or 7-2-1).

You can also explore false-color imagery with Landsat. A few examples are shown in the Landsat 7 Compositor (http://compositor.gsfc.nasa.gov). In addition, you can make your own Landsat images and experiment with band combinations by using software like Adobe Photoshop or ImageJ. Download data for free from the U.S. Geological Survey as detailed at http://earthobservatory.nasa.gov/blogs/elegantfigures/2013/05/31/a-quick-guide-to-earth-explorer-for-landsat-8/, then follow the instructions for Photoshop (http://earthobservatory.nasa.gov/blogs/elegantfigures/2013/10/22/how-to-make-a-true-color-landsat-8-image/) or ImageJ (http://landsat.gsfc.nasa.gov/wp-content/uploads/2013/05/Make-Your-Own-Landsat-Image-Tutorial.pdf).

Acknowledgements

Thanks to the following science reviewers and/or content providers: Michael King, Vincent Salomonson, David Mayer, Patricia Pavon and Belen Franch.