The Top 10 Considerations for Buying and Using Synthetic Aperture Radar Imagery

By William V. Parker, principal, Global Engagement Solutions, and senior associate, PACE Government Services (http://pacegs-dc.com), Washington, D.C.

The last two decades have witnessed unprecedented growth in the satellite-based Earth observation industry. Although the market is still strongly biased toward electro-optically derived imagery, a rising tide of acceptance and usage of satellite-derived synthetic aperture radar (SAR) data has occurred during the last few years. This trend is the result of the increasing availability of commercial SAR satellite data; development of sophisticated processing and analysis tools; and industry-driven training initiatives to familiarize image analysts with SAR imagery, including its interpretation and utility.

Electro-Optical/SAR Comparisons

Intuitively, the color imagery derived from electro-optical systems provides the human eye with familiar representations of Earth's surface that are easy to interpret. Additionally, electro-optical imagery has been known to the user community since the 1970s, so a great deal of know-how is available.

Regardless, the user community is recognizing there's much more than meets the eye in black-and-white SAR data and imagery. The most obvious SAR advantage is the weather and daylight independence of radar systems, which ensures that the area of interest can be acquired as planned. This also enables consistent monitoring independent of lighting, weather or cloud-cover conditions.

This, however, is just one side of the coin. The real advantages of SAR unfold when the data are processed and analyzed appropriately to meet the mission. Many unique effects of SAR satellite data, such as radar shadow, can be exploited to extract information from the derived imagery that isn't detectable through visual interpretation alone.

For example, SAR imagery can be used to detect and even quantify the motion of objects on both land and sea. Thanks to the measurability of a SAR signal's intensity and phase, imagery analysts can determine elevation information and even subtle changes to surface conditions.

SAR Basics

Electro-optical systems are passive, which means they require the illumination of the sun for imaging. Radar, however, is an active remote sensing system, which means it provides its own energy source to illuminate the imaging area. A radar imaging system has three main functions: It transmits the microwave signal toward the scene, receives a portion of that transmitted energy as backscatter from the scene, and then observes the strength and time delay of the returned signal.
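The time-delay measurement described above can be turned into a distance. The following is a minimal sketch, assuming the signal travels at the speed of light in vacuum and that the delay covers the full trip to the scene and back; the function name and the 4-millisecond delay are illustrative and not taken from any particular system.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def slant_range_m(round_trip_delay_s: float) -> float:
    """Distance from antenna to scatterer for a given two-way echo delay."""
    # The pulse travels out and back, so only half the delay counts one way.
    return C * round_trip_delay_s / 2.0


# Hypothetical echo delay of 4 milliseconds (illustrative value only).
print(f"{slant_range_m(4e-3) / 1000:.1f} km")
```

The strength of the echo is recorded alongside this range value, and together they form the basis of each image pixel.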

The energy of the radar pulse is scattered in all directions at the Earth's surface, with some reflected back to the antenna. The surface's roughness (i.e., the irregularity of the terrain vertically and horizontally) determines the return signal's amplitude. Surfaces can be classified as smooth, slightly rough, moderately rough or very rough.

Generally, bright areas in a SAR image are strong reflectors, such as buildings in urban areas, while dark parts of the image represent surfaces that reflect little or no energy, such as water surfaces or oil film on an ocean. Depending on the wavelength of the radar signal, SAR can penetrate forest canopy and Earth surfaces, detecting dielectric features such as metal objects, water, freeze/thaw, salt content, iron oxides and clay in soils. To fully exploit the advantages of SAR data and imagery, key mission-specific collection characteristics and parameters must be understood. The following sections clarify these parameters and characteristics.

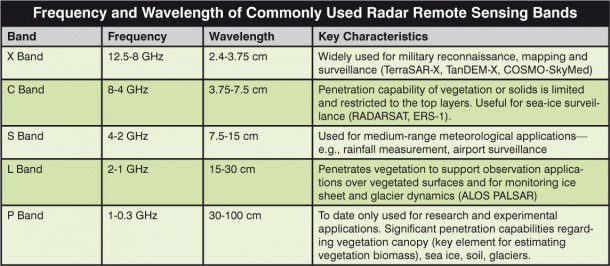

1. Wavelength

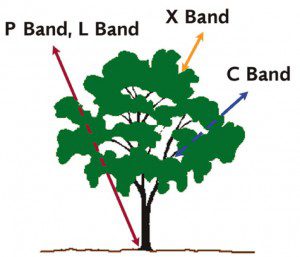

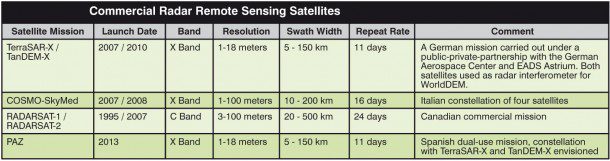

As detailed in the table below, radar remote sensing uses the microwave portion of the electromagnetic spectrum, from a frequency of 0.3 GHz to 300 GHz. Most radar satellites operate at wavelengths between 0.5 cm and 75 cm.
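Frequency and wavelength are two ways of stating the same quantity, linked by the speed of light. The sketch below converts one to the other; the X-band and L-band center frequencies used in the loop are typical illustrative values assumed here, not figures from this article.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def wavelength_cm(frequency_ghz: float) -> float:
    """Convert a radar frequency in GHz to a wavelength in centimeters."""
    return C / (frequency_ghz * 1e9) * 100.0


# Assumed, illustrative center frequencies for two common bands.
for band, freq_ghz in (("X", 9.65), ("L", 1.27)):
    print(f"{band}-band at {freq_ghz} GHz: {wavelength_cm(freq_ghz):.1f} cm")
```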

Shorter wavelengths (e.g., X-band imagery at 3 cm) are reflected from the top of the canopy, while longer wavelengths (e.g., L-band imagery at 24 cm) normally penetrate to the ground and are reflected from there. Using this characteristic of different wavelengths makes it possible to discern information about the canopy structure of a forested area from a multiwavelength image and thus estimate above-ground biomass.

Furthermore, the choice of wavelength needs to be matched to the size of the surface features to be distinguished. Small features are best recognized with X-band imagery (i.e., short wavelengths), while large features, such as geological structures, are more clearly delineated in L-band imagery.

2. Polarization

Transmitted and received radar signals propagate in a certain plane, known as the polarization. The propagation planes are usually horizontal (H) and vertical (V). Vertically polarized waves will interact with the vertical stalks of plant canopy, while horizontally polarized waves will penetrate through plant canopy. Thus, combining the differently polarized image channels into a red-green-blue (RGB) image results in a false-color image, which can differentiate ground cover such as vegetation classes.

The different combination options for the polarization will provide different image characteristics:

Single polarization. The radar system operates with the same polarization for transmitting and receiving the signal.

Cross polarization. A different polarization is used to transmit and receive the signal.

Dual polarization. The radar system operates with one polarization to transmit the signal and both polarizations simultaneously to receive the signal.

Quad polarization. H and V polarizations are used for alternate pulses to transmit the signal and with both simultaneously to receive the signal.

Multipolarized images are provided in the form of multiple layers, each corresponding to a different polarization channel. Each polarization channel is identified by two letters. The first letter denotes the transmit polarization, and the second refers to the receive polarization. Multipolarized SAR imagery allows users to measure the terrain's polarization properties and not simply the backscatter at a single polarization, thus providing improved classification information.
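The two-letter channel naming and the polarization options listed above can be sketched in a few lines. This is only an illustration of the naming convention; the function names and the "same"/"cross" labels are hypothetical and not part of any vendor's product format.

```python
def describe_channel(label: str) -> dict:
    """Split a two-letter channel label into transmit and receive parts."""
    label = label.upper()
    if len(label) != 2 or any(ch not in "HV" for ch in label):
        raise ValueError(f"not a polarization channel: {label!r}")
    transmit, receive = label
    kind = "same" if transmit == receive else "cross"
    return {"transmit": transmit, "receive": receive, "kind": kind}


def channels_for(transmit: str, receive: str) -> list:
    """List the channels produced by the given transmit/receive planes."""
    return [t + r for t in transmit for r in receive]


print(describe_channel("HV"))    # transmit H, receive V
print(channels_for("H", "HV"))   # dual polarization: one transmit, two receive
print(channels_for("HV", "HV"))  # quad polarization: all four channels
```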

3. Mode

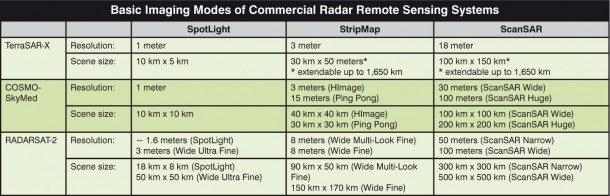

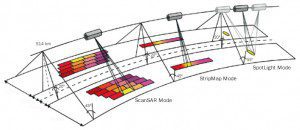

As detailed in the table below, the acquisition mode is directly linked with the resolution of the resulting image and the size of the scene area covered.

The highest resolution is achieved with a SpotLight image. For this the radar beam continuously illuminates one terrain patch while the satellite is moving along its flight path. This sophisticated imaging mode makes it possible to acquire data with up to 1-meter resolution but restricts the scene size.

An image in StripMap mode is acquired by illuminating the ground swath with a continuous sequence of pulses while the antenna beam is pointed to a fixed angle in elevation and azimuth. This results in an image strip with constant image quality in azimuth. StripMap is the most commonly used acquisition mode, as it provides a good trade-off between the size of the area covered and the resolution.

The ScanSAR mode overcomes the constraints of the narrow swath of the StripMap and is intended for use in applications requiring large area coverage such as monitoring applications. In this mode, electronic antenna elevation steering is used to acquire adjacent, slightly overlapping coverages with different incidence angles that are processed into one scene. For example, in the case of TerraSAR-X and RADARSAT-2, up to four single beams covering adjoining swaths are combined. Due to the switching between the beams, only bursts of SAR echoes are received, resulting in a reduced bandwidth and hence reduced azimuth resolution.

4. Incidence Angle

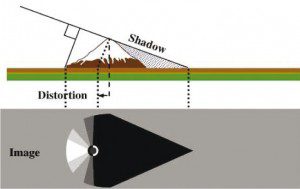

A slope facing away from the radar illumination at an angle steeper than the sensor depression angle produces radar shadows.

The incidence angle is the angle between the radar beam and the vertical (the line perpendicular to the ground). The interaction of microwaves with the ground is complex, and different reflections occur in different angular regions. Returns are normally strong at low incidence angles and decrease with increasing incidence angle.

Because of SAR's side-looking perspective, tall objects and relief structures are subject to displacements. There are three main radar effects that must be taken into account when using SAR data:

• Radar shadows are areas on the ground that aren't illuminated by the radar signal, thus no return signal is received, and these areas appear dark in the imagery. As the incidence angle of an image increases from near-range to far-range, shadowing becomes more prominent toward far-range. Shadowing in a radar image is an important key for terrain relief interpretation, as the height of an object can be derived from measuring the radar shadow. Thus, this apparently negative radar effect provides valuable information about a scene.

• Foreshortening describes the compressed appearance of features that are tilted toward the radar. For a given slope, foreshortening effects are reduced with increasing incidence angles. With this increase in incidence angle, however, the shadow effect increases. Thus, the selection of the incidence angle is always a trade-off between foreshortening and radar shadow in the acquisition.

• Layover occurs when the reflected signal from a feature's upper portion is received before the return from the feature's lower portion. In this case, the top of the feature will be displaced relative to its base. This effect is more prevalent for viewing geometries with smaller incidence angles.

These effects can be compensated for only through a trade-off among them: if you don't have one, you will have the others. They can, however, support image analysis.
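The three effects above follow from simple geometry, which can be sketched as a rough rule of thumb. The code below assumes flat-Earth geometry, a single planar slope and an incidence angle measured from the vertical; the function names are hypothetical, and real terrain requires a DEM-based simulation rather than this simplification.

```python
import math


def terrain_effect(slope_deg: float, incidence_deg: float) -> str:
    """Classify a planar slope under simplified flat-Earth geometry.

    slope_deg > 0: slope tilted toward the radar.
    slope_deg < 0: slope tilted away from the radar.
    """
    depression_deg = 90.0 - incidence_deg  # sensor depression angle
    if slope_deg > incidence_deg:
        return "layover"  # the top is closer to the sensor than the base
    if slope_deg > 0:
        return "foreshortening"
    if -slope_deg > depression_deg:
        return "shadow"  # back slope steeper than the depression angle
    return "none"


def height_from_shadow_m(shadow_ground_length_m: float,
                         incidence_deg: float) -> float:
    """Object height from its shadow length measured in ground range.

    Assumes the shadow falls on flat, level ground.
    """
    return shadow_ground_length_m / math.tan(math.radians(incidence_deg))


for slope in (50.0, 20.0, -20.0, -60.0):
    print(slope, terrain_effect(slope, incidence_deg=35.0))
```

Running the same slopes with a larger incidence angle shows the trade-off: layover cases disappear while more back slopes fall into shadow.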

5. Repeat Frequency

Applications such as interferometry, surface movement monitoring or change detection require the acquisition of data stacks that have identical acquisition parameters (orbit, incidence angle and polarization). Analyzing changes in the amplitude and the phase of the return signal at the various acquisition dates provides information to derive small changes on the imaged surface. Not all changes, such as vegetation changes, are relevant for analysis, but the time difference among the acquisitions is important for interferometric processing.
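To give a sense of how phase relates to small surface changes, the sketch below applies the commonly used repeat-pass relation in which one full phase cycle corresponds to half a wavelength of motion along the line of sight. It is a simplified illustration that ignores topographic, atmospheric and orbital contributions to the phase; the function name and the 3.1 cm wavelength are assumptions, and sign conventions vary among processors.

```python
import math


def los_displacement_cm(phase_change_rad: float, wavelength_cm: float) -> float:
    """Line-of-sight displacement implied by a phase change between passes."""
    # The signal travels the extra path twice (out and back), hence 4*pi.
    return wavelength_cm * phase_change_rad / (4.0 * math.pi)


# One full phase cycle at an assumed X-band wavelength of 3.1 cm.
print(f"{los_displacement_cm(2.0 * math.pi, 3.1):.2f} cm")
```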

Current commercial radar systems have 24- (RADARSAT-2), 16- (COSMO-SkyMed constellation of four satellites) and 11-day (TerraSAR-X and TanDEM-X) repeat cycles; i.e., they pass over the same point on the ground with the identical acquisition geometry in these time intervals.
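When planning a data stack, the repeat cycle fixes the dates on which an identical geometry is available again. A minimal planning sketch follows, using the repeat cycles quoted above; the start date, the dictionary name and the function name are hypothetical.

```python
from datetime import date, timedelta

# Repeat cycles in days, as quoted in the text above.
REPEAT_CYCLE_DAYS = {"RADARSAT-2": 24, "COSMO-SkyMed": 16, "TerraSAR-X": 11}


def stack_dates(first: date, mission: str, count: int) -> list:
    """Dates on which the same acquisition geometry recurs."""
    step = timedelta(days=REPEAT_CYCLE_DAYS[mission])
    return [first + i * step for i in range(count)]


# Hypothetical first acquisition on 1 January 2013.
for d in stack_dates(date(2013, 1, 1), "TerraSAR-X", 4):
    print(d.isoformat())
```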

In the case of TerraSAR-X and TanDEM-X, where the two satellites fly in close formation separated by only a few hundred meters, an interferometric data pair can be acquired without any time difference. One satellite sends the signal, and both satellites record the backscatter simultaneously. This unique constellation makes it possible to perform high-quality interferometry all over the world without any limitations. The result of this unique mission will be Astrium's WorldDEM, a worldwide homogeneous digital elevation model. The global dataset will be available in 2014.

6. Resolution

A radar sensor's resolution has two dimensions: range resolution and azimuth resolution. Range resolution is determined by built-in radar and processor constraints and depends on the length of the processed pulse, with shorter pulses resulting in higher resolution. Azimuth resolution is determined by the angular beam width of the terrain strip illuminated by the radar beam.

A SAR image's resolution is influenced by the following parameters: wavelength, bandwidth, pulse repetition frequency (PRF), acquisition mode and incidence angle. The system parameters (wavelength, PRF and bandwidth) are defined by the system acquiring the data. A shorter wavelength and a higher bandwidth result in a higher resolution.

Resolution also is influenced by the acquisition mode. ScanSAR mode results in low resolution, StripMap mode offers medium resolution, and SpotLight mode acquires images with the highest available resolution.

In addition, resolution is influenced by an acquisition's incidence angle. A shallower incidence angle (called far range, illuminating an area far away from the sensor) results in higher resolution. But the typical radar effects, such as layover and shadow, have to be considered when determining the right incidence angle to avoid restrictive impacts of these radar characteristics, particularly in areas with steep terrain.
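The influence of bandwidth and incidence angle on range resolution can be illustrated with the standard textbook relations: slant-range resolution is the speed of light divided by twice the bandwidth, and its projection onto flat ground divides by the sine of the incidence angle. The sketch below is a simplified illustration assuming level terrain; the 300 MHz bandwidth and the function names are hypothetical, not a product specification.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def slant_range_resolution_m(bandwidth_hz: float) -> float:
    """Resolution along the line of sight for a given signal bandwidth."""
    return C / (2.0 * bandwidth_hz)


def ground_range_resolution_m(bandwidth_hz: float, incidence_deg: float) -> float:
    """Slant-range resolution projected onto flat, level ground."""
    return slant_range_resolution_m(bandwidth_hz) / math.sin(
        math.radians(incidence_deg)
    )


# Assumed bandwidth of 300 MHz at a steep and a shallow incidence angle.
for angle in (25.0, 50.0):
    print(f"{angle:.0f} deg: {ground_range_resolution_m(300e6, angle):.2f} m")
```

The shallower (larger) incidence angle yields the finer ground-range resolution, which is the far-range effect described above.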

7. Applications

The intended application, from feature extraction and change detection to ocean surveillance and elevation modeling, strongly influences the choices to be made about radar imagery acquisition. The wavelength, incidence angle, acquisition mode and polarization all have to be matched to the application.

8. Processing Level

As with most remote sensing data, different processing levels are available for SAR images. The right processing level has to be selected, depending on the application. The following basic processing options are available for SAR data:

Slant range data. These data are delivered in a complex data format in the sensor's geometry and include information about the received backscatter at the sensor and information about the phase of the traveled signal. Some processing, normally available in commercial products, has to be performed before viewing these datasets. Typically slant range data are used for scientific applications such as SAR interferometry.

Ground range data. After the sensor data are transformed to Earth's surface, a ground range product is produced as an image file. This product isn't georeferenced, but it can be input for orthorectification.

Geocoded and orthorectified data. Georeferenced SAR data also are available. For orthorectification, satellite orbit and height information from the ground are processed with the data. There are two options available as standard products: geocoding and orthorectification. Geocoding uses an average height of the acquired data. Orthorectification uses a DEM. An orthorectified dataset should be selected if an application requires high geolocation accuracy.

9. Location Accuracy

A SAR dataset's location accuracy is a result of the following parameters: orbit information precision, incidence angle and the accuracy of the input DEM for the orthorectification.

The orbit information's precision is the basis for a highly accurate, automatic orthorectification. If the orbit information isn't precise, the product's location accuracy can be optimized manually using ground control points (GCPs), but the result depends on the number and quality of these points.

The best available orbit information should be used to retrieve the highest location accuracy. In the case of the TerraSAR-X system, the geolocational accuracy is higher than the system resolution, thus the system can be used to derive GCPs without any need for ground truthing.

The incidence angle and the input DEM's accuracy also influence location accuracy. The higher the DEM error and the steeper the incidence angle, the higher the error in location accuracy. If high location accuracy is required (e.g., for mapping purposes), a precise DEM and a shallow incidence angle should be selected if available.
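The combined effect of DEM error and incidence angle can be approximated with simple side-looking geometry: a height error shifts a pixel in ground range by roughly the height error divided by the tangent of the incidence angle. The sketch below assumes flat-Earth geometry and an incidence angle measured from the vertical; the function name and the sample values are hypothetical.

```python
import math


def ground_shift_m(dem_error_m: float, incidence_deg: float) -> float:
    """Approximate ground-range shift caused by a height error in the DEM."""
    return dem_error_m / math.tan(math.radians(incidence_deg))


# Hypothetical 10 m DEM error at a steep and a shallow incidence angle.
for angle in (25.0, 45.0):
    print(f"{angle:.0f} deg: {ground_shift_m(10.0, angle):.1f} m")
```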

10. Pass Direction

Images can be recorded in either ascending or descending direction. It's important to consider the pass direction based on the surface characteristics and ground features present in the imaged area. Images acquired over the same area from both ascending and descending orbits can be merged to achieve the optimum look direction for features on the ground. Such image merges can be particularly useful in mountainous terrain, as typical radar artifacts, such as shadow or layover, can be overcome.

Editor's Note: A similar article on electro-optical satellite imagery purchasing criteria appeared in Earth Imaging Journal's May/June 2012 issue and is available online at http://eijournal.sensorsandsystems.com/2012/buying-optical-satellite-imagery.