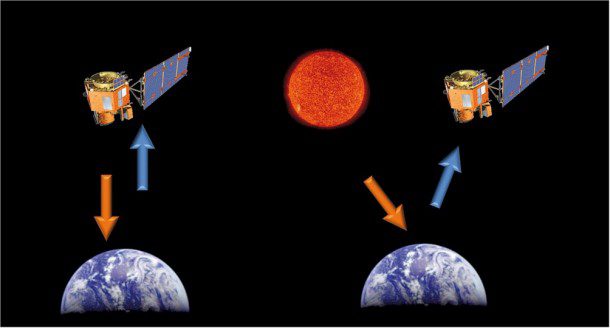

An active sensor (above left) is a radar instrument used to measure signals transmitted by the sensor that were reflected, refracted or scattered by Earth's surface or atmosphere. A passive sensor (above right) is a microwave instrument designed to receive and measure natural emissions produced by constituents of Earth's surface and atmosphere.

By Simon Hennig, application development manager, Astrium Services, GEO-Information (www.astrium-geo.com), Friedrichshafen, Germany.

The growing number of commercial Earth observation satellite platforms can be divided into two classes: active sensors and passive sensors. It's important to understand the difference between these systems to select the most appropriate type of imagery for your applications.

Passive sensors, often referred to as electro-optical or simply optical, record sunlight reflected by objects on the ground and/or the infrared radiation those objects emit. Examples of such optical satellite systems include Landsat, SPOT, Pléiades, EROS, GeoEye and WorldView.

Active sensors, commonly referred to as synthetic aperture radar (SAR) or simply radar, provide their own energy to illuminate an area of interest and measure the reflected signal. Examples of active sensors are SAR satellites such as TerraSAR-X, TanDEM-X, Cosmo-SkyMed and RADARSAT.

Today's active and passive imaging systems can deliver images down to sub-meter resolution, and next-generation systems are expected to further advance image resolution and quality. Let's explore both systems in greater detail.

Characteristics of Passive Sensors

Optical satellite sensors require the sun's illumination for imaging. Depending on the system, passive sensors typically record electromagnetic waves in the range of visible (~430–720 nm) and near-infrared (~750–950 nm) light. Some systems, such as SPOT 5, also are designed to acquire images in the middle-infrared wavelength (1,580–1,750 nm).

Because sensors feature specific wavelength sensitivity, acquisitions with an optical instrument are separated into several images, depending on the mode. For multispectral sensors, such as SPOT 6 and 7 and Pléiades, it is common to separate image data into the three main spectral ranges: blue (~430–550 nm), green (~500–620 nm) and red (~590–710 nm), as well as an additional sensor for near infrared (~750–940 nm). With the acquired dataset, the different wavelengths can be analyzed and used as input for further processing or easier feature classification.
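The band limits quoted above can be written down as a small lookup table. The following is a minimal sketch in Python, not any operator's software; the names `BANDS_NM` and `bands_for_wavelength` are hypothetical, and the limits are the approximate values given in this article rather than the specification of a particular sensor.

```python
# Approximate band limits in nanometers, copied from the ranges quoted
# in the article (illustrative, not a sensor specification).
BANDS_NM = {
    "blue": (430, 550),
    "green": (500, 620),
    "red": (590, 710),
    "near_infrared": (750, 940),
}


def bands_for_wavelength(wavelength_nm):
    """Return the names of all bands whose range contains the wavelength."""
    return [
        name
        for name, (low, high) in BANDS_NM.items()
        if low <= wavelength_nm <= high
    ]


print(bands_for_wavelength(520))  # ['blue', 'green'] (the two ranges overlap)
print(bands_for_wavelength(730))  # [] (gap between red and near infrared)
```

Note that the quoted ranges overlap, so a single wavelength can contribute to more than one band.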

For hyperspectral systems, such as EnMAP, sensors cover smaller spectral ranges, and the spectral range is extended into the infrared range to >2,000 nm. Acquisitions in panchromatic mode record the area of interest with one sensor covering the entire spectral range of the visible light and the near infrared (~470–830 nm), without separation among different wavelengths. The panchromatic mode's resolution is higher than that of the multispectral or hyperspectral modes.

Because optical imaging is only possible with sun illumination, changes in the seasonal cycle have to be considered when planning acquisitions. Furthermore, cloud coverage can hamper image collection, as the sunlight is reflected by the clouds and recorded by the sensors. These two parameters limit Earth observation with passive sensors, particularly in polar areas with seasonal changes in sun illumination and the equatorial belt with persistent cloud coverage.

Optical systems can't bypass the cloud constraint. However, recent innovations with some satellite systems enable mission operators to more frequently update tasking plans to accommodate cloud-coverage forecasts. Compared with former optical systems, the resulting efficiency of cloud-free data collection is highly improved. For example, the ratio of images collected with less than 10 percent of clouds typically improves from less than 30 percent to about 60 percent.
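To get a feel for what that improvement means in practice, the success rates can be turned into an expected number of collection attempts. The sketch below assumes that each attempt succeeds independently with the same probability, which is a simplification because real cloud cover is correlated over time. The function name `expected_attempts` is hypothetical, and 30 percent is used as an upper bound for the older systems.

```python
def expected_attempts(success_rate):
    """Mean number of independent attempts until the first usable image."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1.0 / success_rate


for rate in (0.30, 0.60):
    print(f"{rate:.0%} usable -> about {expected_attempts(rate):.1f} attempts")
# 30% usable -> about 3.3 attempts
# 60% usable -> about 1.7 attempts
```

Under this assumption, doubling the share of usable images roughly halves the number of attempts needed for one cloud-free collection.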

In the past, optical satellite imaging was performed at nadir, meaning the sensor looked straight down from the platform to Earth, like the first Landsat satellites. SPOT 1's launch was a revolution in that respect. Equipped with a mirror technology that enabled collections left or right of the track, SPOT 1 was the first satellite able to acquire imagery of an area of interest at a specific time.

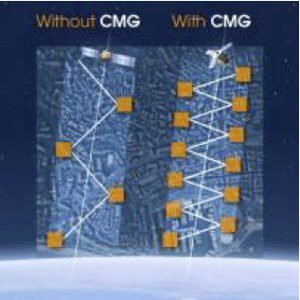

Now many optical satellites are maneuverable, with varying speed levels to move from one target to another. Standard satellites are equipped with momentum wheels, while the most agile satellites are equipped with control moment gyroscopes (CMGs) that enable a faster slew between two consecutive targets. This kind of performance increases the number of images that can be collected during the same pass, as collection opportunities are more numerous, conflicts among contiguous requests are minimized, and several targets can be acquired on the same pass at the same latitude.

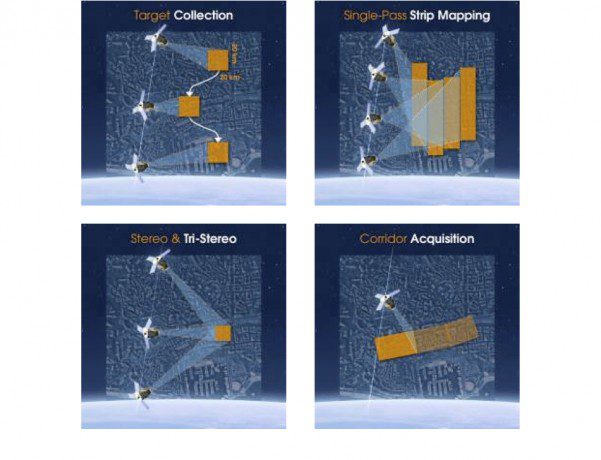

Advanced satellite agility offers operators different single-pass collection scenarios to meet users' needs.

Advanced agility also enables different collection scenarios to match user needs:

• Target collection to image multiple targets

• Strip mapping to create large mosaics in a single pass

• Stereo and tristereo acquisition for accurate 3-D applications

• Corridor acquisition for linear features such as coastlines, borders, pipelines, roads, etc.

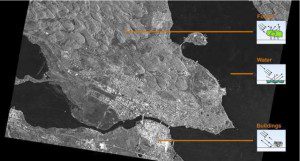

As illustrated by this Pléiades satellite image of Rotterdam, The Netherlands, optical data are easy to use for visual interpretation because the images represent Earth's surface the same way as the human eye views Earth.

Applying the same principle as photo cameras, optical satellites feature a compromise between coverage and resolution (the greater the zoom, the smaller the field of view). When the satellite is equipped with multispectral bands (color), images in panchromatic mode offer four times the resolution of the multispectral mode. Current systems can deliver panchromatic acquisitions with submeter resolution and multispectral acquisitions with approximately 2-meter resolution.

A technique called pan-sharpening combines the visual information of the multispectral data with the spatial information of the panchromatic data, resulting in a higher-resolution color product equal to the panchromatic resolution. This image-fusion process combines multiple images into composite products to generate more information than the individual input images.
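The article doesn't say which pan-sharpening algorithm is used. As an illustration only, the sketch below applies one simple and widely described approach, a Brovey-style ratio method: each color band is enlarged to the panchromatic grid and then scaled by the ratio of the panchromatic value to the mean of the color bands. The function names, the nearest-neighbor upsampling and the toy pixel values are assumptions made for this example. Production software typically uses more careful resampling and radiometric handling.

```python
def upsample_nearest(band, factor):
    """Enlarge a 2-D list by repeating every pixel `factor` times per axis."""
    return [
        [value for value in row for _ in range(factor)]
        for row in band
        for _ in range(factor)
    ]


def brovey_pansharpen(red, green, blue, pan, factor):
    """Scale each upsampled color band by pan / mean(color bands)."""
    bands = [upsample_nearest(b, factor) for b in (red, green, blue)]
    sharpened = [[[0.0] * len(pan[0]) for _ in pan] for _ in bands]
    for i, pan_row in enumerate(pan):
        for j, pan_value in enumerate(pan_row):
            intensity = sum(b[i][j] for b in bands) / len(bands)
            ratio = pan_value / intensity if intensity > 0 else 0.0
            for k, b in enumerate(bands):
                sharpened[k][i][j] = b[i][j] * ratio
    return sharpened  # [red, green, blue] on the panchromatic grid


# Toy data: one coarse color pixel covering a 2 x 2 block of pan pixels.
# (The article quotes a factor of 4; 2 keeps the example short.)
pan = [[50.0, 70.0], [60.0, 60.0]]
red_hr, green_hr, blue_hr = brovey_pansharpen(
    [[60.0]], [[90.0]], [[30.0]], pan, factor=2
)
print([[round(v, 1) for v in row] for row in green_hr])
```

The color proportions of the coarse pixel are kept, while the brightness of each fine pixel follows the panchromatic image.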

Optical data are easy to use for visual interpretation, as the images represent Earth's surface the same way as the human eye views Earth. This type of imagery has been available since the 1970s, and well-established processing algorithms are available for automated feature extraction and classification.

High-resolution imaging allows users to identify small objects and infrastructure features from space. Moreover, the reflection from different spectral bands can be used to classify land cover types and varieties in vegetation. Even vegetation's vitality can be recognized using near-infrared and infrared reflections.
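One widely used way to express vegetation vitality from these bands is the normalized difference vegetation index (NDVI), which compares near-infrared and red reflectance. The article doesn't name a specific index, so NDVI is introduced here as an illustrative assumption, and the reflectance values in the example are invented.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel, in [-1, 1]."""
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; treated as neutral here
    return (nir - red) / total


# Invented reflectance values for illustration only.
print(round(ndvi(0.50, 0.08), 2))  # 0.72, strong near-infrared response
print(round(ndvi(0.25, 0.20), 2))  # 0.11, weak contrast between the bands
```

Healthy vegetation reflects strongly in the near infrared and absorbs red light, so higher values generally indicate more vigorous plant cover.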

Characteristics of Active Sensors

Active Earth observation satellites use electromagnetic waves in the frequency range of radar waves. Because the technique requires a large antenna to acquire high-resolution data from space, satellites operate synthetic aperture radar (SAR) systems with a side-looking geometry.

Currently there are three commercial SAR missions in space: Germany's TerraSAR-X and TanDEM-X (X band with ~3.5 cm wavelength), Italy's COSMO-SkyMed (X band with ~3.5 cm wavelength) and Canada's RADARSAT-2 (C band with ~6 cm wavelength).

A SAR antenna measures the backscatter and traveling time of the transmitted waves reflected by objects on the ground, varying from forest to water to buildings.

The most obvious advantage of SAR systems is their weather and daylight independence, as the active transmission of electromagnetic waves and the measurement of the reflected signal ensure a guaranteed acquisition of an area of interest. The SAR antenna measures the backscatter and traveling time of the transmitted waves reflected by objects on the ground. As a result, various parameters on the object side determine the imaging:

• Geometry: size and shape, orientation toward the sensor, roughness

• Dielectrics: water content, aggregate state, salt content, mineralogy

• Motion: in azimuth direction, in range direction
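The travel-time measurement described before this list translates directly into distance: the signal covers the sensor-to-target path twice, so the slant range is half the round-trip time multiplied by the speed of light. The sketch below illustrates that arithmetic. The function name and the 4-millisecond delay are hypothetical, and atmospheric delay is ignored.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def slant_range_m(round_trip_time_s):
    """Distance from antenna to target for a given two-way travel time."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# Hypothetical echo delay of 4 milliseconds.
print(f"{slant_range_m(0.004) / 1000:.1f} km")  # 599.6 km
```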

Furthermore, the characteristics of the acquired SAR image are influenced by characteristics of the SAR system used. Some of these features are determined by the specifications of the system while others can be influenced by the acquisition parameters. The parameters comprise repeat frequency, pulse repetition frequency, bandwidth, polarization, incidence angle, imaging mode and orbit direction.

Additionally, the resulting image is influenced by data processing. The right processing level depends on the intended application, and sometimes trade-offs have to be made between noise reduction and resolution loss.

Due to a SAR system's side-looking geometry, there are three main special effects that have to be considered when using SAR data:

• Layover occurs when the reflected signal from a feature's upper portion is received before the return from the feature's lower portion. Thus, the top of the feature, such as a house or a mountain, is displaced toward the sensor relative to its base in the resulting image.

• Foreshortening occurs when a slope facing the sensor appears shortened in the image because the traveling times of the signals from the top and the bottom of the slope are almost equal.

• Radar shadows are areas on the ground that aren't illuminated by the radar signal because they're hidden behind other objects like houses or mountains. Such areas don't have backscatter values in the resulting image and thus appear black.

Because of these effects, some objects can look different in SAR images acquired with a different incidence angle.
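A simplified geometric model shows why the incidence angle matters. The sketch below assumes flat terrain, a vertical feature, a distant sensor (parallel wavefronts) and an incidence angle measured from the vertical. Under those assumptions the top of a feature of height h is shifted toward the sensor by h / tan(θ), and its shadow extends h · tan(θ) behind it. The function names and the 30-meter building are hypothetical.

```python
import math


def layover_displacement_m(height_m, incidence_deg):
    """Ground-range shift of a feature's top toward the sensor."""
    return height_m / math.tan(math.radians(incidence_deg))


def shadow_length_m(height_m, incidence_deg):
    """Ground-range length of the unlit area behind the feature."""
    return height_m * math.tan(math.radians(incidence_deg))


# Hypothetical 30 m building seen at three incidence angles.
for angle in (25, 45, 55):
    shift = layover_displacement_m(30.0, angle)
    shadow = shadow_length_m(30.0, angle)
    print(f"{angle} deg: layover {shift:.1f} m, shadow {shadow:.1f} m")
```

In this model, steep incidence angles produce strong layover and short shadows, while shallow angles do the opposite.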

SAR data's resolution is influenced by the acquisition mode, wavelength, bandwidth and incidence angle. Longer illumination of an object and greater distance from the antenna result in higher resolution.

The highest resolution can be achieved with SpotLight mode, using a shallow incidence angle and a high bandwidth. Thus, in the case of the TerraSAR-X mission, the new Staring SpotLight Mode can achieve resolution down to 0.25 meters. Images in ScanSAR mode offer a much coarser resolution (> 10 meters) but cover a larger ground area. The StripMap mode offers a good trade-off between resolution (3 – 10 meters) and size of the area covered, so it is a commonly used SAR imaging mode.

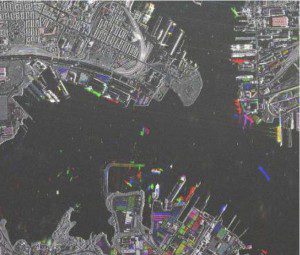

Amplitude change detection was used to reveal changes and activities in the Baltimore Harbor area over time.

Because a SAR system is an active system, it is possible to directly compare acquisitions with the same imaging parameters (mode, incidence angle, polarization and processing level). The backscatter doesn't change if there aren't any changes in the imaged area. Using an amplitude change detection process allows users to identify and analyze changed areas.
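A common way to implement amplitude change detection is to compare two co-registered images pixel by pixel using the ratio of their amplitudes in decibels, then flag pixels that exceed a threshold. The sketch below illustrates that idea and is not necessarily the process used for the examples in this article. The function name, the 3 dB threshold and the sample values are assumptions, and real workflows usually apply speckle filtering first.

```python
import math


def change_mask(before, after, threshold_db=3.0, eps=1e-6):
    """Flag pixels whose amplitude changed by more than threshold_db."""
    mask = []
    for row_before, row_after in zip(before, after):
        mask_row = []
        for a, b in zip(row_before, row_after):
            # 20 * log10 converts an amplitude ratio to decibels;
            # eps avoids division by zero and log of zero.
            ratio_db = 20.0 * math.log10((b + eps) / (a + eps))
            mask_row.append(abs(ratio_db) > threshold_db)
        mask.append(mask_row)
    return mask


# Invented amplitudes for two acquisitions with identical imaging parameters.
before = [[1.0, 1.0], [2.0, 0.5]]
after = [[1.1, 2.0], [2.0, 0.1]]
print(change_mask(before, after))  # [[False, True], [False, True]]
```

Using the ratio rather than the difference means that brightening and darkening by the same factor are treated symmetrically.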

In addition to the intensity of the backscattered signal, phase information is available in a SAR signal for interferometric analysis. This technique can be used to identify even small changes in surface structures, such as tracks in the desert. Additionally, data stacks can be used for interferometric digital elevation modeling and surface movement calculations.
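Interferometric analysis usually relies on the coherence between two co-registered complex acquisitions: a value near 1 means the phase relationship within a small window is stable, while a low value points to a disturbed surface. The sketch below computes the standard coherence magnitude for one window of complex pixels. The function name and the sample phases are invented for illustration.

```python
import cmath


def coherence(window_a, window_b):
    """Magnitude of the complex correlation between two pixel windows."""
    cross = sum(a * b.conjugate() for a, b in zip(window_a, window_b))
    power_a = sum(abs(a) ** 2 for a in window_a)
    power_b = sum(abs(b) ** 2 for b in window_b)
    if power_a == 0 or power_b == 0:
        return 0.0
    return abs(cross) / (power_a * power_b) ** 0.5


# Invented unit-amplitude pixels with arbitrary phases (radians).
reference = [cmath.rect(1.0, p) for p in (0.1, 0.9, 2.0, -1.2)]
# Same surface, constant phase offset: the phase relationship is preserved.
stable = [value * cmath.rect(1.0, 0.5) for value in reference]
# Disturbed surface: each pixel's phase changes by a different amount.
disturbed = [cmath.rect(1.0, p) for p in (0.1, 2.4, 5.0, -3.2)]

print(round(coherence(reference, stable), 3))
print(round(coherence(reference, disturbed), 3))
```

The first pair yields a coherence of essentially 1, while the second drops well below it, which is the kind of signal loss used to trace subtle surface disturbances.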

A unique way of acquiring elevation models using SAR satellites is under way with the TerraSAR-X and TanDEM-X satellite formation. The two satellites form a radar interferometer in space and acquire the database for the WorldDEM, a homogeneous global digital elevation model. Because the two satellites operate simultaneously, temporal decorrelation is avoided and coherence is preserved, even in vegetated areas.

Overall, SAR data are a good alternative, and complement, to optical satellite data. Some background information and analysis experience is necessary for SAR data interpretation, but with the right processing and interpretation the data can deliver valuable and unique information that isn't detectable through visual interpretation alone.

Satellite Imagery-Based Applications

Although combining optical and radar satellite data is still somewhat limited in its use, the capability to fuse data from different sources, with varying resolutions and swaths, can bring significant benefits to many applications (see Fusing SAR and Optical Satellite Data, below).

First, an integrated use of optical and radar satellite data improves the ability to detect and identify objects, features or structures. The two sensor types are sensitive to different surface characteristics. Thus, the ability to identify or discriminate among different objects is improved when both sources are used in an integrated way and both signatures are analyzed.

Second, combining optical and radar is useful when there's a need for frequent updates, for example when highly dynamic processes such as construction or illegal cross-border activities need to be persistently monitored. Depending on weather conditions, access constraints and monitoring demands, one sensor can substitute for another.

Third, combining different sensors is useful to reliably acquire either radar or optical imagery from an area of interest through frequent revisits. This is important for large mapping tasks. If image acquisitions are foreseen to be the base of a topographic mapping project, often a certain acquisition window is requested to guarantee the same surface status within the map to be generated.

A constellation of optical sensors improves the potential of cloud-free acquisition, thus minimizing the collection time and facilitating the observance of the acquisition window. Radar data supplement optical imagery in areas where cloud coverage is persistent and can guarantee data availability at a specific date.

Fourth, constellations offer the possibility to quickly acquire up-to-date imagery because of their high revisit frequency for any site on the globe, resulting in faster access to a given target.

Now the main challenge for increased use of fused datasets is ease of access to both optical and radar technologies. Such integration requires a single point of entrance for optical and radar satellite data as well as appropriate user support. It can help users select and implement the best satellite solution as easily as possible, based on the user's area, temporal constraints and issues/challenges that can arise.

Depending on the application, data from optical and synthetic aperture radar (SAR) sensors have specific advantages and disadvantages. For some applications one or the other is the best source, whereas for other tasks the complementary use of both provides the best result. The following examples of SAR and optical data fusion highlight the benefits of using fused data.

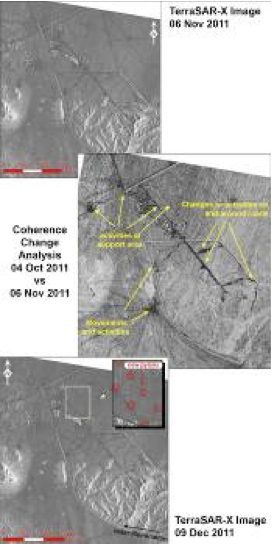

Site Monitoring, Nuclear Facility, Qom, Iran

A full integration of optical and radar satellite data can improve and speed up the monitoring task.

Radar can be used to automatically detect changed areas; even subtle changes on the surface, such as traffic on gravel roads, can be detected. Subsequently, optical imagery can be used to focus the detailed visual interpretation on identified areas of change.

Datasets from the high-resolution radar satellite TerraSAR-X and optical satellite Formosat-2, acquired consecutively between October 2010 and January 2011, were used to monitor activities around the nuclear enrichment facility in Qom, Iran. Analysis of the TerraSAR-X data, using amplitude and coherence change detection, identified construction activities for pylons for a power line at an early stage. The radar imagery identified subtle earthwork changes related to the pylons' foundation that weren't yet detectable in the optical images.

Furthermore, a linear-shaped loss of coherence allowed analysts to identify heavy equipment movement among the pylons' foundations. By examining the amplitude change, analysts could identify the finally erected pylons due to their metallic structure.

This example clearly shows the benefit of a joint approach using radar and optical satellite data. Coherence derived from radar imagery allows analysts to detect construction work even in early stages, before anything can be detected in optical imagery.

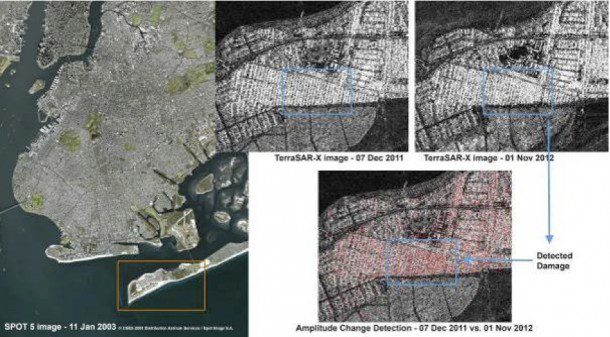

Hurricane Sandy, Long Island, N.Y.

On Oct. 29, 2012, Hurricane Sandy hit the New York City area, causing considerable damage on Long Island. Houses were destroyed, and fires laid waste to entire housing blocks.

The severe weather and dense cloud cover often associated with natural disasters can hamper the collection of optical data for damage detection. In this case, SAR data of the area provided timely information and input for damage assessment immediately after the event. Combining pre- and post-event SAR data allowed analysts to rapidly perform change-detection mapping.

In the case of Hurricane Sandy, a TerraSAR-X image, acquired Dec. 7, 2011, was available in the archive, and an image was acquired right after the event on Nov. 1, 2012. Applying amplitude change detection techniques to the two datasets revealed the heavily affected areas on Long Island. Overlaying this information onto an archive optical image allowed analysts to recognize buildings and infrastructure objects for reliable damage assessment and targeted crisis response management.