By Rob Mott, vice president of Geospatial Solutions, Intergraph Government Solutions (www.intergraphgovsolutions.com), Madison, Ala.

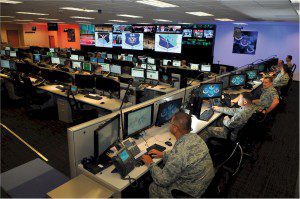

Modern command operation centers harness myriad sensor feeds and fuse them into a meaningful context.

From early Native Americans tracking a herd of buffalo across the Great Plains to an army of soldiers in medieval Europe warding off attacks on their castle, humans have long collected information about the environment and events through their senses, performed real-time analysis on observed patterns of activity, and then planned and collaborated to execute a specific mission. Sights, sounds, smells and physical touch all trigger real-time sensory inputs that are simultaneously processed by the brain to create a cohesive representation of our environment. So, in a sense, the human brain has done an excellent job at multi-intelligence (Multi-INT) fusion for centuries.

In today's military and intelligence environment, the individual's senses have been replaced in large part by sensors. However, the brain's innate ability to process various stimuli hasn't evolved much for thousands of years. Because sensors and data collection systems now deliver a wider variety and larger volume of information more quickly than ever before, we need to create an environment in which an analyst's ability to make assessments and decisions continually improves. Modern warfighters and the analysts who support their missions must rely on tools and techniques that harness these sensor feeds and fuse them into a meaningful context to achieve the desired results.

What Is Activity-Based Intelligence?

With the ultimate goal of improving intelligence superiority over our adversaries in mind, the U.S. intelligence community has developed a new paradigm called activity-based intelligence (ABI). ABI is an exciting advancement that's driving improvements in sensor development, information management, and data analysis and sharing. Rather than studying a target to understand and assess details of an object or area of interest, ABI's goal is to study changes in various data feeds from multiple sources over a designated time period. This approach indicates life patterns and helps to understand trends as indicators of future activities.

National Geospatial-Intelligence Agency (NGA) Director Letitia Long defines NGA's role with respect to ABI as focusing on what might happen next.

"We're moving into more of an anticipatory mode," she says. "We bring as many pieces of information as we can by using [Multi-INT] fusion and nontraditional sources."

How Does ABI Work?

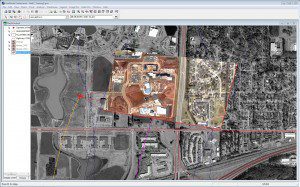

Displaying real-time unmanned aircraft system video feeds and other geospatial data over a satellite image improves context and understanding of unfolding activities in an area of interest.

Multi-INT fusion is an important component of ABI. The ability to bring imagery intelligence (IMINT), signals intelligence (SIGINT), human intelligence (HUMINT), measurement and signature intelligence (MASINT) and other sources into an integrated analytical environment provides a powerful setting that can help an analyst detect trends he or she might not otherwise see.

Feeds from one intelligence source can provide cues to specific data segments from other sources, leading to more meaningful analysis. A wide array of sensors (optical, laser, radar, acoustic, seismic and others) is used to collect as much detail about the environment as possible.

Although each type of sensor is powerful on its own, the power is magnified when information from multiple sensors is combined in one analytical environment. Together, these feeds combine to create a powerful and detailed depiction of the real-world environment.

Different sensor types are well suited for certain environments, but not all. For example, optical sensors are well suited to collect high-resolution images during the day, but not at night. And light detection and ranging (LiDAR) collections can occur at night, but will not perform well in cloudy and rainy conditions. Radar can collect at night and in cloudy conditions, but may not provide as high a resolution as other sensor types. The best solution is for analysts to work in an environment that supports LiDAR, radar and optical collections as well as multispectral and hyperspectral sources. Satellite imagery may serve as the canvas on which other dynamic data feeds are displayed.

However, ABI is much more than just Multi-INT analysis. A second key ABI focus is the ability to understand events and transactions, both of which have a time element associated with them. This aspect may include collecting dynamic information, such as unmanned aircraft system video feeds, or it may look at changes over time across a series of individual data collections, such as a series of still images taken over a period of time and played back in flipbook fashion.

Understanding the relationships among different sets of intelligence captured from different sources based on common time stamps is crucial for ABI analysis. Just as important as a common geospatial reference, time-stamped metadata associated with sensor collections are key for establishing the relationships among those collections and for interpreting additional details about unfolding events.
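To make the idea concrete, the following is a minimal sketch of how observations from different sources might be associated by time stamp and location. It is an illustration only, not a description of any fielded system: the `Observation` record, the `correlate` function, the five-minute window and the one-kilometer radius are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt


@dataclass(frozen=True)
class Observation:
    """Hypothetical record: one time-stamped, georeferenced collection."""
    source: str      # illustrative label, e.g. "IMINT" or "SIGINT"
    time: datetime   # collection time stamp (assumed to share one time zone)
    lat: float       # latitude in degrees
    lon: float       # longitude in degrees


def distance_km(a, b):
    """Great-circle distance between two observations (haversine formula)."""
    dlat = radians(b.lat - a.lat)
    dlon = radians(b.lon - a.lon)
    h = (sin(dlat / 2) ** 2
         + cos(radians(a.lat)) * cos(radians(b.lat)) * sin(dlon / 2) ** 2)
    return 2 * 6371.0 * asin(sqrt(h))  # 6371 km: mean Earth radius


def correlate(observations, max_gap=timedelta(minutes=5), max_km=1.0):
    """Pair observations from different sources that are close in time and space.

    The window and radius defaults are arbitrary example values.
    """
    ordered = sorted(observations, key=lambda o: o.time)
    pairs = []
    for i, first in enumerate(ordered):
        for second in ordered[i + 1:]:
            if second.time - first.time > max_gap:
                break  # list is time-sorted, so later items are further apart
            if (first.source != second.source
                    and distance_km(first, second) <= max_km):
                pairs.append((first, second))
    return pairs
```

Each returned pair is a candidate cue from one source to another; deciding whether the two observations are actually related remains the analyst's call.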

Understanding Temporal Analysis

Change detection is another crucial aspect of an ABI workflow, because it helps to determine changes in an environment over time. A common type of change detection involves comparing two images over the same area of interest from two different points in time and using computer algorithms to automatically detect changed pixels. This can apply to a range of data types. For example, comparisons between two images may yield some indicators of the pace of construction of a facility or the speed of a convoy traversing the landscape.
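A simple form of pixel-level change detection is image differencing: subtract one image from the other and flag pixels whose difference exceeds a threshold. The sketch below assumes two co-registered, single-band images of the same size, stored as nested lists of brightness values; the function names and the threshold of 30 are illustrative choices, and operational algorithms are typically far more sophisticated.

```python
def detect_changes(before, after, threshold=30):
    """Return a binary mask: 1 where |after - before| exceeds the threshold.

    Both images are lists of rows of numeric brightness values and are
    assumed to be co-registered already (pixel [r][c] covers the same
    ground location in both).
    """
    if len(before) != len(after) or any(
        len(row_b) != len(row_a) for row_b, row_a in zip(before, after)
    ):
        raise ValueError("images must have identical dimensions")
    return [
        [1 if abs(a - b) > threshold else 0 for b, a in zip(row_b, row_a)]
        for row_b, row_a in zip(before, after)
    ]


def changed_fraction(mask):
    """Share of pixels flagged as changed, from 0.0 to 1.0."""
    total = sum(len(row) for row in mask)
    return sum(sum(row) for row in mask) / total if total else 0.0
```

For example, `changed_fraction(detect_changes(before, after))` gives a single number that can be tracked across a series of collections to suggest how quickly a site is changing.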

However, change detection isn't limited to imagery, and it certainly isn't limited to 2-D data. Comparing two 3-D LiDAR datasets can provide a powerful visual depiction of specific changes taking place in an environment. This can be essential when planning for an urban mission, such as looking at changes to a viewshed due to recent construction activity in a part of a city.

In addition, change detection of two LiDAR datasets can show specific changes in elevation that may also indicate excavation. However, with the sheer volume of imagery on the rise, computer-assisted change detection is necessary.
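As a rough illustration of the elevation comparison, the sketch below differences two gridded elevation models derived from LiDAR and labels each cell as lowered ("cut"), raised ("fill") or unchanged. The grid layout, the 0.5-meter tolerance, the cell area and the function names are all assumptions made for the example, and both grids are assumed to be aligned and of equal size.

```python
def classify_elevation_change(dem_before, dem_after, tolerance_m=0.5):
    """Label each grid cell 'cut', 'fill' or 'same' from two elevation grids.

    Elevations are in meters; differences within the tolerance are treated
    as noise and labeled 'same'.
    """
    labels = []
    for row_before, row_after in zip(dem_before, dem_after):
        row = []
        for z0, z1 in zip(row_before, row_after):
            dz = z1 - z0
            if dz < -tolerance_m:
                row.append("cut")    # surface got lower: possible excavation
            elif dz > tolerance_m:
                row.append("fill")   # surface got higher: e.g. construction
            else:
                row.append("same")
        labels.append(row)
    return labels


def cut_volume_m3(dem_before, dem_after, cell_area_m2=1.0, tolerance_m=0.5):
    """Approximate volume removed: elevation loss times cell area, summed."""
    volume = 0.0
    for row_before, row_after in zip(dem_before, dem_after):
        for z0, z1 in zip(row_before, row_after):
            loss = z0 - z1
            if loss > tolerance_m:
                volume += loss * cell_area_m2
    return volume
```

Keeping the sign of the difference, rather than only its magnitude, is what separates possible excavation from new construction.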

Change detection is a complex process that requires rigorous image registration to maximize the accuracy of the results. Registration establishes coincident georeferenced points across multiple images so that their pixels can be properly aligned. It is necessary because images taken at different times often are collected from different angles or orientations and at different times of day or year. Shadows and glare need to be accounted for, as they can dramatically affect the quality of change-detection results.

One technique that can be applied to the change-detection process to improve accuracy is to remove atmospheric effects. Typical change-detection algorithms are run from desktop-based applications, but modern improvements now allow analysts to package the algorithms and publish them as a Web processing service. Such a service can be accessed remotely by a variety of other users and systems, including those with handheld devices such as tablets and smartphones.

Better Tools Improve Analysis

A comparison of two images shows how automatic dehazing improves the quality and usability of imagery, allowing an analyst to make better decisions.

ABI solutions are addressing some of the most challenging aspects of the military and intelligence mission set. Because many of the applications relate to processing initial video and imagery feeds to generate derivative products or automate analysis, the quality of those incoming feeds directly affects the quality of results.

ABI places an increased focus on analyzing dynamic activities in real time, so analysis and decision making must also speed up to ensure timely responses. Seconds and minutes are crucial.

In addition, ABI solutions must establish real-time connections to as many raw intelligence collections as possible. Ensuring the usability of these raw data can dramatically increase their value in such a process.

An ABI solution designed to automatically track moving ground vehicles from an airborne video source may have difficulty achieving satisfactory results if the video feed is grainy, obscured by clouds or smoke, or unstable and jittery. Applying technologies such as Intergraph's Video Analyst software to an ABI workflow allows an analyst to apply state-of-the-art video filters and sophisticated algorithms to the incoming streams and perform real-time corrections.

Such software includes specialty techniques such as dehazing, stabilization and gamma correction while allowing an operator to fine-tune parameters and thresholds to optimize the resulting output. These corrected video feeds then can be processed by object-tracking modules and other applications. Transforming poor-quality, sometimes unusable video into higher-quality, information-rich streams allows analysts to improve the effectiveness of ABI solutions.
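Of the corrections named above, gamma correction is the simplest to illustrate. The sketch below applies the standard power-law mapping, out = max × (in / max)^(1/gamma), to one grayscale frame. It is a generic textbook version, not a description of how any particular product implements the filter; the function name, the nested-list frame format and the default gamma of 2.2 are assumptions for the example.

```python
def gamma_correct(frame, gamma=2.2, max_value=255):
    """Apply power-law gamma correction to one grayscale frame.

    The frame is a list of rows of integer pixel values in 0..max_value.
    With this formula, gamma > 1 brightens dark regions and gamma < 1
    darkens them; an operator would tune gamma for the scene.
    """
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    # Precompute a lookup table so each pixel costs one list index,
    # which matters when the same correction runs on every video frame.
    lut = [
        round(max_value * (value / max_value) ** (1.0 / gamma))
        for value in range(max_value + 1)
    ]
    return [[lut[pixel] for pixel in row] for row in frame]
```

Because the lookup table depends only on the gamma value, it can be built once and reused until the operator changes the setting.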

Also key to ABI is recognizing that open-source information can be a key indicator of behavior patterns. Tapping into the vast area of social media, including crowd-sourced information, is critical. Social media is dynamic and pervasive and can provide valuable insight into unfolding events. Keyword searches, geolocations of Twitter feeds, and time-stamped place references, pictures and video all provide a rich information source.

Adapting to the Art of Tradecraft

Tradecraft takes over where technology leaves off. ABI is a new tradecraft, a new way to approach the problem. Technology must adapt to this tradecraft, not the other way around. Geospatial technology providers must develop solutions that are malleable enough to conform to ABI's evolving demands.

Analysts must have the flexibility to mix and match products from many different vendors in whatever manner they choose to best solve a particular problem. Building technology that conforms to open standards is a critical part of achieving this goal, as such standards allow for many organizations, companies and individuals to contribute.

The more flexible and adaptable vendors make standards-based technology offerings, the more open those technologies will be to innovative mash-ups, thereby improving the ability to answer tough questions and solve tough problems. Without this adaptability in mind when developing technology, vendors limit the creative process.

ABI solutions must allow analysts to work with more dexterity than ever before. Introducing new interfaces for interacting with traditional systems may allow users to manipulate various datasets in new and interesting ways. For example, introducing an Xbox-style game controller as an interface to an image analysis application may allow an analyst to zoom and roam more intuitively and work more efficiently.

Enhancing the Human Element

ABI is equal parts technology and tradecraft. However, as advanced as these modern systems are becoming, they don't replace the human in the loop, at least not yet.

Although ABI technologies can't predict the future or read minds, they can provide innovative ways to effectively combine multiple sources of intelligence and geospatial information and address the importance of the time domain. ABI provides analysts with improved capabilities that allow them to better understand behavior patterns and make better decisions.

The ability to make accurate assessments and predictions is still a human-based activity. As a result, it's more appropriate to classify ABI systems as ways to enable humans to better predict future behaviors and actions and to better assess driving forces and motivating factors. It's clear that the exciting, emerging ABI paradigm, with an equal focus on technology and tradecraft, can create a significant intelligence advantage.