The emergence of cloud computing in government is reducing geospatial data costs and improving user access.

By James S. Blundell, Mike West, Betty Davlin, and Brandon Johnson, Geospatial Products and Solutions, Overwatch Systems (www.overwatch.com), Sterling, Va.

The emergence of cloud computing as a new paradigm for supporting the government's goals of reduced costs and improved user access is driving new approaches to cataloging geospatial data.

Experience has shown that geospatial data collection assets will continue to outpace analysts' ability to process and exploit information in a timely manner. For example, DigitalGlobe's imaging satellites, QuickBird, WorldView-1 and WorldView-2, together collect approximately 1.5 million square kilometers of high-resolution imagery every day. The gap is only widening with the addition of full-motion video (FMV) and wide-area surveillance (WAS) collection platforms (see Motion Video Exploitation, above), and it is especially relevant for time-critical exploitation of imagery and FMV data supporting strategic and tactical intelligence missions.

To address the issue, the intelligence community continually refines and improves its enterprise strategies for data storage and management, including designing large data stores to provide ubiquitous and cost-effective access to a wide range of data products such as reports, maps and images.

Despite these challenges, emerging strategies and approaches are unlocking a wide range of benefits from cataloging geospatial data in the cloud for enterprise data storage and management.

Computational Strategies

Cloud computing provides access to high-performance computing (HPC) strategies such as MapReduce (MR), Bulk Synchronous Parallel (BSP) processing and Language Integrated Query (LINQ). For example, the inherent parallelism of the cloud increases the processing power available to prepare multiple image tiles, adjusting output based on a user's current location and recorded preferences. Cloud processors can simultaneously fetch and send the next set of imagery tiles to the client for caching, prepare man-made infrastructure overlays for ready access upon request, and generate lower-resolution tile sets to fill gaps if communication bandwidth suddenly narrows during roaming.
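
To make the tile-prefetching idea concrete, here is a minimal sketch that assumes the common Web Mercator "XYZ" tiling scheme; the article does not say which tiling scheme is used. Given a user's position and zoom level, it lists the current tile, the surrounding tiles to send ahead for client caching, and the parent tile one zoom level down as a lower-resolution fallback. The function names and the caller-supplied `fetch_tile` are hypothetical.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def latlon_to_tile(lat, lon, zoom):
    """Convert WGS84 latitude/longitude to (x, y, zoom) in the XYZ Web Mercator scheme."""
    n = 2 ** zoom
    lat_rad = math.radians(lat)
    x = int((lon + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp edge cases such as lon == 180 or latitudes near the poles
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1), zoom


def prefetch_plan(lat, lon, zoom, ring=1):
    """Current tile, surrounding tiles to cache ahead, and a lower-resolution fallback."""
    x, y, z = latlon_to_tile(lat, lon, zoom)
    n = 2 ** z
    prefetch = []
    for dy in range(-ring, ring + 1):
        for dx in range(-ring, ring + 1):
            ny = y + dy
            if not 0 <= ny < n:
                continue  # no tiles beyond the top or bottom edge of the map
            tile = ((x + dx) % n, ny, z)  # wrap around the antimeridian
            if tile != (x, y, z) and tile not in prefetch:
                prefetch.append(tile)
    # The parent tile covers the same area at half the resolution
    parent = (x // 2, y // 2, z - 1) if z > 0 else None
    return {"current": (x, y, z), "prefetch": prefetch, "fallback": parent}


def fetch_all(tiles, fetch_tile, workers=8):
    """Fetch tiles in parallel; fetch_tile is a caller-supplied (hypothetical) function."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(zip(tiles, pool.map(fetch_tile, tiles)))
```

In a cloud deployment the parallel fetch would be spread across many processors rather than one thread pool; the thread pool here only illustrates doing the prefetch and fallback work concurrently with the user's current request.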

Products such as Microsoft's LINQ to HPC can help determine the best strategy to structure, compute and deliver a user's data request. Although these requests resemble a typical Structured Query Language (SQL) query, such technologies scale an equivalent request massively across multiple processors and data caches. They also allow program logic to be injected more directly. So while an SQL request might not perform pixel colorization as a user-defined function, such colorization can be entirely natural and easily invoked during the reduce phase of a map-reduce operation.
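
The colorization point can be illustrated with a minimal, single-process map-reduce sketch written in plain Python rather than LINQ to HPC, whose API is not shown here. The map phase emits (tile, pixel) pairs, a shuffle groups them by tile, and the reduce phase applies a user-supplied colorization function to each tile's pixels. The record layout and the `heat_ramp` color ramp are hypothetical.

```python
from collections import defaultdict


def map_phase(records):
    """Each record is (tile_id, row, col, intensity 0-255); emit it keyed by tile."""
    for tile_id, row, col, value in records:
        yield tile_id, (row, col, value)


def shuffle(pairs):
    """Group mapped values by key, as a framework would between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def heat_ramp(value):
    """Hypothetical colorization UDF: map intensity to an (R, G, B) tuple, blue to red."""
    v = max(0, min(255, value))
    return (v, 0, 255 - v)


def reduce_phase(groups, colorize):
    """Program logic (the colorizer) runs inside reduce, once per tile's pixel set."""
    return {
        tile_id: {(r, c): colorize(v) for r, c, v in pixels}
        for tile_id, pixels in groups.items()
    }


def map_reduce(records, colorize=heat_ramp):
    return reduce_phase(shuffle(map_phase(records)), colorize)
```

On a cluster, each phase would run on different nodes and the shuffle would move data between them; the design point is simply that an arbitrary function slots into the reduce step without being expressed as SQL.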

The cloud also supports replication and database sharding for reliable, scalable and distributed tile, data and metadata servers. By using NoSQL data stores such as HBase, Cassandra, MongoDB and CouchDB, the cloud can provide high availability for data and metadata. The many ways of managing data in such stores make it possible to choose a query strategy suited to each kind of data.
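
One general technique for spreading data across nodes with replication is consistent hashing, the idea behind Cassandra-style token rings. The sketch below is a generic illustration, not any product's implementation; the node names and the `HashRing` class are hypothetical. Each key, such as a tile identifier, maps to a position on a ring, and its replicas are the next distinct nodes clockwise.

```python
import bisect
import hashlib


class HashRing:
    """Consistent-hash ring with virtual nodes and simple replica placement."""

    def __init__(self, nodes, vnodes=64):
        self._nodes = sorted(set(nodes))
        ring = []
        for node in self._nodes:
            # Several virtual positions per node even out the load
            for i in range(vnodes):
                ring.append((self._hash(f"{node}#{i}"), node))
        ring.sort()
        self._ring = ring
        self._positions = [h for h, _ in ring]

    @staticmethod
    def _hash(key):
        return int.from_bytes(hashlib.md5(key.encode("utf-8")).digest()[:8], "big")

    def replicas(self, key, count=3):
        """Return up to `count` distinct nodes, walking clockwise from the key's position."""
        count = min(count, len(self._nodes))
        i = bisect.bisect(self._positions, self._hash(key))
        result = []
        while len(result) < count:
            node = self._ring[i % len(self._ring)][1]
            if node not in result:
                result.append(node)
            i += 1
        return result
```

The appeal of this design is that adding or removing a node only moves the keys in the ring segments that node owned, so tile and metadata servers can grow without reshuffling everything.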

For example, HBase (or even the Hadoop Distributed File System, HDFS, on which HBase sits) is designed for accessing massive amounts of write-occasionally, read-frequently data such as map tiles. Cassandra's column-family stores are equally at home supporting Resource Description Framework (RDF) data or hierarchically oriented metadata. MongoDB's document store supports rapid record updates without rewriting entire records. Other technologies, such as Lucene/Solr, further assist the query process by distributing index contents across the cloud.
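
Because HBase keeps rows sorted by row key, key design decides which tiles sit together on disk. A minimal sketch, assuming tiles are addressed with the quadkey scheme documented for Bing Maps (the article names no key design): each quadkey digit selects a quadrant, so tiles sharing a prefix are spatially nested and sort next to each other, turning an area lookup into a prefix scan. The `row_key` layout is hypothetical.

```python
def tile_to_quadkey(x, y, zoom):
    """Interleave the bits of x and y into a base-4 string, one digit per zoom level."""
    digits = []
    for i in range(zoom, 0, -1):
        mask = 1 << (i - 1)
        digit = 0
        if x & mask:
            digit += 1
        if y & mask:
            digit += 2
        digits.append(str(digit))
    return "".join(digits)


def quadkey_to_tile(quadkey):
    """Inverse of tile_to_quadkey: recover (x, y, zoom)."""
    x = y = 0
    zoom = len(quadkey)
    for i in range(zoom, 0, -1):
        mask = 1 << (i - 1)
        d = quadkey[zoom - i]
        if d not in "0123":
            raise ValueError(f"invalid quadkey digit: {d!r}")
        if d in "13":
            x |= mask
        if d in "23":
            y |= mask
    return x, y, zoom


def row_key(layer, x, y, zoom):
    """Hypothetical key layout: layer name first, so each layer's tiles are contiguous."""
    return f"{layer}:{tile_to_quadkey(x, y, zoom)}"
```

For example, `tile_to_quadkey(3, 5, 3)` yields `"213"`, and all four of that tile's children at zoom 4 have keys beginning with `"213"`.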

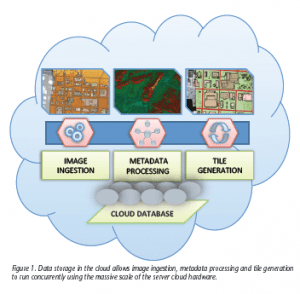

Overwatch's expertise in handling image data has extended into cataloging and database management. For example, the company's GeoCatalog solution provides scalable data management and cataloging capabilities, enabling users to organize, search and retrieve geospatial data with configurable schemas, queries, templates and result content (Figure 1). It scales from disconnected laptops to small work groups and enterprise users, and it supports a wide variety of data and data sets, including the U.S. government's Digital Point Positioning Database (DPPDB) product.
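
For readers unfamiliar with catalog search, the following is a generic, in-memory sketch of the kind of query a geospatial catalog answers: filter metadata records by footprint, product type, acquisition date and arbitrary attributes. It is not GeoCatalog's implementation; the `CatalogRecord` fields and the `search` parameters are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CatalogRecord:
    product_id: str
    product_type: str      # e.g. "image", "map", "report"
    bbox: tuple            # (min_lon, min_lat, max_lon, max_lat)
    acquired: date
    attributes: dict = field(default_factory=dict)


def bbox_intersects(a, b):
    """True if two (min_lon, min_lat, max_lon, max_lat) boxes overlap or touch."""
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]


def search(records, bbox=None, product_type=None, start=None, end=None, attributes=None):
    """Return matching records, newest first; any filter left as None is ignored."""
    hits = []
    for r in records:
        if bbox is not None and not bbox_intersects(r.bbox, bbox):
            continue
        if product_type is not None and r.product_type != product_type:
            continue
        if start is not None and r.acquired < start:
            continue
        if end is not None and r.acquired > end:
            continue
        if attributes and any(r.attributes.get(k) != v for k, v in attributes.items()):
            continue
        hits.append(r)
    return sorted(hits, key=lambda r: r.acquired, reverse=True)
```

A production catalog would push these filters into a spatial index or database query rather than scanning a list; the linear scan just keeps the filtering logic visible.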

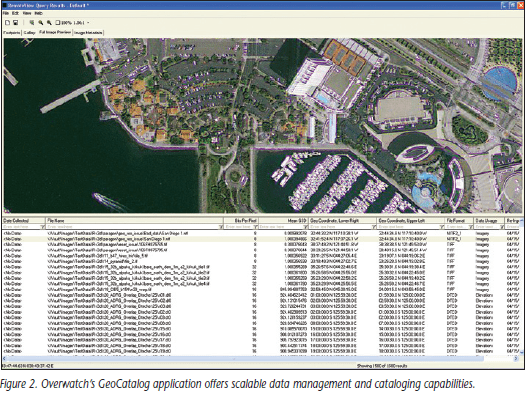

GeoCatalog has been integrated into imagery analysis tools, such as RemoteView and ELT. GeoCatalog includes a taxonomy or schema definition language (Figure 2) that can be used to generate Data Definition Language (DDL) artifacts that support both SQL Server and Oracle. GeoCatalog offers a wide array of capabilities that allow analysts to quickly and efficiently locate and manage relevant geospatial data and streamline their workflow.
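
The idea of generating DDL for more than one database from a single schema definition can be sketched as follows. The taxonomy format here (a Python dict) and the `generate_ddl` function are hypothetical and do not reproduce GeoCatalog's actual language; the column types are standard SQL Server and Oracle types, with `GEOGRAPHY` and `SDO_GEOMETRY` used for footprints.

```python
# Per-dialect mapping from abstract taxonomy types to concrete column types
TYPE_MAP = {
    "sqlserver": {
        "string": "NVARCHAR({length})", "integer": "INT", "float": "FLOAT",
        "datetime": "DATETIME2", "geometry": "GEOGRAPHY",
    },
    "oracle": {
        "string": "VARCHAR2({length} CHAR)", "integer": "NUMBER(10)", "float": "BINARY_DOUBLE",
        "datetime": "TIMESTAMP", "geometry": "SDO_GEOMETRY",
    },
}


def generate_ddl(entity, dialect):
    """Emit a CREATE TABLE statement for one taxonomy entity in the given dialect."""
    types = TYPE_MAP[dialect]
    columns = []
    for fld in entity["fields"]:
        # str.format ignores the unused length argument for fixed-size types
        col_type = types[fld["type"]].format(length=fld.get("length", 255))
        null = "" if fld.get("nullable", True) else " NOT NULL"
        columns.append(f"    {fld['name']} {col_type}{null}")
    key = entity.get("key")
    if key:
        columns.append(f"    CONSTRAINT pk_{entity['name']} PRIMARY KEY ({key})")
    return f"CREATE TABLE {entity['name']} (\n" + ",\n".join(columns) + "\n);"
```

Keeping the taxonomy abstract and isolating dialect differences in one mapping table means a new target database needs only a new entry, not a new schema.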

Several adapters provide federated access to additional data sources and to legacy standard data-request technologies using Hypertext Transfer Protocol (HTTP) requests and Open Database Connectivity (ODBC). These plug-in adapters can be extended to include Open Geospatial Consortium (OGC) and other standard interfaces.

The Integrated Exploitation Capability (IEC) buffer adapter is a client-side adapter that can be easily adjusted on-site through Extensible Markup Language (XML)-based configuration files. The GeoCatalog DataMaster Adaptor has a client-side component, like the IEC adapter, that communicates with a server-side component on a DataMaster Solaris server. Together, these adapters run a single query, issued from a common user interface, against multiple sources and display the combined results.
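
The federated pattern described above, where one query fans out to several plug-in adapters and the results are merged for a single display, can be sketched as follows. The `CatalogAdapter` interface and `InMemoryAdapter` stand-in are hypothetical; a real adapter would wrap an HTTP, ODBC or OGC source.

```python
from abc import ABC, abstractmethod
from concurrent.futures import ThreadPoolExecutor


class CatalogAdapter(ABC):
    """Hypothetical plug-in interface: each adapter wraps one data source."""
    name = "adapter"

    @abstractmethod
    def search(self, query: dict) -> list:
        ...


class InMemoryAdapter(CatalogAdapter):
    """Stand-in for an adapter backed by an HTTP, ODBC or OGC service."""

    def __init__(self, name, rows):
        self.name = name
        self.rows = rows

    def search(self, query):
        return [r for r in self.rows if all(r.get(k) == v for k, v in query.items())]


def federated_search(adapters, query, timeout=10.0):
    """Send one query to every adapter in parallel; merge results tagged by source."""
    merged, errors = [], {}
    with ThreadPoolExecutor(max_workers=max(1, len(adapters))) as pool:
        futures = {pool.submit(a.search, query): a for a in adapters}
        for fut, adapter in futures.items():
            try:
                for row in fut.result(timeout=timeout):
                    merged.append({**row, "source": adapter.name})
            except Exception as exc:  # one failing source should not sink the whole query
                errors[adapter.name] = str(exc)
    return merged, errors
```

Returning errors alongside results lets the common user interface show partial results from the sources that answered while flagging the ones that did not.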

Bringing Cataloging to the Cloud

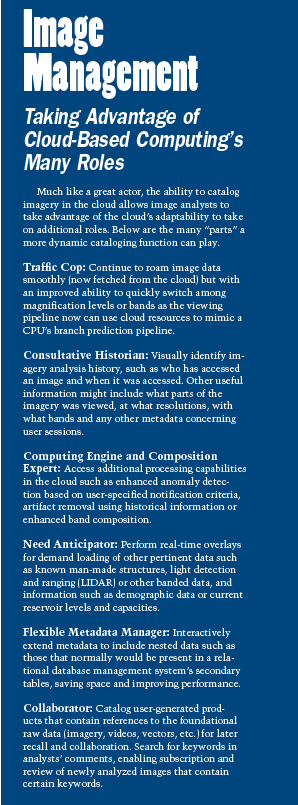

Migrating imagery cataloging to the cloud provides opportunities to enhance existing features and add new capabilities. Traditional cataloging relies on SQL-based metadata stores designed for defining, creating and maintaining metadata associated with imagery and cartographic products. By operating in the cloud, cataloging can expand into new territory and take center stage as needed (see Image Management: Taking Advantage of Cloud-Based Computing's Many Roles, page 39).

To make this vision a reality, Overwatch is merging GeoCatalog's capabilities with the flexibility of Cloudwave, which offers Web-based data exploration, query and presentation widgets. A Cloudwave-based GeoCatalog promises enhanced Web visualization of metadata, including interactive taxonomy, change history and link charts that tie analysts' data and work products together. The future holds great promise as remote sensing experts further exploit the possibilities of cataloging geospatial data in the cloud and deliver greater value to the analysts who need such functionality.