Sensor fusion advancements are being used to develop and enhance innovative coastal applications at the Joint Airborne LiDAR Bathymetry Technical Center of Expertise (JALBTCX). Established in 1998 through a partnership of the U.S. Army Corps of Engineers (USACE), the U.S. Naval Oceanographic Office (NAVO) and the National Oceanic and Atmospheric Administration (NOAA), the center provides coastal mapping and charting support to meet the operational requirements of the Army and Navy. The partnership also performs research and development to advance airborne light detection and ranging (LiDAR) bathymetry and complementary technologies.

The partnership led the development of the Scanning Hydrographic Operational Airborne Lidar Survey (SHOALS) system, owned by USACE. Between 1994 and 2003, SHOALS provided survey data and demonstrated the ability to deliver fast, accurate hydrographic surveys of inlets, navigation channels and shore protection projects. Its data also supported numerical model development, nautical charting, structure condition assessments, sediment process studies and disaster response.

As capabilities and technology advanced, the Compact Hydrographic Airborne Rapid Total Survey (CHARTS) system was developed and began survey operations in 2003 (see CHARTS and Mapping Products, page 34). NAVO uses the system for overseas nautical charting missions and shares it with USACE, which uses CHARTS and similar contracted survey capabilities to support its National Coastal Mapping Program (NCMP).

In 2004, the center moved to its current location in Kiln, Miss., and the NCMP was established. The program has since collected airborne LiDAR and imagery data for more than 15,000 kilometers of shoreline along the Gulf, Atlantic and Pacific Coasts and the Great Lakes, where the system will return in 2011 and 2012. Survey efforts continue to collect high-resolution bathymetric and topographic LiDAR elevation data, as well as hyperspectral and aerial imagery, within a 1-mile-wide swath of the U.S. coast on a recurring schedule. These data support regional sediment management, navigation, environmental restoration, regulatory enforcement, asset management and emergency response activities in the coastal zone.

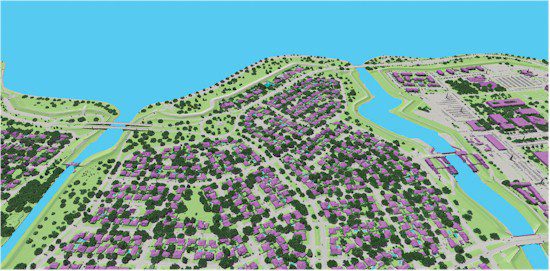

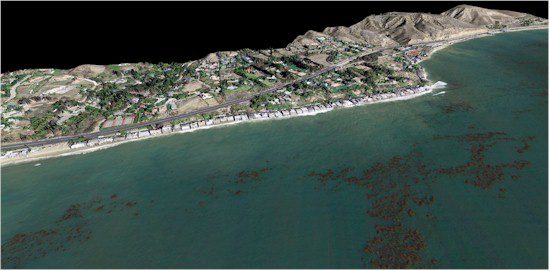

Figure 1. A view along the south shore of Lake Pontchartrain, La., in 2009 illustrates land cover and physical coastal features identified using an image fusion classification approach.

Image Fusion for Coastal Applications

In addition to continuing to develop standard NCMP mapping products, the center works to evolve airborne technology through research and development efforts that fuse LiDAR elevation data and hyperspectral imagery to support physical and environmental characterization, as well as coastal engineering applications. Current examples of image fusion show distinct advantages over single-sensor analyses, such as improved classification accuracy and feature extraction. Although image fusion isn't new to remote sensing, integrated airborne sensor suites offer improvements, such as minimizing the co-registration errors and temporal disparities that are common when combining separately collected datasets. Specifically, fusing hyperspectral imagery with LiDAR elevation data combines detailed structural information from the active LiDAR sensor with detailed spectral information from the passive hyperspectral sensor. The result is new or enhanced ways to target or characterize complex landscape features and examine detailed spatial relationships.

Ongoing image fusion work at the center uses hyperspectral imagery and topographic LiDAR elevation data to classify basic land cover types, segmenting and identifying pixels by their spectral and elevation characteristics with either a decision tree classifier or a system of user-defined equations. The resulting land cover classification is useful for coastal environmental projects that need to quantify physical characteristics or monitor landscape change over time. For example, temporal analysis using hyperspectral and LiDAR fusion is helping researchers characterize thematic and structural changes and recovery patterns along the south shore of Lake Pontchartrain, La., following Hurricane Katrina (Figure 1).
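To make the "user-defined equations" idea concrete, the following Python sketch labels each pixel from two fused inputs: a normalized difference vegetation index (NDVI) computed from hypothetical red and near-infrared hyperspectral bands, and LiDAR-derived height above ground. The class names, thresholds and sample values are illustrative assumptions, not the center's actual rules.

```python
from typing import List

# Hypothetical thresholds; real rules would be tuned per site and sensor.
NDVI_VEG = 0.3        # NDVI at or above this is treated as vegetated
HEIGHT_TALL_M = 2.0   # LiDAR height above ground (m) separating low from tall features
NIR_WATER = 0.05      # very low near-infrared reflectance suggests open water


def ndvi(red: float, nir: float) -> float:
    """Normalized difference vegetation index from red and NIR reflectance."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def classify_pixel(red: float, nir: float, height_m: float) -> str:
    """Walk a small decision tree using one spectral and one elevation feature."""
    if nir < NIR_WATER:
        return "water"
    if ndvi(red, nir) >= NDVI_VEG:
        # Vegetated: LiDAR height separates trees and shrubs from marsh and grass.
        return "tall vegetation" if height_m >= HEIGHT_TALL_M else "low vegetation"
    # Non-vegetated: elevated surfaces are likely structures, the rest bare ground.
    return "structure" if height_m >= HEIGHT_TALL_M else "bare ground"


def classify_raster(red: List[List[float]], nir: List[List[float]],
                    height: List[List[float]]) -> List[List[str]]:
    """Apply the per-pixel rules to co-registered grids of equal shape."""
    return [
        [classify_pixel(r, n, h) for r, n, h in zip(r_row, n_row, h_row)]
        for r_row, n_row, h_row in zip(red, nir, height)
    ]


red = [[0.04, 0.08], [0.20, 0.22]]
nir = [[0.45, 0.50], [0.25, 0.03]]
height = [[0.3, 8.0], [6.5, 0.0]]
print(classify_raster(red, nir, height))
# [['low vegetation', 'tall vegetation'], ['structure', 'water']]
```

A trained decision tree classifier would learn comparable splits from labeled samples; writing the rules by hand simply makes the spectral-then-elevation logic explicit. Note how the two tall pixels are separated only by their spectra, and the two vegetated pixels only by their LiDAR height.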

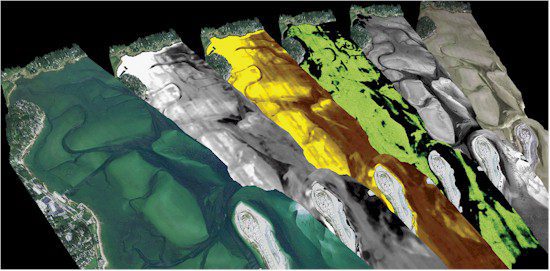

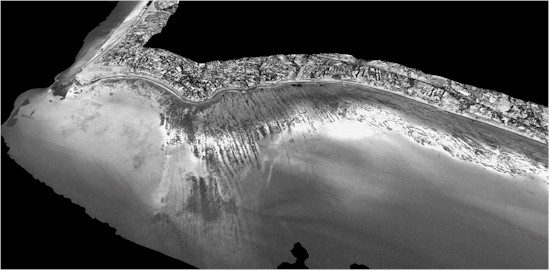

Figure 2. An image stack shows (from left to right) water-leaving reflectance, water column attenuation, colored dissolved organic matter (CDOM) absorption, chlorophyll concentration, active seafloor reflectance and spectral seafloor reflectance at Plymouth Harbor, Mass.

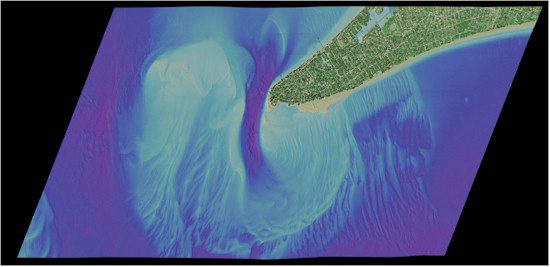

Another development area is the use of bathymetric LiDAR data and hyperspectral imagery to characterize the seafloor and identify bottom (benthic) types, as well as to characterize water column properties.

In partnership with Optech International, the center uses image fusion for a diverse range of environmental projects, from characterizing eroding toxic sands to discriminating submersed aquatic vegetation (SAV) species and sensitive coral reefs.

In general, algorithms use the bathymetric LiDAR data to help correct the hyperspectral imagery, from which water depth, water column constituents and benthic seafloor reflectance are derived. As a result, scientists can generate additional information about the water column and seafloor. For example, seafloor reflectance can be used to classify bottom types (e.g., sand, mud and SAV). In Plymouth Harbor, Mass., various bottom types and different SAV species are identified using water-leaving reflectance images in combination with other derived imagery (Figure 2). Such data are useful for a wide variety of coastal planning activities, ranging from minimizing the impacts of USACE maintenance dredging on sensitive ecological resources to prioritizing local SAV restoration efforts.
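As a rough illustration of how a LiDAR depth can help recover seafloor reflectance, the sketch below uses a widely used two-flow shallow-water approximation, R = R_deep + (R_b − R_deep)·exp(−2·Kd·z), and inverts it for the bottom reflectance R_b at each band. This is a swapped-in textbook model, not the center's or Optech's algorithm. It assumes the imagery is already corrected to subsurface reflectance and that the deep-water reflectance and attenuation Kd are known per band; the three-band values and bottom-type library are hypothetical.

```python
import math
from typing import Dict, List

# Hypothetical three-band (blue, green, red) bottom reflectance library.
LIBRARY: Dict[str, List[float]] = {
    "sand": [0.25, 0.30, 0.28],
    "mud": [0.08, 0.10, 0.09],
    "SAV": [0.04, 0.09, 0.03],
}
DEEP = [0.03, 0.02, 0.005]  # assumed optically deep water reflectance per band
KD = [0.15, 0.10, 0.50]     # assumed diffuse attenuation per band (1/m)


def observed_reflectance(r_bottom: float, r_deep: float, kd: float, depth_m: float) -> float:
    """Forward model: the bottom signal decays as exp(-2*Kd*z) over the two-way path."""
    return r_deep + (r_bottom - r_deep) * math.exp(-2.0 * kd * depth_m)


def bottom_reflectance(r_obs: float, r_deep: float, kd: float, depth_m: float) -> float:
    """Invert the forward model for bottom reflectance, using the LiDAR depth z."""
    if depth_m < 0 or kd < 0:
        raise ValueError("depth and attenuation must be non-negative")
    return r_deep + (r_obs - r_deep) * math.exp(2.0 * kd * depth_m)


def correct_spectrum(r_obs: List[float], r_deep: List[float], kd: List[float],
                     depth_m: float) -> List[float]:
    """Apply the inversion band by band for one pixel."""
    return [bottom_reflectance(r, rd, k, depth_m) for r, rd, k in zip(r_obs, r_deep, kd)]


def nearest_bottom_type(spectrum: List[float], library: Dict[str, List[float]] = LIBRARY) -> str:
    """Label a spectrum with the library entry at the smallest squared distance."""
    return min(library, key=lambda name: sum((a - b) ** 2 for a, b in zip(spectrum, library[name])))


# Simulate a sand bottom at a LiDAR depth of 3 m, then classify with and without correction.
raw = [observed_reflectance(s, d, k, 3.0) for s, d, k in zip(LIBRARY["sand"], DEEP, KD)]
print(nearest_bottom_type(raw))                                   # mud
print(nearest_bottom_type(correct_spectrum(raw, DEEP, KD, 3.0)))  # sand
```

In this toy case the uncorrected spectrum is darkened by the water column and matches the wrong bottom type; once the LiDAR depth is used to remove the attenuation, the sand signature is recovered.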

New and ongoing research uses image fusion techniques to enhance coastal land cover classification for identifying thematic and geomorphic features. Building on classification methods already developed at the center, a new approach is being explored for more detailed analysis of natural and physical features of interest, such as vegetation, coastal features, coastal structures and buildings. Its hierarchical class structure retains the basic land cover types identified by the current approach while adding finer levels of detail for identifying specific features and structural components.

A detailed coastal classification schema built on a fusion approach is valuable to a wide audience, including coastal scientists, planners and engineers who want to use the latest information to better manage and monitor critical coastal regions. Ultimately, the classification will be available for the coastal United States using NCMP data, making coastal change detection analyses possible.

For coastal engineering, image fusion offers new insight into engineering projects, shore protection and navigation activities. The fused data can help identify geomorphic features, such as dunes and bluffs, by distinguishing them from buildings and other structures with similar elevation derivatives. The fused data can also show the presence and type of vegetation, which helps determine the stability of these features.

The fused data can also enhance condition assessments of inlet-stabilizing structures, such as jetties, by combining the visual inspection provided by high-resolution imagery with the engineering survey provided by LiDAR elevation data. Delineating inlet features, such as the navigation channel and ebb shoal, allows engineers to quantify shoaling and feature migration, which can compromise safe navigation through the inlet. Coastal flood protection structures, such as levees, can also be assessed with the fused data to identify key structural components and encroaching vegetation species.
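Once the navigation channel has been delineated, shoaling can be quantified by differencing repeat LiDAR surveys inside it. The sketch below is a minimal example under stated assumptions: two co-registered elevation grids (positive up, so the seafloor is negative), a boolean channel mask from the delineation step, a uniform cell area, and a hypothetical project elevation. The function name, grids and values are illustrative only.

```python
from typing import List, Tuple

Grid = List[List[float]]
Mask = List[List[bool]]


def channel_change(before: Grid, after: Grid, channel: Mask, cell_area_m2: float,
                   project_elev_m: float) -> Tuple[float, float, int]:
    """Return (shoaling volume m^3, scour volume m^3, cells above project elevation)
    for cells inside the delineated channel.

    Elevations are positive up, so a rise in the seafloor between surveys is shoaling.
    """
    shoal = scour = 0.0
    shallow_cells = 0
    for b_row, a_row, m_row in zip(before, after, channel):
        for b, a, inside in zip(b_row, a_row, m_row):
            if not inside:
                continue  # ignore cells outside the channel polygon
            dz = a - b
            if dz > 0:
                shoal += dz * cell_area_m2
            elif dz < 0:
                scour += -dz * cell_area_m2
            if a > project_elev_m:
                shallow_cells += 1  # seafloor now shoaler than the project depth
    return shoal, scour, shallow_cells


before = [[-12.5, -12.4, -11.0], [-12.6, -12.5, -11.2]]
after = [[-12.0, -12.5, -10.5], [-12.6, -11.8, -11.0]]
channel = [[True, True, False], [True, True, False]]
shoal, scour, shallow = channel_change(before, after, channel, cell_area_m2=4.0,
                                       project_elev_m=-12.2)
print(round(shoal, 2), round(scour, 2), shallow)  # 4.8 0.4 2
```

Counting cells shoaler than the project elevation, in addition to net volumes, highlights where shoaling actually threatens safe passage rather than just where sediment has accumulated.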

Environmental applications include landscape-based image fusion mapping for invasive species detection and habitat identification, which could ultimately inform environmental management strategies and help prioritize future treatment scenarios. Wetland and beach characterization is another development area: hyperspectral imagery is used to map sand types, and LiDAR elevation data are used to track erosion and accretion rates, together revealing distinctive sand transport patterns and changes. In addition, image fusion is useful for detailed wetland mapping.

LiDAR elevation data are used to examine species patterns, zonation and suitability relative to elevation gradients, while hyperspectral imagery is used to detect individual species or habitat assemblages. Wetlands are often indicators of environmental quality, and identifying critical species is important for monitoring coastal health and planning wetland restoration activities.
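Examining zonation relative to elevation amounts to joining the two fused layers pixel by pixel: species labels from the hyperspectral map and elevations from the LiDAR surface. The sketch below summarizes each mapped species' elevation range and a histogram by elevation bin. It assumes the two layers are already co-registered and flattened to matching lists; the bin width, species labels and values are hypothetical.

```python
import math
from collections import Counter, defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class Zonation:
    low_m: float
    median_m: float
    high_m: float
    bins: Dict[float, int]  # lower edge of elevation bin (m) -> pixel count


def elevation_zonation(labels: List[str], elevations_m: List[float],
                       bin_m: float = 0.25) -> Dict[str, Zonation]:
    """Summarize where each mapped species occurs along the LiDAR elevation gradient."""
    if len(labels) != len(elevations_m):
        raise ValueError("labels and elevations must be co-registered (same length)")
    by_species: Dict[str, List[float]] = defaultdict(list)
    for label, z in zip(labels, elevations_m):
        by_species[label].append(z)
    result: Dict[str, Zonation] = {}
    for label, zs in by_species.items():
        # Assign each pixel to the bin whose lower edge is at or below its elevation.
        counts = Counter(math.floor(z / bin_m) * bin_m for z in zs)
        result[label] = Zonation(min(zs), median(zs), max(zs), dict(sorted(counts.items())))
    return result


# Hypothetical two-species sample (elevations in meters).
labels = ["species_a"] * 4 + ["species_b"] * 3
elevations = [0.10, 0.20, 0.30, 0.15, 0.60, 0.70, 0.55]
for name, zone in elevation_zonation(labels, elevations).items():
    print(name, zone.low_m, zone.high_m, zone.bins)
# species_a 0.1 0.3 {0.0: 3, 0.25: 1}
# species_b 0.55 0.7 {0.5: 3}
```

Comparing the per-bin counts across species is one simple way to see whether mapped assemblages separate along the elevation gradient, which is the kind of relationship a suitability analysis would build on.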

Improving the Process

To keep pace with mapping complex coastal environments, JALBTCX partnered with Optech International and the University of Southern Mississippi to develop the next-generation airborne coastal mapping and charting system: the Coastal Zone Mapping and Imaging Lidar (CZMIL) system. Scheduled for deployment later this year, the system is designed for high performance in the near-shore environment. CZMIL is expected to enhance image fusion and the 3-D environmental characterization of the beach, water column and seafloor.

Primary improvements include simultaneous topographic/bathymetric survey capability; increased spatial resolution and accuracy of the LiDAR point cloud, water column properties and bottom characterization; better target detection performance; improved depth measurement capability over a wider range of water turbidity conditions and in shallow water environments; reduced processing time; and improved characterization of the seafloor, beach and water column. New systems like CZMIL and the continued evolution of image fusion processing for coastal environmental and engineering applications will provide advanced airborne image and LiDAR elevation solutions to meet the requirements of USACE and JALBTCX partners, as well as the broader coastal scientific community.