By Brian Rohde, senior product manager, DigitalGlobe (www.digitalglobe.com), Longmont, Colo.

As the use of satellite and aerial imagery continues to expand across a host of applications, the mechanisms to deliver and distribute such data have become critically important to users and providers. Aligning these dissemination tools with cloud hosting and computing is a natural fit that can make remote sensing data more accessible to users.

Improving Data Timeliness

Remotely sensed data are used increasingly for a variety of purposes, including defense and intelligence, humanitarian and disaster relief, location-based services and environmental monitoring. Because of the unique benefits remotely sensed data bring to each of these applications, government and commercial demand for imagery products has increased significantly during the last decade. This increase has led to related technology investments by government and organizational users as well as commercial data providers.

Satellite imagery users now can monitor any place in the world from the convenience of their office or home. This significantly improves overall efficiencies by reducing or eliminating the need for regular on-site visits, often allowing users to access content within hours of its acquisition. From defense and intelligence to commercial interests, this timeliness facilitates rapid and informed decision making.

To appreciate the advantages of such efficiencies, imagine the scenario of a world without the Internet. In this scenario, the volcano Mount Pinatubo, located in the Philippines, suddenly erupts. Within minutes, a satellite imagery provider tasks its constellation and, within the hour, collects the first images of the volcanic event.

Having received multiple orders from humanitarian and relief organizations, the company produces the images, burns them to DVDs and sends them that afternoon to Manila via an air courier service. The DVDs are held in Tokyo because all flights to Manila are canceled due to poor visibility caused by an ash cloud pervading much of the Philippines. Two days later, humanitarian relief organizations receive the DVDs, which finally were delivered from Tokyo to Manila by ship.

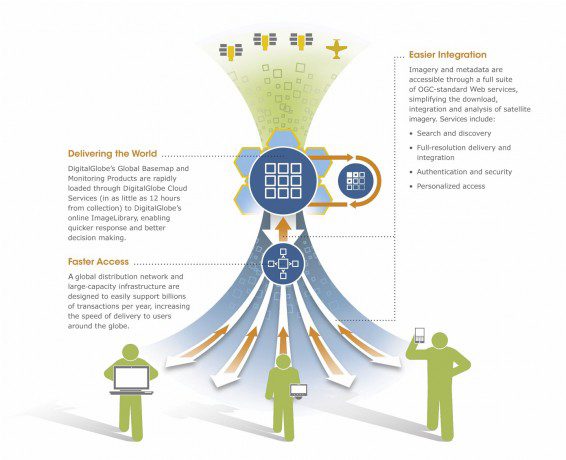

With an extensive suite of OGC-compatible Web services combined with powerful hosting infrastructure, DigitalGlobe Cloud Services users gain access to current, high-resolution imagery and geospatial information from desktops, portals, intranets and mobile devices around the world.

Fortunately, we no longer live in such a world. As the 2011 tsunami and subsequent nuclear disaster in Japan showed, information now can be shared and disseminated within minutes of a disaster. In this real-world example, DigitalGlobe imagery of the Fukushima Daiichi plant, collected just minutes before and after the first explosion, was made available online within hours of the event. The content then was available to emergency responders and decision makers on demand.

Enabling Effective Distribution

To ensure effective access to content when and where users need it, satellite imagery providers such as DigitalGlobe have used the Web and cloud-based services to deliver such content globally. These new distribution frameworks dramatically reduce customers' total cost of ownership. Now, instead of investing in a data center or waiting for physical media to be delivered, and then struggling with how to share data among users who may not be in physical proximity, users can simply make a request to a cloud-based Web service, which will respond with the image product within seconds.

Open Geospatial Consortium (OGC) open standards form the foundation of DigitalGlobe's cloud-based remote sensing Web services. Only by having a well-adopted, uniform set of services and protocols can users expect to integrate services into existing geospatial applications and can providers address customer integrations en masse. Indeed, open standards allow a single Web service to be natively interoperable with hundreds of geospatial applications. Web services can be hosted in industry-standard cloud platforms directly, or they can be Image as a Service solutions in which pixels are hosted on the cloud and referenced by Web services at the customer's or provider's environment.
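To make the idea of an OGC request concrete, the following is a minimal sketch of how a client might assemble a Web Map Service (WMS) 1.3.0 GetMap request. The parameter names come from the WMS standard; the endpoint URL, the layer name and the helper function `build_getmap_url` are hypothetical and do not describe any particular provider's service.

```python
from urllib.parse import urlencode


def build_getmap_url(base_url, layer, bbox, width, height,
                     crs="CRS:84", image_format="image/png"):
    """Build a WMS 1.3.0 GetMap request URL.

    bbox is (min_lon, min_lat, max_lon, max_lat). CRS:84 uses
    longitude/latitude axis order, which avoids the latitude-first
    ordering that WMS 1.3.0 applies to EPSG:4326.
    """
    params = {
        "SERVICE": "WMS",
        "VERSION": "1.3.0",
        "REQUEST": "GetMap",
        "LAYERS": layer,
        "STYLES": "",          # empty value requests the default style
        "CRS": crs,
        "BBOX": ",".join(str(value) for value in bbox),
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": image_format,
    }
    return base_url + "?" + urlencode(params)


# Hypothetical endpoint and layer name, used only for illustration.
url = build_getmap_url(
    "https://imagery.example.com/wms",
    "hypothetical_layer",
    (120.0, 14.5, 121.0, 15.5),
    512,
    512,
)
print(url)
```

Because the request is an ordinary URL, any application that understands the standard can issue it, and changing the `CRS` or `FORMAT` value asks the service for a different projection or file format without any change on the client side.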

By making the imagery product once and disseminating it through cloud-based Web services, providers can leverage the cloud to fulfill multiple customer commitments at once. Cloud computing allows imagery to be processed in real time, exactly in accordance with each customer's request. Image customization, including formatting, mosaicking, projection and layering, can be completed in seconds.

Understanding Common Cloud Practices

What does it mean to store imagery in the cloud? In this context, there are two primary approaches to using the cloud.

The most common use is to store individual images and their metadata in the cloud. This is similar to the commonplace practice of most Content Delivery Networks (CDNs): each image is simply a file. Any requesting application can reference the requested file, which is returned by the CDN. These files usually are replicated at multiple nodes or sites around the world, thus improving data availability and reliability as well as response times for users who are in bandwidth-disadvantaged regions. This approach of replicating the files to a multitude of locations can enable response times of less than one second and data and system availability approaching 100 percent.
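A short calculation shows why replication pushes availability toward 100 percent. The sketch below assumes that sites fail independently of one another, which is a simplification, and the 99 percent per-site figure is an illustrative value rather than a measured one.

```python
def combined_availability(single_site, replicas):
    """Probability that at least one replica is reachable.

    Assumes each site is available with probability `single_site`
    and that site failures are independent of one another.
    """
    return 1.0 - (1.0 - single_site) ** replicas


# Illustrative per-site availability of 99 percent.
for replica_count in (1, 2, 3):
    availability = combined_availability(0.99, replica_count)
    print(replica_count, f"{availability:.6f}")
```

Under these assumptions, each added site multiplies the chance of a total outage by the per-site failure rate, so three sites at 99 percent each are jointly unavailable only about one time in a million.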

The end-user application can access any of these files by passing appropriate credentials along with the request for the file. The data's owner can manage access to this content on a granular level. For example, while a global set of content can be available on the cloud, one user might be allowed to access certain content only of Mexico while another is able to access all content globally. This allows content providers to manage and license their data as carefully as if the content were being delivered via physical media.
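One way such granular control could work is to compare the area a user requests with the area the user has licensed. The following is a minimal sketch under that assumption; the user names, the `LICENSES` table and the rough bounding box for Mexico are all hypothetical, and a production system would likely use true country outlines rather than rectangles.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BBox:
    """Geographic rectangle in decimal degrees."""
    min_lon: float
    min_lat: float
    max_lon: float
    max_lat: float

    def contains(self, other: "BBox") -> bool:
        """Return True if `other` lies entirely inside this box."""
        return (self.min_lon <= other.min_lon
                and self.min_lat <= other.min_lat
                and self.max_lon >= other.max_lon
                and self.max_lat >= other.max_lat)


GLOBAL = BBox(-180.0, -90.0, 180.0, 90.0)
MEXICO_APPROX = BBox(-118.5, 14.5, -86.5, 32.8)  # rough, illustrative only

# Hypothetical license table: user name -> licensed area.
LICENSES = {
    "user_mexico": MEXICO_APPROX,
    "user_global": GLOBAL,
}


def is_authorized(user, requested):
    """Allow a request only if it falls inside the user's licensed area."""
    licensed = LICENSES.get(user)
    return licensed is not None and licensed.contains(requested)


mexico_city = BBox(-99.4, 19.0, -98.9, 19.6)
tokyo = BBox(139.5, 35.5, 140.0, 35.9)
print(is_authorized("user_mexico", mexico_city))  # inside the licensed area
print(is_authorized("user_mexico", tokyo))        # outside the licensed area
print(is_authorized("user_global", tokyo))        # global license
```

The same global archive serves both users; only the license lookup differs.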

A second approach to cloud use includes image-processing capabilities. In this scenario, the images and metadata are again stored in the cloud. Additionally, computing resources are made available in the cloud, usually in close proximity to the data to reduce latency (time delay). Those resources can be part of a vendor's own data center or purchased from many of the commercially available cloud providers.

DigitalGlobe Cloud Services users can securely monitor any place in the world from the convenience of their home or office.

By adding computing capabilities, the files can be processed per the user's request, including capabilities such as reformatting, reprojection, custom image selection and algorithm processing. This addition to the image files offers users a robust set of features and capabilities while maintaining high availability and low latency. For the vendor, the use of elastic computing resources, which can be purchased in tiers or based on actual usage, helps absorb significant usage increases without performance degradation.

Improving Data Security

Just as the banking industry has leveraged technologies and practices to make online access secure, so too have remote sensing providers. Several methods are available to ensure data security and privacy, and remote sensing providers often implement some or all of these best practices. By implementing rigorous authentication and authorization procedures, vendors can be assured that only licensed users can access content. This is done through dynamic credentials, time-limited application tokens, public-key infrastructure, system monitoring and alerting, and more. By encrypting requests and responses via a Secure Sockets Layer (SSL) connection, customers and vendors alike can be assured that their activities, information and imagery are secure.
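As an illustration of a time-limited application token, the sketch below signs a user identifier and an expiry time with a keyed hash (HMAC-SHA256) from Python's standard library. It is one common pattern, not a description of any vendor's scheme; the token layout, the function names and the secret key are assumptions made for the example.

```python
import hashlib
import hmac
import time

# Hypothetical shared secret; a real deployment would load this securely.
SECRET_KEY = b"replace-with-a-real-secret"


def issue_token(user_id, ttl_seconds, now=None):
    """Return a token of the form 'user:expiry:signature'."""
    now = time.time() if now is None else now
    expires = int(now) + ttl_seconds
    payload = f"{user_id}:{expires}"
    signature = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}:{signature}"


def verify_token(token, now=None):
    """Return the user id if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        user_id, expires_text, signature = token.rsplit(":", 2)
        expires = int(expires_text)
    except ValueError:
        return None  # malformed token
    payload = f"{user_id}:{expires_text}"
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature details through timing.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None
    if now >= expires:
        return None  # token has expired
    return user_id


token = issue_token("alice", ttl_seconds=60, now=1000)
print(verify_token(token, now=1030))  # still valid
print(verify_token(token, now=1060))  # expired
```

Because the expiry time is covered by the signature, a user cannot extend a token's life by editing it, and a stolen token stops working once it expires.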

Security can be hardened even further through the use of private clouds. Such clouds are built for specific departments and users. In addition to the standard cloud-based security mechanisms mentioned above, these private clouds can limit access to only certain networks and regions and can include custom authentication mechanisms.
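Limiting access to certain networks can be as simple as checking each client address against a list of approved address ranges. The following minimal sketch uses Python's standard `ipaddress` module; the ranges in `ALLOWED_NETWORKS` are placeholder values chosen for illustration.

```python
import ipaddress

# Placeholder ranges; a private cloud would list its own approved networks.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("10.20.0.0/16"),
    ipaddress.ip_network("192.0.2.0/24"),
]


def is_allowed(client_ip):
    """Return True if the client address falls inside an approved network."""
    try:
        address = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # not a valid IP address
    return any(address in network for network in ALLOWED_NETWORKS)


print(is_allowed("10.20.5.7"))     # inside the first range
print(is_allowed("198.51.100.1"))  # outside both ranges
```

Such a check typically runs in addition to, not instead of, the authentication and encryption measures described above.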

Expanding Cost Benefits

Storing geospatial content replicated across multiple sites once was considered prohibitively expensive because of imagery's large file sizes, but those costs continue to fall. The progressively decreasing cost of storage and computing resources now means that terabytes and petabytes of data can be replicated across multiple sites for a fraction of what it cost five years ago.

Considering continually decreasing costs alongside the sundry benefits to data consumers and providers, storing and processing imagery in the cloud never has been more attractive. Cloud services are transforming the remote sensing industry as they continue to evolve together. The advantages to geospatial data consumers and providers are significant and ensure a continued focus on the geospatial cloud's evolution.