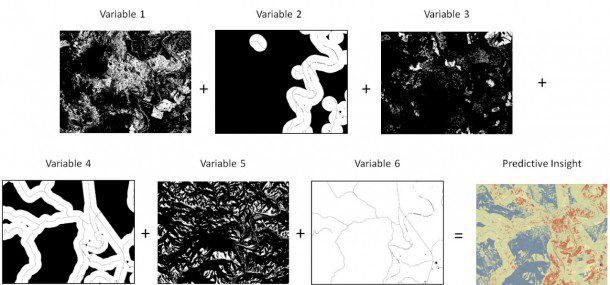

Tremendous value and insights are achieved when all available data (raster, vector, numerical data and even subjective cultural aspects) are fused together into models that can identify spatial patterns.

By Greg Hammann, Amanda Marchetti and Kumar Navulur, DigitalGlobe (www.digitalglobe.com), Longmont, Colo.

Earth observation sensors are proliferating. Electro-optical, radar, light detection and ranging (LiDAR), sonar and other sensors are being used from a variety of platforms: aerial, sea, space and terrestrial. Data fusion allows the combined power of different sensors to provide increased insight by leveraging different capabilities.

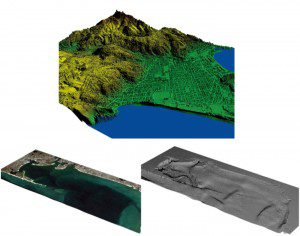

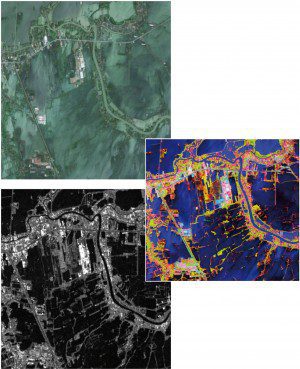

Fusing together many datasets, such as aerial LiDAR (top), electro-optical imagery (left) and sonar (right), allows users to develop new ways to analyze a situation or event that aren't possible with one sensor alone.

Data Sources

Ever since French balloonist Gaspard-Félix Tournachon took his first aerial photograph in 1858, a massive amount of information has been collected about Earth's surface and human activity. Now aerial and satellite electro-optical sensors collect a variety of data, from panchromatic, red-green-blue (RGB) natural color and multispectral to hyperspectral imagery. Data are collected at multiple spatial resolutions (pixel sizes) from unmanned aircraft systems (UASs), manned aircraft and satellites.

These sensors collectively capture billions of square kilometers of imagery every year, imaging the planet many times over. The data are used to derive information that tells us about the natural and human activities occurring on Earth such as changes to vegetative health, water quality, soil types and conditions, geology, human infrastructure, settlement locations and more.

Electro-optical sensors passively detect the energy from the sun reflected by materials on Earth's surface. Such sensors can only capture images during the day under clear skies. Spaceborne imaging radars, such as synthetic aperture radar (SAR) and interferometric synthetic aperture radar (IfSAR), capture different information about Earth based more on a material's physical characteristics: moisture content, dielectric properties, surface roughness, angularity, linearity, etc. SAR instruments can collect data day and night under most weather conditions because they're active sensors, sending out pulses of radio energy and measuring the return time for each pulse.

LiDAR and sonar function similarly, but they transmit and receive different kinds of energy. LiDAR instruments transmit beams of focused light and are mounted on planes and land vehicles. Such instruments have become popular in recent years to generate 3-D building models, map building interiors, create elevation models and extract details about canopy structures. Sonar and echosounders send out pulses of sound and are used to derive details about the water column, the ocean bottom and sea floor topography.
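The shared principle behind these active sensors can be shown with a short calculation. The sketch below converts a two-way travel time into a range, assuming a straight out-and-back path; the function name, the example timings and the nominal 1,500 m/s speed of sound in seawater are illustrative assumptions, not values from any particular instrument.

```python
# Two-way travel time to range for active sensors (illustrative values only).

SPEED_OF_LIGHT_M_S = 299_792_458.0    # radar and LiDAR pulses (vacuum value)
SPEED_OF_SOUND_SEAWATER_M_S = 1500.0  # assumed nominal value; varies with
                                      # temperature, salinity and depth


def range_from_echo(two_way_time_s: float, speed_m_s: float) -> float:
    """Distance to the reflector: the pulse travels out and back, so halve the path."""
    if two_way_time_s < 0:
        raise ValueError("travel time must be non-negative")
    return speed_m_s * two_way_time_s / 2.0


# Hypothetical LiDAR return arriving 10 microseconds after the pulse left
lidar_range_m = range_from_echo(10e-6, SPEED_OF_LIGHT_M_S)

# Hypothetical sonar echo arriving 4 seconds after the ping
sonar_depth_m = range_from_echo(4.0, SPEED_OF_SOUND_SEAWATER_M_S)

print(f"LiDAR range: {lidar_range_m:.1f} m")
print(f"Sonar depth: {sonar_depth_m:.1f} m")
```

Real systems apply further corrections (atmospheric or water-column effects, sensor geometry), so this is only the first-order relationship.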

Over time, each of these sensors can be used to map and monitor landscape changes, enabling change and anomaly detection and providing valuable insight for decision making. With these sensor systems collecting billions of observations every minute, geospatial data are now part of the big data paradigm. The industry is beginning to come to terms with how to extract insight from this vast volume of data in a smarter way, through multisource data fusion and by merging such data with nonimage data. Fusing many datasets together in meaningful ways opens up new and innovative ways of analyzing a situation or event that aren't possible with one sensor alone.

Fusing Data from Multiple Sensors

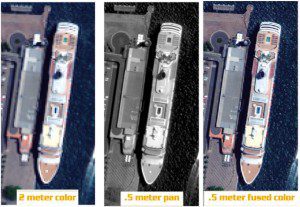

Pan sharpening involves fusing a low-resolution color image (left) with a higher-resolution panchromatic image (middle) to create a higher-resolution color image (right).

Data fusion brings two or more datasets together to create new data layers that optimize the benefits of individual sensors. In many cases, the sensors complement each other by filling in information gaps. As shown in the accompanying figure, a common data fusion example is pan sharpening electro-optical imagery, a process that fuses color imagery collected at a lower spatial resolution with panchromatic imagery collected at a higher spatial resolution to produce a detailed, high-spatial-resolution color image.
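Many pan-sharpening methods exist, and the article does not say which one produced the example. As a minimal sketch, the code below uses a Brovey-style ratio: each color band is resampled to the panchromatic grid and scaled by the ratio of the panchromatic value to the mean of the color bands. It assumes co-registered inputs and an integer resolution factor; the function names and the tiny sample arrays are hypothetical.

```python
def upsample_nearest(band, factor):
    """Repeat each pixel factor x factor times (nearest-neighbor resampling)."""
    out = []
    for row in band:
        wide = [v for v in row for _ in range(factor)]
        out.extend([list(wide) for _ in range(factor)])
    return out


def brovey_pansharpen(red, green, blue, pan, factor):
    """Scale each upsampled color band by pan / mean(color bands), per pixel."""
    r, g, b = (upsample_nearest(band, factor) for band in (red, green, blue))
    sharp = ([], [], [])
    for y, pan_row in enumerate(pan):
        rows = ([], [], [])
        for x, p in enumerate(pan_row):
            intensity = (r[y][x] + g[y][x] + b[y][x]) / 3.0
            ratio = p / intensity if intensity > 0 else 0.0
            for out_row, band in zip(rows, (r, g, b)):
                out_row.append(band[y][x] * ratio)
        for out_band, row in zip(sharp, rows):
            out_band.append(row)
    return sharp


# Hypothetical 1x2 color image and 2x4 panchromatic image (factor of 2)
red, green, blue = [[90, 30]], [[60, 60]], [[30, 90]]
pan = [[60, 120, 30, 60],
       [60, 60, 60, 90]]

sharp_r, sharp_g, sharp_b = brovey_pansharpen(red, green, blue, pan, factor=2)
for row in sharp_r:
    print(row)
```

With this scaling, the mean of the three sharpened bands at each pixel equals the panchromatic value, so spatial detail comes from the panchromatic image while the band ratios (the color) come from the lower-resolution image.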

In addition, given the different phenomenology of the visible and radar portions of the electromagnetic spectrum, striking visualizations and analyses can be derived by fusing electro-optical and SAR imagery. Transformations, such as principal component analysis, and derived bands like texture measures can be added to the mix. For example, the GeoEye-1 and TerraSAR-X images below were collected over Ayutthaya, Thailand, during a flood in 2011.

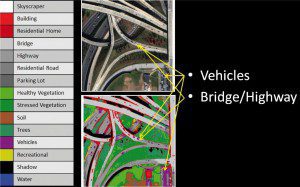
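The caption for the Ayutthaya figure describes a simple fusion product: TerraSAR-X in the red channel, the GeoEye-1 near-infrared band in green and the GeoEye-1 red band in blue. A composite of that kind can be sketched as follows, assuming the three bands are already co-registered on the same grid. The linear min-max stretch and the sample pixel values are assumptions made for illustration, not the processing actually applied to the published image.

```python
def stretch_to_byte(band):
    """Linear min-max stretch of one band to the 0-255 display range."""
    values = [v for row in band for v in row]
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        return [[0 for _ in row] for row in band]
    return [[round(255 * (v - lo) / span) for v in row] for row in band]


def false_color_composite(sar, nir, red):
    """Assign SAR -> R, near-infrared -> G, optical red -> B, pixel by pixel."""
    r, g, b = stretch_to_byte(sar), stretch_to_byte(nir), stretch_to_byte(red)
    return [
        [(r[y][x], g[y][x], b[y][x]) for x in range(len(r[0]))]
        for y in range(len(r))
    ]


# Hypothetical 2x2 scene: the left column stands in for open water
sar_backscatter_db = [[-20.0, -5.0], [-18.0, -8.0]]
nir_reflectance = [[0.05, 0.40], [0.08, 0.30]]
red_reflectance = [[0.04, 0.10], [0.05, 0.12]]

composite = false_color_composite(sar_backscatter_db, nir_reflectance, red_reflectance)
for row in composite:
    print(row)
```

Calm open water tends to return little radar energy and reflects little near-infrared light, so in a composite built this way flooded pixels come out dark while vegetation and built-up areas take on brighter, more saturated colors.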

Land Cover Classification

Data fusion is an important tool for land cover classification and feature extraction. To classify an image, the most accurate approach requires training the algorithm as to which pixels represent specific materials on the ground. Imagery sources and corresponding derivatives are used to help the algorithm learn even the differences among similar materials.

Advanced algorithms and machine-learning systems are used for data mining and can find such differentiators. The key is having the most data available to allow for the greatest probability of an accurate classification. One of the accompanying figures, for example, shows a product that resulted from fusing a digital surface model with electro-optical imagery.
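To illustrate why an extra data layer helps, the sketch below trains a deliberately simple nearest-centroid classifier twice: once on two spectral features alone and once with a height feature derived from a digital surface model added. The classifier choice, the class names and every number are hypothetical, and the features are assumed to be pre-scaled to comparable 0-1 ranges; operational systems use far richer algorithms and training sets.

```python
import math


def centroids(samples):
    """Mean feature vector per class from labeled training pixels."""
    sums, counts = {}, {}
    for label, features in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, v in enumerate(features):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def classify(features, class_centroids):
    """Assign the class whose centroid is nearest in feature space."""
    return min(class_centroids,
               key=lambda label: math.dist(features, class_centroids[label]))


# Hypothetical training pixels: (red, near-infrared, normalized height)
training = [
    ("road", [0.10, 0.12, 0.00]),
    ("road", [0.12, 0.14, 0.02]),
    ("roof", [0.11, 0.13, 0.60]),
    ("roof", [0.13, 0.15, 0.70]),
    ("tree", [0.05, 0.50, 0.80]),
    ("tree", [0.04, 0.45, 0.60]),
]
spectral_only = [(label, f[:2]) for label, f in training]

# A dark rooftop pixel: spectrally like asphalt, but well above ground level
pixel = [0.11, 0.13, 0.65]

label_spectral = classify(pixel[:2], centroids(spectral_only))
label_fused = classify(pixel, centroids(training))
print("spectral only:", label_spectral)
print("spectral + height:", label_fused)
```

In this toy case the spectral bands alone cannot separate a dark roof from a road, while the fused feature vector can, because the height layer supplies the differentiator.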

Compared with using a single data source, data fusion of many data sources typically increases the classification accuracy. A fascinating example is found in the work by Dr. Douglas Comer, president of Cultural Site Research and Management Inc., who is working on an inventory of archaeological sites. Aerial and satellite images are fused to identify sites that are hidden from view and save them from potential destruction from future human activities.

Crowdsourcing

GeoEye-1 and TerraSAR-X images (top and bottom, respectively) show widespread 2011 flooding in Ayutthaya, Thailand. The end result shows a simple fusion product (inset), using TerraSAR-X for the red channel, the GeoEye-1 near-infrared band in the green channel and the GeoEye-1 red band for the blue channel in a false-color RGB composite.

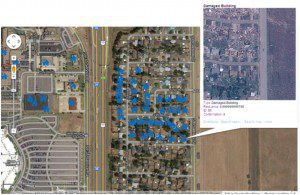

A recent trend in the geospatial industry is crowdsourcing, where citizens across the globe contribute local geospatial information. Crowdsourcing has been employed by various commercial organizations and governments to gather geospatial data at an unprecedented rate. Typical crowdsourcing applications include extracting road networks, identifying and annotating points of interest, assessing post-disaster damage and identifying specific features in large collections of images.

An image below shows a recent example of information submitted by the public: structures damaged during the tornado in Moore, Okla., on May 20, 2013. One of the major challenges of remote sensing is the need to rapidly analyze imagery and geospatial information. Crowdsourcing often is used to collect on-the-ground validation and to accelerate geospatial analysis as long as there are ways to rank the reliability of the submittals. For example, DigitalGlobe's Tomnod crowdsourcing systems (www.tomnod.com) offer geospatial analytics products that interpret terabytes of satellite images to deliver precision-located information.
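One plausible way to rank the reliability of submittals is to score each contributor against a few tiles whose correct answer is already known, then weight that contributor's votes accordingly. The sketch below shows the idea; it is not a description of how Tomnod works, and the contributor names, tile identifiers and labels are all hypothetical.

```python
from collections import defaultdict


def contributor_reliability(control_answers, truth):
    """Fraction of known control tiles each contributor tagged correctly."""
    reliability = {}
    for user, answers in control_answers.items():
        scored = [tile for tile in answers if tile in truth]
        correct = sum(answers[tile] == truth[tile] for tile in scored)
        reliability[user] = correct / len(scored) if scored else 0.0
    return reliability


def weighted_consensus(tags, reliability):
    """Per tile, pick the label with the highest reliability-weighted vote share."""
    votes = defaultdict(lambda: defaultdict(float))
    for user, tile, label in tags:
        votes[tile][label] += reliability.get(user, 0.0)
    result = {}
    for tile, by_label in votes.items():
        total = sum(by_label.values())
        label = max(by_label, key=by_label.get)
        result[tile] = (label, by_label[label] / total if total else 0.0)
    return result


# Hypothetical control tiles with known answers
truth = {"c1": "damaged", "c2": "intact"}
control_answers = {
    "ana": {"c1": "damaged", "c2": "intact"},
    "ben": {"c1": "damaged", "c2": "damaged"},
    "cy": {"c1": "intact", "c2": "damaged"},
}
reliability = contributor_reliability(control_answers, truth)

# Hypothetical tags on an unverified tile: two of three contributors say "intact"
tags = [("ana", "t7", "damaged"), ("ben", "t7", "intact"), ("cy", "t7", "intact")]
consensus = weighted_consensus(tags, reliability)
print(reliability)
print(consensus)
```

Here a simple majority would label tile `t7` as intact, but the weighted vote sides with the one contributor who has a perfect record on the control tiles. The vote share returned alongside the label gives analysts a confidence value for deciding which tiles to review first.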

Predictive Analytics

Data fusion from all available sources is critical to predictive analytics. A lot of insight is hidden within large datasets, so big data analyses can help ask and answer questions. Data-mining and machine-learning algorithms are important tools to determine patterns embedded in the data. Most animal and human activity has a geospatial component that can be modeled once the patterns are revealed. This method has been used to better understand animal movement patterns; predict where crimes are likely to occur; and optimize the use of valuable resources, such as game wardens or police officers.

A digital surface model can be fused with a color satellite image to help classify manmade and natural materials.

Insight from predictive analytics draws on all-source geospatial intelligence, which combines geospatial raster and vector layers with knowledge of past events and an understanding of related cultural and human factors to model and predict the next event. The bottom image below is an example of a predictive model that combines variables, such as proximity to water, road infrastructure, soil conditions, terrain and surrounding vegetation, to predict the best areas for helicopter landings during forest fires. Instead of manually reviewing and interpreting all that information, field personnel can read the results from a heat map. Predictive analytics provides valuable, actionable intelligence on where an event was observed in the past and is likely to occur in the future.
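The article does not say how the helicopter-landing model combines its variables. One simple possibility is a weighted overlay, sketched below: each raster layer is rescaled to 0-1 so that higher always means more suitable, and the layers are then blended cell by cell into a single score that can be rendered as a heat map. The three layers, their values and the weights are hypothetical.

```python
def normalize(layer, higher_is_better=True):
    """Rescale a raster layer to 0-1; flip it when low raw values are preferable."""
    values = [v for row in layer for v in row]
    lo, hi = min(values), max(values)
    span = hi - lo
    scaled = [[(v - lo) / span if span else 0.0 for v in row] for row in layer]
    if higher_is_better:
        return scaled
    return [[1.0 - v for v in row] for row in scaled]


def weighted_overlay(layers, weights):
    """Cell-by-cell weighted average of normalized layers."""
    total = sum(weights[name] for name in layers)
    first = next(iter(layers.values()))
    return [
        [sum(weights[name] * layers[name][y][x] for name in layers) / total
         for x in range(len(first[0]))]
        for y in range(len(first))
    ]


# Hypothetical 2x2 rasters; for all three, lower raw values suit a landing better
slope_deg = [[2, 25], [5, 40]]
dist_to_water_m = [[100, 900], [300, 2000]]
canopy_cover = [[0.1, 0.8], [0.2, 0.9]]

layers = {
    "terrain": normalize(slope_deg, higher_is_better=False),
    "water": normalize(dist_to_water_m, higher_is_better=False),
    "vegetation": normalize(canopy_cover, higher_is_better=False),
}
weights = {"terrain": 0.5, "water": 0.2, "vegetation": 0.3}  # assumed weights

heat = weighted_overlay(layers, weights)
for row in heat:
    print([round(v, 2) for v in row])
```

Scores near 1 mark the most suitable cells and scores near 0 the least suitable. In practice the weights would come from expert judgment or be learned from records of past events rather than chosen by hand.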

Ongoing Advances

Homes damaged by a 2013 tornado in Oklahoma were identified using the Tomnod crowdsourcing system fused with pre- and post-event satellite imagery.

In short, data fusion, big data modeling, crowdsourcing and predictive analytics are being adopted quickly by the geospatial community, and new infrastructure and tools continue to be developed to support them. Expect to see innovative sensors on satellite, airborne, UAS and terrestrial platforms come to market; continued improvements in big data processing and analytics; and activity-based intelligence tools accessible in the cloud to further enhance tradecraft in the near future.