By Thomas Blaschke, Centre for Geoinformatics, University of Salzburg, Salzburg, Austria, and Bernd Resch, Research Studio iSPACE, Research Studios Austria, Salzburg.

Cities are complex systems composed of many interacting components that evolve over multiple spatio-temporal scales. Consequently, no single data source can satisfy the information needs required to map, monitor, model, and ultimately understand and manage our interaction within such urban systems.

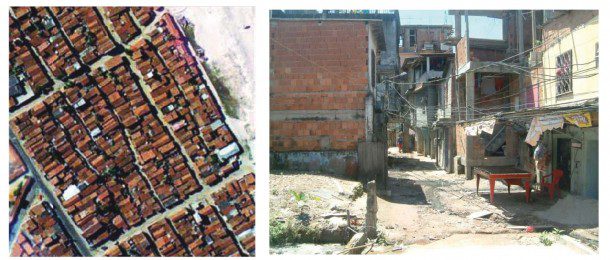

Aerial and satellite images reveal how cities change over time (in this case, informal settlements in Fortaleza, Brazil), but a ground inspection reveals many structural and social changes that aren't depicted in nadir optical images.

Remote sensing provides a key data source for mapping such environments. However, understanding them more fully also requires a variety of related geospatial technologies, such as object-based image analysis and geographic information system (GIS) technology, as well as in-situ measurement systems, sensor webs and a holistic integration of these technologies.

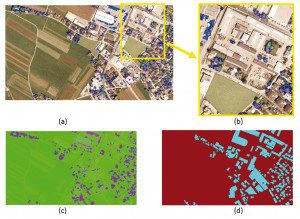

LiDAR and optical data are combined in many applications. In this case, (a) illustrates a DigitalGlobe QuickBird image of Salzburg overlaid with polygons representing tall trees located close to buildings that interfere with the extraction of building surface models from LiDAR data (not displayed here), (b) zooms to the problematic areas for visual inspection or subsequent image analysis steps, (c) shows an NDVI mask of tall trees derived from the QuickBird image, and (d) reveals the resulting building mask in 2-D.
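The workflow in this caption can be illustrated with a minimal sketch. It assumes that a near-infrared band, a red band and a LiDAR-derived height raster are already co-registered on the same grid; the threshold values (`ndvi_min`, `height_min`) and the tiny sample rasters are hypothetical and are not taken from the Salzburg study.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index, (NIR - Red) / (NIR + Red)."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def tall_tree_mask(nir_band, red_band, height, ndvi_min=0.3, height_min=5.0):
    """True where a pixel is both vegetated and tall (thresholds are assumed)."""
    return [
        [ndvi(n, r) >= ndvi_min and h >= height_min
         for n, r, h in zip(nir_row, red_row, h_row)]
        for nir_row, red_row, h_row in zip(nir_band, red_band, height)
    ]


def building_mask(height, trees, height_min=5.0):
    """True where a pixel is elevated but was not flagged as a tall tree."""
    return [
        [h >= height_min and not t for h, t in zip(h_row, t_row)]
        for h_row, t_row in zip(height, trees)
    ]


# Hypothetical 2 x 2 rasters: reflectances in [0, 1], heights in meters.
nir = [[0.6, 0.2], [0.5, 0.3]]
red = [[0.1, 0.2], [0.1, 0.25]]
height = [[12.0, 9.0], [1.0, 0.0]]

trees = tall_tree_mask(nir, red, height)
buildings = building_mask(height, trees)
print(trees)
print(buildings)
```

In this toy example, the tall vegetated pixel is removed from the set of elevated pixels, so only the elevated, non-vegetated pixel remains in the building mask.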

Remote Sensing and the Urban Environment

The demand for timely urban mapping and monitoring has intensified due to increased access to high-resolution imagery and the need to better understand rapid urbanization and its accompanying environmental impacts. Satellite and airborne data analyses of urban landscapes include a wide variety of relevant applications, ranging from impervious surface mapping to urban change detection and classification.

Indeed, urban decision making increasingly requires land-use and land-cover maps generated from high-resolution data. For example, a remote sensing application that estimates population from the number of dwellings of different housing types in an urban environment usually requires a pixel size of about 0.25 to 5 meters if individual structures are to be identified.
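The dwelling-count approach to population estimation mentioned above comes down to simple arithmetic once dwellings have been counted per housing type. The sketch below is only an illustration: the housing types, the occupancy rates in `PERSONS_PER_DWELLING` and the counts are hypothetical values, not figures from any survey.

```python
# Hypothetical average occupancy per dwelling, by housing type.
PERSONS_PER_DWELLING = {
    "single_family": 2.5,
    "row_house": 2.0,
    "apartment_unit": 1.5,
}


def estimate_population(dwelling_counts, persons_per_dwelling):
    """Sum dwellings of each type multiplied by that type's assumed occupancy."""
    return sum(
        count * persons_per_dwelling[housing_type]
        for housing_type, count in dwelling_counts.items()
    )


# Hypothetical dwelling counts extracted from high-resolution imagery.
counts = {"single_family": 100, "row_house": 50, "apartment_unit": 200}
population = estimate_population(counts, PERSONS_PER_DWELLING)
print(population)
```

In practice, the occupancy rates would typically come from census or survey data for the same area.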

Optical imagery, however, isn't the only data resource available to planners. Light detection and ranging (LiDAR) data acquired by active sensors can provide detailed 3-D point clouds from which building structural information can be derived. Recent breakthroughs in LiDAR flight path planning that emphasize building facade data capture have greatly improved the potential for rapidly auto-generating 3-D building models.

Image Analysis

Although remote sensing technology has been applied widely in urban land-use/land-cover classification and change detection, it's rare that a classification accuracy of greater than 80 percent can be achieved using per-pixel classification algorithms, except in the case of homogeneous water bodies.

Despite many innovative approaches and technical progress in sub-pixel analysis, unsolved issues involving spectral confusion and mixed pixels have led to a paradigm shift in classification methods from per-pixel to object-based methods.

Rather than treating the image as a collection of pixels to be classified on their individual spectral properties, the image pixels can be initially grouped into segments, and the object segments then can be classified according to spectral and other criteria, such as shape, size and relationship to neighboring objects. Analyst-based contextual information and experience also can be incorporated with the use of digital rule sets, similar to those developed for decision-tree classifiers.
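The segment-then-classify idea described above can be sketched in a few lines. This is a deliberately simplified illustration rather than a production object-based image analysis workflow: segmentation here is plain 4-connected grouping of pixels above a brightness threshold, and the rule set in `classify` (area and elongation limits, class names) is hypothetical.

```python
from collections import deque


def segment(image, threshold):
    """Group 4-connected pixels at or above `threshold` into segments."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    segments = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or image[r][c] < threshold:
                continue
            queue, pixels = deque([(r, c)]), []
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and image[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            segments.append(pixels)
    return segments


def describe(pixels):
    """Compute simple shape features from a segment's bounding box."""
    ys = [y for y, _ in pixels]
    xs = [x for _, x in pixels]
    box_h = max(ys) - min(ys) + 1
    box_w = max(xs) - min(xs) + 1
    return {"area": len(pixels),
            "elongation": max(box_h, box_w) / min(box_h, box_w)}


def classify(features):
    """Hypothetical rule set using size and shape instead of spectra alone."""
    if features["area"] < 2:
        return "noise"
    if features["elongation"] >= 3:
        return "road"
    return "building"


# Hypothetical brightness raster: a compact block, a lone pixel, a long strip.
image = [
    [9, 9, 0, 0, 0, 0],
    [9, 9, 0, 0, 0, 7],
    [0, 0, 0, 0, 0, 0],
    [8, 8, 8, 8, 8, 0],
]
labels = [classify(describe(pixels)) for pixels in segment(image, threshold=5)]
print(labels)
```

All three objects are bright enough to pass the same spectral threshold; it is the shape and size rules that separate them, which is the point of classifying objects rather than individual pixels.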

GIS for Urban Analysis

Urban analysis requires converting remotely sensed imagery into tangible information for use with other data sets, often within GIS technology. Remotely sensed variables, GIS thematic layers and census data are three essential data sources for urban analyses. Because census data collected within spatial units can be stored as GIS attributes, census and remote sensing data can be combined within a GIS for numerous urban applications.

For example, remotely sensed imagery has been used to extract and update transportation networks and buildings, provide land use/cover data and biophysical attributes, and detect urban expansion. Census data have been used to improve image classification in urban areas. Moreover, remote sensing and census data have been integrated to estimate population and residential density, assess socioeconomic conditions and evaluate quality of life.
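As a small illustration of how census and remote sensing data might be combined, the sketch below estimates residential density per census unit by dividing each unit's census population by the residential area mapped from a classified image. The tract identifiers, land-cover labels, populations and the 50-meter pixel size are all hypothetical.

```python
def residential_density(tract_ids, landcover, population, pixel_size_m):
    """Return persons per hectare of residential land for each census tract."""
    pixel_area_ha = pixel_size_m * pixel_size_m / 10000.0
    residential_pixels = {tract: 0 for tract in population}
    for id_row, cover_row in zip(tract_ids, landcover):
        for tract, cover in zip(id_row, cover_row):
            if cover == "residential" and tract in residential_pixels:
                residential_pixels[tract] += 1
    density = {}
    for tract, persons in population.items():
        area_ha = residential_pixels[tract] * pixel_area_ha
        # None marks tracts where no residential land was mapped.
        density[tract] = persons / area_ha if area_ha > 0 else None
    return density


# Hypothetical rasters on a shared grid: tract membership and land cover.
tract_ids = [["A", "A", "B"],
             ["A", "B", "B"]]
landcover = [["residential", "water", "residential"],
             ["residential", "residential", "road"]]
census_population = {"A": 100, "B": 50}

density = residential_density(tract_ids, landcover, census_population, 50)
print(density)
```

The census unit supplies the attribute (population) and the imagery supplies the denominator (residential area), which mirrors the GIS-attribute approach described above.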

The Home Energy Assessment Technologies project (www.wasteheat.ca) uses ITRES high-resolution Thermal Airborne Broadband Imager data and geospatial analysis for home waste-heat monitoring.

In-situ Measurement Systems and Sensor Webs

With the advent of widely applied Open Geospatial Consortium (OGC) standards, there's now an opportunity for tighter integration of imagery and in-situ measurements. For example, during the last decade, sensor webs, which gather measured data and combine them into an overall result, have become a rapidly emerging and increasingly ubiquitous technology for monitoring urban systems.

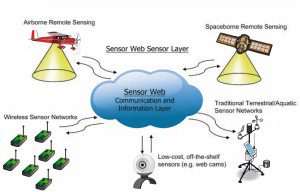

In contrast to typical sensor networks, sensor webs have three critical characteristics: interoperability, which means different types of sensors should be able to communicate with each other and produce a common output; scalability, which implies that new sensors can be easily added to an existing topology without changing the hardware and software infrastructure; and intelligence, which means the sensors can act autonomously to a certain degree, e.g., sending only a filtered subset of the total data as required by the user. These properties of geo-sensor webs are the basis for creating an Earth-spanning sensor network for continuous monitoring.
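The three characteristics can be made concrete with a toy model. The sketch below is not an implementation of any OGC Sensor Web Enablement interface; the `Observation` record, the `SensorWeb` class, the sensor identifiers and the readings are all hypothetical. As a simplification, filtering is applied when observations are collected rather than on the sensors themselves.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Observation:
    """Common output record shared by every sensor type (interoperability)."""
    sensor_id: str
    observed_property: str
    value: float
    unit: str


class SensorWeb:
    def __init__(self) -> None:
        self._sensors: Dict[str, Callable[[], Observation]] = {}

    def register(self, sensor_id: str, read: Callable[[], Observation]) -> None:
        """Add a sensor without touching existing ones (scalability)."""
        self._sensors[sensor_id] = read

    def collect(self, keep: Callable[[Observation], bool] = lambda obs: True) -> List[Observation]:
        """Return only the observations the user asked for (filtering)."""
        return [obs for obs in (read() for read in self._sensors.values()) if keep(obs)]


def fahrenheit_thermometer() -> Observation:
    # Adapter: the device reports Fahrenheit; the web's common unit is Celsius.
    reading_f = 68.0
    return Observation("temp-1", "air_temperature", (reading_f - 32) * 5 / 9, "degC")


def no2_monitor() -> Observation:
    return Observation("no2-1", "no2", 41.0, "ug/m3")


web = SensorWeb()
web.register("temp-1", fahrenheit_thermometer)
web.register("no2-1", no2_monitor)

no2_only = web.collect(keep=lambda obs: obs.observed_property == "no2")

# A new sensor joins later; nothing else in the web has to change.
web.register("noise-1", lambda: Observation("noise-1", "sound_level", 63.0, "dB"))
everything = web.collect()
print(no2_only, len(everything))
```

The adapter function is where heterogeneous devices are mapped onto one shared record, which is the role that open standards play in a real sensor web.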

Such geo-sensor webs are a promising technology for current and future urban monitoring and modeling, thanks largely to the efforts of the OGC Sensor Web Enablement Initiative. With open standards and protocols for enhanced operability within and between multiple platforms and vendors, an all-encompassing Sensor Web can become the backbone of an intelligent communication infrastructure.

Collective Sensing

Each sensor or sensor network within a sensor web typically samples only a tiny portion of its surrounding environment.

Urban planners and decision makers understandably want a complete picture and up-to-date information. Although remote sensing often provides the primary data source to achieve a bird's-eye view of an urban setting, such traditionally static maps are increasingly inadequate for representing dynamic urban environments. Meeting these demands requires timely acquisition and analysis of spatial and temporal information for making informed decisions. Consequently, remote sensing can only be considered part of an information system that delivers a more complete picture of urban areas, their inhabitants and the resulting human-environment interactions.

At the personal level, location information, along with sensor information, may be increasingly used to coordinate and adjust plans on the fly and at a distance by receiving up-to-date information on the environment, e.g., transit schedules and traffic flow reports. Mainly in urban areas, sensor information is becoming more integrated, and the notion of sensorial city planning has evolved.

Additionally, the rapid growth in the deployment of smartphones points the way toward mass population mobile computing, networking and sensor technologies. This trend is supported by an increasing availability of wireless networks, allowing for mobility beyond traditional laptop computers.

Beyond Remote Sensing

If a city is considered a living entity, it's clear that the limited rooftop or facade view provided by remote sensing needs to be supplemented with a more complete view of urban landscapes, integrating information from inside buildings, from under the canopy of trees and from anywhere within a 3-D urban space at relatively high temporal and spatial resolutions.

Generally speaking, fine-grained urban sensing, coupled with well-established remote sensing mechanisms, greatly enhances our knowledge of the environment by adding objective and invisible data layers in real time. These systems help us increase our capacity to observe and understand the city as well as the impacts on and by society.

It will be nearly impossible, as well as impractical, to obtain digital measurements for every point across an entire cityscape or landscape. Still, we're increasingly developing from a society with sparsely sampled footprints to a data-rich environment, enabling on-demand analyses of various urban activities and their constituents in space and time. The integration of remote sensing and sensor webs within an OGC framework is expediting this urban reality.

Editor's Note: This is an adapted excerpt from "Collective Sensing: Integrating Geospatial Technologies to Understand Urban Systems - An Overview," Remote Sensing (www.mdpi.com/journal/remotesensing).